
How to research YouTube channels: the two-phase workflow

Most guides on how to research YouTube channels skip the question of which channels are worth researching in the first place. The result is a workflow that inspects channels the researcher already knows about — usually the largest channels in the niche — and produces precise descriptions of channels that already won. Useful channel research starts upstream of that, with discovery, and only then runs the per-channel inspection routine. This guide walks through both phases in order: Phase A surfaces a candidate cohort; Phase B inspects each candidate against a research-goal-specific rubric. Public YouTube Data API v3 metadata only. Built from 2,082 channels scanned to date.

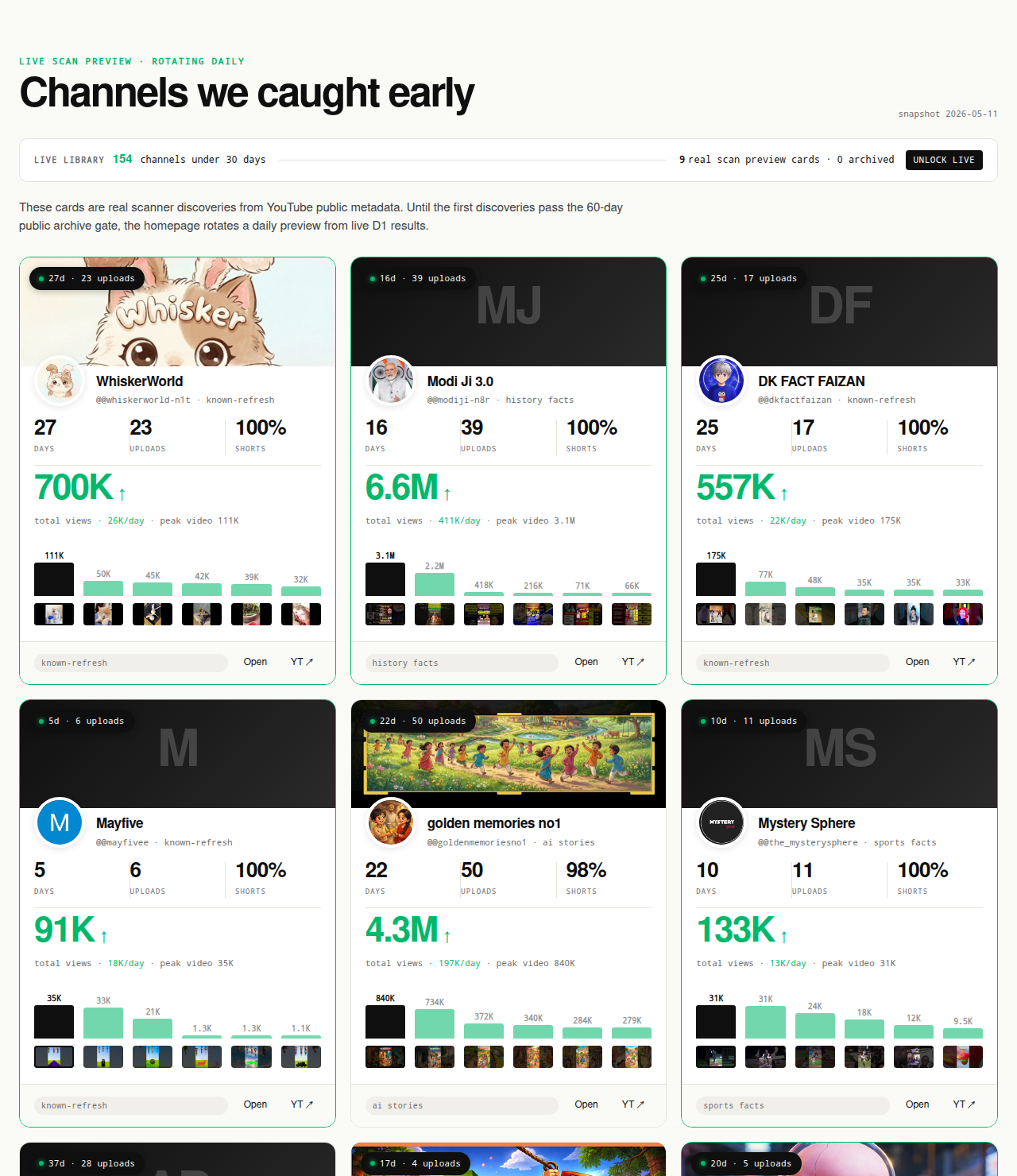

The Friday digest reveals three current breakout channels every week for free, each one a candidate the two-phase workflow on this page can be run against. The live 30-day window — dozens of channels under 30 days old right now — is the paid Phase A surface; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages out of the live window.


Why "how to research YouTube channels" is the wrong starting question

Most readers who type "how to research YouTube channels" into a search bar already have a channel in mind. They want a checklist they can run against that specific channel: what the About tab shows, what the uploads tab looks like, what the subscriber-to-view ratio is, what the cadence is. The expectation is that channel research is per-channel inspection, and the only missing piece is the inspection routine itself. That expectation is the most common reason channel research underperforms.

The hidden assumption is that the channel already in mind is the right channel to research. For almost every research goal, it is not. A creator picking a niche should be researching the small channels currently breaking out at the format-topic intersection, not the largest channel in the niche. An operator vetting a pitched collaboration should be researching a peer cohort at the same scale and format, so the partner's metrics have context. A researcher tracking a market should be tracking the cohort that is currently growing, not the established players.

The corrective is to treat channel research as a two-phase activity. Phase A is discovery: assembling a candidate cohort filtered on public-metadata signals, regardless of whether the researcher has heard of the channels in it. Phase B is inspection: running the per-channel routine against each candidate. Inspecting the wrong channels at high resolution produces precise descriptions of irrelevant channels. Inspecting the right channels at any resolution produces actionable research.

The parent YouTube channel research pillar argues the case for the two-phase frame; this page is the workflow itself, ordered. Sibling pages cover specific steps in more depth — how to find small YouTube channels for Phase A sourcing methods, YouTube competitor analysis for the competitor-scoped subset of Phase B, similar YouTube channel finder for the find-channels-like-X intent.

The two-phase workflow at a glance

The full workflow is two phases and eight steps. Phase A has three steps and runs once per research session. Phase B has five steps, ending in a scoring pass, and runs once per candidate channel. A typical session that targets 10 to 15 channels in a single niche spends 30 to 60 minutes in Phase A and 12 to 20 minutes per channel in Phase B (two to five hours of inspection), for a total of roughly two and a half to six hours depending on how much pre-filtering the Phase A inputs already do.

Phase A — Discovery (run once per session).

- Define the research goal: niche selection, format emulation, collaboration vetting, or competitive vetting. Each goal calls for a different candidate cohort.

- Build the candidate list. Source by format-cluster rather than topic. Use deterministic filters (channel age, first-5 sum, view velocity) instead of subscriber-count thresholds.

- Apply the deterministic discovery filter. Three hard gates plus two score bonuses, all readable from public Data API v3 fields alone.

Phase B — Inspection (run once per candidate channel).

- Read the public-data signature: channel age, subscriber count (with the rounding caveat), video count, total view count.

- Read the format fingerprint: Shorts ratio, video length distribution, upload cadence.

- Sample the recent uploads: titles, thumbnails, first three seconds. What patterns repeat.

- Cross-check against the saturation question: is the cluster still admitting new small channels.

- Score against your research goal. Different research goals call for different scoring rubrics.

The next eight sections of this guide walk through each step in order. Skip ahead to the phase that matches what you are stuck on, but do not skip Phase A entirely; that is the mistake the previous section described.

Phase A, Step 1: define your research goal

Before pulling a single channel into the candidate list, write down the research goal in one sentence. Four goals show up most often, each with a different candidate-cohort requirement.

Niche selection. "I am deciding whether to enter this niche." The candidate cohort is the small channels currently breaking out across multiple candidate niches, compared on breakout density. The wrong cohort is "the biggest channels in each niche" — that surfaces niche size, not niche entry-friendliness, and the two are often inversely correlated at the small-channel layer.

Format emulation. "I have picked a niche and I am deciding which format to run inside it." The candidate cohort is small channels currently breaking out at the format-topic intersection — the ones whose format-fit hypothesis is being validated right now by the recommender. The wrong cohort is "the largest channels in the niche" — their current format is steady-state behavior, not the format-fit hypothesis that produced the breakout.

Collaboration vetting. "I have been pitched a collaboration and I am deciding whether this channel is a real working operation." The candidate cohort is the pitched channel plus a peer-cohort at the same age and view-velocity tier. The wrong cohort is "this channel in isolation" — a 50,000-view channel reads completely differently depending on whether its cohort median is 5,000 or 500,000.

Competitive vetting. "I run a channel and I am deciding which other channels are competing for my audience." The candidate cohort is channels operating at the same format-topic intersection as your channel, regardless of size. The wrong cohort is "channels I already know about" — that surfaces the competition you have noticed, not the competition you have missed.

Writing the goal down up front prevents the most common Phase A drift: starting to do collaboration vetting and ending up doing competitor research because the channel list pulled in adjacent channels. Each goal also calls for a slightly different scoring rubric in Phase B Step 5.

Phase A, Step 2: build the candidate list

The candidate list is the channels that will go through Phase B. The single most important decision in Step 2 is what to filter on. The dominant tool UX in this category is a subscriber-count slider, and that is the wrong primary filter. Subscriber count lags early traction by months; the public Data API rounds it down to three significant figures (YouTube Data API: channels.list); and channel owners can hide the count entirely, which drops those channels from any subscriber-filtered result.

The corrective is to source by format-cluster rather than topic. Format-cluster sourcing means treating "vertical 60-second history shorts with TTS narration and cinematic visuals" as the unit of research, not "history." The format is the replicable part; topic saturates in weeks while the format keeps producing breakouts for months.

Four sourcing methods produce small-channel candidate cohorts. The full procedural walkthrough is on the sibling page how to find small YouTube channels; the abbreviated version:

- YouTube's own search with publish-date filter set to "This week" or "This month" and search type set to Channel. Free, partial coverage, slow.

- Niche-specific communities on Reddit, Discord, and X — operators post their own channels in these surfaces weeks before search indexes them.

- Third-party channel-finder tools: ChannelCrawler (closest to small-channel discovery), Social Blade rising-channels, vidIQ and NoxInfluencer (per-channel-inspection focused).

- Deterministic public-data discovery libraries: NicheBreakout, TubeLab, ChannelCrawler's velocity-filtered views. Pre-filtered cohorts using channel age and view velocity instead of subscriber count.

The target output for Step 2 is 10 to 30 candidate channels per niche. Fewer than 10 makes Phase B noisy; more than 30 burns Phase B time without improving the signal.

Phase A, Step 3: apply the deterministic discovery filter

Step 3 takes the candidate list assembled in Step 2 and applies a hard filter so what reaches Phase B is research-worthy. NicheBreakout applies three hard public-metadata gates to every candidate, then ranks the survivors with a deterministic score that weights two additional signals. The full methodology lives on the methodology page; the abbreviated version below is the signal list a researcher can apply with the public YouTube Data API directly, regardless of whether they are using our library or sourcing manually:

- Channel age: detected within 45 days of channel creation

- First-5 upload views: combined views across the first five public uploads ≥ 10,000

- Views per day: lifetime channel views ÷ channel age ≥ 1,000

- Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000

Each of the three hard gates isolates a different piece of the early-traction picture. Channel age ≤ 45 days filters discovery to channels where recommendation surfaces — not subscribers — are doing the audience-finding work, which is the cohort where format-fit signal is readable without subscriber-inertia confound. First-five-video sum views ≥ 10,000 filters out channels whose first uploads landed flat; five uploads sharing 10,000 views indicates a working content vehicle rather than a single lucky upload. Lifetime views per day ≥ 1,000 is the cleanest velocity check available from public metadata alone, because watch time and impressions live behind the YouTube Analytics API and cannot be third-party-verified.

The two score bonuses sharpen ranking inside the filtered set. Format clarity rewards channels with a consistent Shorts-first or long-form-first ratio; format-mixed channels are harder to classify, harder to copy, and slower to compound on the recommender side. Early-traction velocity (channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000) pushes the freshest, fastest-moving channels to the top of the ranking inside any niche.
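The three gates and two bonuses can be sketched in a few lines of Python. This is a minimal illustration, not NicheBreakout's scoring code: the `Candidate` fields and function names are hypothetical, the 80%/20% format-clarity cutoffs are borrowed from the Shorts-ratio bands in Phase B Step 2, and the ranking rule (bonus count first, then views per day) is an assumption, since the exact score weights are not given here.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    channel_id: str
    age_days: int        # days since channel creation
    first5_views: int    # combined views across the first five public uploads
    lifetime_views: int  # total channel view count
    shorts_ratio: float  # share of uploads that are Shorts, 0.0 to 1.0


def views_per_day(c: Candidate) -> float:
    # Guard against a zero-day-old channel.
    return c.lifetime_views / max(c.age_days, 1)


def passes_hard_gates(c: Candidate) -> bool:
    return (
        c.age_days <= 45
        and c.first5_views >= 10_000
        and views_per_day(c) >= 1_000
    )


def bonus_flags(c: Candidate) -> dict:
    return {
        # Assumed cutoffs: above 80% or below 20% Shorts counts as a clear format.
        "format_clarity": c.shorts_ratio > 0.8 or c.shorts_ratio < 0.2,
        "early_traction": (
            c.age_days <= 14
            or c.first5_views >= 50_000
            or views_per_day(c) >= 5_000
        ),
    }


def filter_and_rank(candidates: list) -> list:
    survivors = [c for c in candidates if passes_hard_gates(c)]
    # Assumed ranking: more bonuses first, then higher views per day.
    return sorted(
        survivors,
        key=lambda c: (sum(bonus_flags(c).values()), views_per_day(c)),
        reverse=True,
    )
```

Calling `filter_and_rank` on the Step 2 candidate list returns only the channels that clear all three gates, with the freshest and fastest-moving ones first.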

Average first-five-video views for every populated grade tier inside our discoveries cohort looks like this (grades with no current members are suppressed until they fill in):

The three hard gates are research-goal-agnostic — they apply to niche-selection, format-emulation, and competitive-vetting research equally. Collaboration-vetting research relaxes the channel-age gate (a pitched collaborator can be at any age) but keeps the first-5 and view-velocity gates because those are the signals that distinguish a real working channel from a vanity channel at the same subscriber count.

Phase B, Step 1: read the channel's public-data signature

Phase B starts per-channel. Open the candidate channel's About tab, or call the channels.list endpoint with the channel ID. Capture four numbers into the research spreadsheet: channel age, subscriber count, video count, total view count. Everything in Phase B is interpreted against them.

Channel age. Today minus the channel's join date. Under 45 days is the cohort where format-fit signal is cleanest. 45 to 90 days is transitional. Over 90 days is downstream of recommender-trained audience momentum and should be interpreted as steady-state behavior, not as a format-fit hypothesis.

Subscriber count. Two caveats. The public Data API rounds subscriber counts down to three significant figures (a channel showing 14,500 might actually have 14,567); for any inspection that depends on small deltas, this matters. And channels can hide the count entirely — if the About tab shows no count, the field is unavailable, not zero.
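The rounding caveat is easy to quantify. The sketch below assumes the round-down-to-three-significant-figures behavior described above and shows how wide the band of possible true counts is behind any displayed figure; both function names are hypothetical.

```python
def displayed_subscriber_count(true_count: int) -> int:
    """Round a true count down to three significant figures."""
    if true_count < 1000:
        return true_count
    factor = 10 ** (len(str(true_count)) - 3)
    return (true_count // factor) * factor


def possible_true_range(displayed: int) -> tuple:
    """Smallest and largest true counts that would display as `displayed`."""
    if displayed < 1000:
        return displayed, displayed
    factor = 10 ** (len(str(displayed)) - 3)
    return displayed, displayed + factor - 1
```

A displayed 14,500 therefore covers any true count from 14,500 to 14,599, so week-over-week deltas smaller than 100 are invisible at that scale.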

Video count. Read off the Videos tab. Combined with channel age, this gives upload cadence. A 14-day-old channel with 28 videos publishes twice daily; a 90-day-old channel with 8 videos publishes roughly once every 11 days.

Total view count. Combined with channel age, this gives lifetime views per day — the cleanest velocity signal in public metadata. Below 100 views/day suggests a channel publishing without finding an audience; 1,000 to 5,000 is the baseline breakout band; above 5,000 is the velocity-bonus tier that warrants moving the channel to the top of the inspection queue.

These four numbers take five minutes to capture and frame the rest of Phase B. The format fingerprint reads differently for a 14-day-old channel than for a 90-day-old channel, and that context comes from Step 1.
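For researchers calling the API instead of reading the About tab, the four numbers and the two derived rates can be pulled from one `channels.list` item requested with `part=snippet,statistics`. The sketch below is hedged: `public_signature` is a hypothetical helper, it assumes `snippet.publishedAt` is an ISO 8601 timestamp ending in `Z`, and it relies on the API returning the statistics counts as strings.

```python
from datetime import datetime, timezone


def public_signature(item: dict, today: datetime) -> dict:
    """Derive the Step 1 numbers from one channels.list response item."""
    stats = item["statistics"]
    created = datetime.fromisoformat(
        item["snippet"]["publishedAt"].replace("Z", "+00:00")
    )
    age_days = max((today - created).days, 1)

    # A hidden subscriber count is unavailable, not zero.
    hidden = stats.get("hiddenSubscriberCount", False)
    has_count = "subscriberCount" in stats and not hidden
    subscribers = int(stats["subscriberCount"]) if has_count else None

    video_count = int(stats["videoCount"])
    total_views = int(stats["viewCount"])
    return {
        "age_days": age_days,
        "subscribers": subscribers,
        "video_count": video_count,
        "total_views": total_views,
        "views_per_day": total_views / age_days,
        "uploads_per_day": video_count / age_days,
    }
```

Pass a timezone-aware `today` (for example `datetime.now(timezone.utc)`) so the subtraction against the channel's creation timestamp is valid.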

Phase B, Step 2: read the format fingerprint

The format fingerprint is the description of what the channel actually produces, computed deterministically from the public Videos tab. Three components.

Shorts ratio. Count videos under 60 seconds, divide by total video count. Above 80% Shorts is Shorts-first; below 20% is long-form-first; 20% to 80% is format-mixed. Format-mixed channels are harder to classify, harder to copy, and slower to compound on the recommender side because the audience profile is contradictory. The cleanest format-emulation targets sit at one extreme.

Video length distribution. Compute the median and range of durations. A channel running uploads of 45 to 75 seconds uniformly is signaling a tight format; a channel running 30 seconds to 9 minutes is signaling format experimentation. Tight ranges produce more readable cohort comparisons.

Upload cadence. Read the publish dates of the first 10 to 20 uploads and compute the median inter-upload gap. A working channel typically locks a tight cadence (daily, every other day, twice a week) in the first 30 days. Channels that publish three uploads in week one and then nothing for two weeks teach the recommender an unstable channel profile, and the format-fit signal cools.

The format fingerprint takes three to five minutes per channel. Output: three numbers (Shorts ratio, median duration, median upload gap) plus a one-word format label.
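The three fingerprint numbers and the label can be computed as follows. This is a sketch under stated assumptions: `format_fingerprint` is a hypothetical helper, durations are already converted to seconds, publish times are `datetime` objects, and the 60-second cutoff is a parameter so it can be adjusted if your definition of a Short differs.

```python
from datetime import datetime
from statistics import median


def format_fingerprint(durations_s, publish_times, shorts_max_s=60):
    """Return Shorts ratio, median duration, median upload gap, and a label."""
    if not durations_s:
        raise ValueError("need at least one video duration")

    shorts = sum(1 for d in durations_s if d < shorts_max_s)
    shorts_ratio = shorts / len(durations_s)

    if shorts_ratio > 0.8:
        label = "shorts-first"
    elif shorts_ratio < 0.2:
        label = "long-form-first"
    else:
        label = "mixed"

    # Gaps between consecutive uploads, in days.
    ordered = sorted(publish_times)
    gaps = [
        (later - earlier).total_seconds() / 86400
        for earlier, later in zip(ordered, ordered[1:])
    ]
    return {
        "shorts_ratio": shorts_ratio,
        "median_duration_s": median(durations_s),
        "median_gap_days": median(gaps) if gaps else None,
        "label": label,
    }
```

Feed it the first 10 to 20 uploads, matching the sample size suggested for the cadence read.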

Phase B, Step 3: sample the recent uploads

Step 3 leaves the numbers and reads the uploads themselves. Open the Videos tab, sort by most recent, and sample three to five videos from the last two weeks. For each video, read the title, look at the thumbnail, and watch the first three to five seconds. The question is not "is this video good." The question is "what patterns repeat across the sample."

Title patterns. Working small channels settle on a tight title template inside the first 10 to 20 uploads. The template might be "[NUMBER] [SUPERLATIVE] [TOPIC]" ("5 strangest battles in Roman history"), "[QUESTION]?" ("Did the Library of Alexandria really burn?"), or "POV: [SCENARIO]." A channel running every upload under a different pattern is still iterating; a channel running a locked template is past that iteration and is now testing topics inside the template.

Thumbnail patterns. Read off the visual template: dominant color, text presence, text size, face presence, composition. Channels currently breaking out usually have a consistent thumbnail style across their first 10 to 20 uploads. Thumbnails that drift between styles tell you the channel is still iterating on the click-through hypothesis.

First-three-second patterns. Write down the opening device: hook question, statement of stakes, jump-cut into action, voice-over over visual, text-on-screen reveal. A consistent opening device across the sample is a strong format-fit signal; an inconsistent opening is iteration noise.

Output: a one-line description of the title/thumbnail/opening template — for example, "POV-titled history shorts with red-text thumbnails and statement-of-stakes openings."

Phase B, Step 4: cross-check against the saturation question

Step 4 zooms out and asks a cohort-level question: is the format-topic intersection this channel operates in still admitting new small channels, or is it saturated? Format-emulation research most often fails here — the candidate channel is doing everything right, but the cluster is no longer a path for new entrants because too many channels are already running the same format on the same topics.

Channel-age distribution of the breakout cohort. If the channels currently breaking out at this format-topic intersection are all 60 to 90 days old with no 14-day or 30-day channels in the cohort, the cluster is admitting fewer new entrants. If the cohort has a wide age spread, including channels under 30 days old breaking out, the cluster is still open.

Topic-overlap rate. Open the candidate plus three to five peer channels and read the titles of the last 10 uploads on each. If every channel has videos about the same five or six topics, the cluster is in the saturation phase. If the topic lists are largely distinct, the cluster is still in the format-experimentation phase.

Per-upload view trend. Read the view counts on the last five uploads across the candidate and its peers. If recent uploads are systematically under-performing earlier uploads on the same channels, the cluster is cooling. If recent uploads are at or above the earlier baseline, the cluster is healthy.

Step 4 takes two to three minutes per cluster and produces a one-line verdict: cluster open, cluster closing, cluster saturated. A "saturated" verdict can disqualify a channel from format-emulation research even when every other Phase B step scores well.
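The three saturation checks can be made repeatable with a few small helpers. Everything here is an illustrative assumption rather than the workflow's official arithmetic: topic labels are tagged by hand from the titles, overlap is measured as mean pairwise Jaccard similarity, and the view trend is the ratio of the median of the last five uploads to the median of the earlier ones.

```python
from itertools import combinations
from statistics import median


def has_young_breakouts(cohort_ages_days, young_cutoff=30):
    """True if any breakout channel in the cohort is under the cutoff age."""
    return any(age < young_cutoff for age in cohort_ages_days)


def topic_overlap_rate(topics_by_channel: dict) -> float:
    """Mean pairwise Jaccard overlap of hand-tagged topic sets (0.0 to 1.0)."""
    pairs = list(combinations(topics_by_channel.values(), 2))
    if not pairs:
        return 0.0
    total = sum(len(a & b) / len(a | b) for a, b in pairs if a | b)
    return total / len(pairs)


def recent_view_trend(views_oldest_first, recent_n=5):
    """Median views of the last `recent_n` uploads divided by the earlier median."""
    if len(views_oldest_first) <= recent_n:
        return None  # not enough history to compare
    earlier = views_oldest_first[:-recent_n]
    recent = views_oldest_first[-recent_n:]
    baseline = median(earlier)
    return median(recent) / baseline if baseline else None
```

Recent uploads have had less time to accumulate views, so a trend ratio somewhat below 1.0 is expected even in a healthy cluster; read it alongside the other two checks before calling a cluster cooling.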

Phase B, Step 5: score against your research goal

The final step ties Phase B back to the research goal from Phase A Step 1. Different goals call for different scoring rubrics.

Niche-fit scoring (niche-selection research). Score the cluster, not the individual channel. Variables: breakout density (how many cohort channels meet the three hard gates), age spread (whether the cohort includes channels under 30 days old), and Step 4's saturation verdict. The highest-scoring niche is the one to enter; individual channels are evidence inputs, not the decision target.

Emulation-fit scoring (format-emulation research). Score the channel-format combination. Variables: format clarity (Shorts ratio extremity, video-length tightness), title-and-thumbnail template stability, upload cadence regularity, and opening-device consistency. The highest-scoring channels are the ones to read the format off of. Topic is intentionally not in this rubric — emulation copies format, not topic.

Collab-fit scoring (collaboration vetting). Score against the peer cohort, not against an absolute threshold. Variables: peer-relative views/day, peer-relative first-5 sum, peer-relative upload cadence, and operator self-presentation (channel description coherence, About tab completeness, country and contact info filled in). A channel below cohort on every variable but pitched a collaboration is worth pausing on.

Competitive-fit scoring (competitive vetting). Score against your own channel. Variables: format-topic overlap (high overlap = direct competition), audience-acquisition velocity (a channel with higher views/day at lower age is acquiring audience faster than yours), and recommendation-surface overlap. The sibling page YouTube competitor analysis covers this rubric in depth.

The scoring rubric is the variable that most often goes implicit — researchers run Phase B steps 1 through 4 without writing down what they are scoring for, and end up with a row of metrics and no decision criterion.
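Writing the rubric down can be as simple as a function. The sketch below covers the collab-fit case only, scoring a pitched channel as a ratio to its peer-cohort median on each variable; the helper names and dictionary keys are hypothetical, and the numbers in the usage lines are illustrative.

```python
from statistics import median

DEFAULT_KEYS = ("views_per_day", "first5_views", "uploads_per_week")


def peer_relative(channel: dict, peers: list, keys=DEFAULT_KEYS) -> dict:
    """Ratio of the channel's value to the peer-cohort median for each key."""
    ratios = {}
    for key in keys:
        baseline = median([p[key] for p in peers])
        ratios[key] = channel[key] / baseline if baseline else None
    return ratios


def below_cohort_everywhere(ratios: dict) -> bool:
    """True when every available ratio is under 1.0, the 'worth pausing on' case."""
    known = [r for r in ratios.values() if r is not None]
    return bool(known) and all(r < 1.0 for r in known)


pitched = {"views_per_day": 50_000, "first5_views": 20_000, "uploads_per_week": 7}
small_cohort = [
    {"views_per_day": v, "first5_views": 20_000, "uploads_per_week": 7}
    for v in (4_000, 5_000, 6_000)
]
print(peer_relative(pitched, small_cohort))
```

The same channel scored against a cohort whose median is 500,000 views per day would come out at 0.1 on that variable instead of 10, which is why the rubric scores against peers and not an absolute threshold.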

What we deliberately don't research

The two-phase workflow above operates entirely on public YouTube Data API v3 fields. A specific set of metrics this workflow does not touch, by design: watch time, audience retention, click-through rate on impressions, average view duration, traffic source breakdown, audience demographics, subscriber geography, RPM, CPM, and revenue. Those all live behind the authenticated YouTube Analytics API, which Google restricts to channel owners and authenticated content partners (YouTube Data API: channels.list; the Analytics API documentation describes the authentication boundary). No third party — including us, vidIQ, Social Blade, NoxInfluencer, ChannelCrawler, or TubeBuddy — has access to those fields for a channel they do not own.

Any step that depends on watch time, retention, or RPM is not a public-data research step; it is either inference from public data with assumptions the researcher cannot audit, or fabrication. The Step 3 sampling reads opening-device patterns, not retention curves. The Step 5 scoring weights views/day and first-5 sum, not RPM-adjusted earnings. The workflow stays inside the public-metadata boundary because that is the only boundary where the same workflow can be run against any channel without owner cooperation.

The workflow also does not produce AI-generated channel narratives. The Step 3 opening-device read is descriptive, not causal — it says what the channel does, not why the recommender rewards it. The Step 5 scoring is deterministic against the variables in the rubric, not a synthesized prose narrative. Every variable is computable from public Data API fields a researcher can verify by clicking through to the channel page; if a claim cannot survive that outbound-link audit, it does not belong in the workflow.

Common channel-research mistakes

Six mistakes recur, each correctable with a discipline change rather than a tool change.

Researching the biggest channels in a niche. The largest channels are downstream of years of recommender-trained audience momentum; their current strategy is steady-state behavior, not the format-fit hypothesis a new entrant should copy. Filter for channels under 45 days old in Phase A Step 3, not "biggest channels in X" listicles.

Ignoring channel age. Researchers read view count and upload count without anchoring to channel age, then compare a 14-day-old channel's metrics to a 14-month-old channel's as if they were the same data. Channel age is the denominator everything else normalizes against. Capture it first.

Copying topic without copying format. A working channel is a format-topic intersection. A researcher who copies the topic list of a viral history-shorts channel but films it as a face-on-camera long-form documentary is running a different channel from the recommender's perspective and the early-traction signal flatlines. Read the format off the candidate in Phase B Step 2; topic saturates in weeks, format keeps producing breakouts for months.

Drawing private-metric conclusions from public data. "This channel's audience must love it because the retention is high" is a conclusion the public Data API cannot support — retention is not exposed. Phrase conclusions in terms of the variables the workflow actually measures: first-5 sum quartile, views/day threshold, title-template consistency. Those claims are auditable.

Skipping Phase A entirely. Jumping straight to Phase B Step 1 on a channel you already knew about produces a precise description of one channel and no comparative context. Run Phase A even when the goal is "audit this specific channel" — the peer cohort becomes the comparison baseline in Step 5.

Researching channels once and discarding the data. Keep a running spreadsheet with one row per candidate channel and columns for every Phase B variable. The spreadsheet compounds into a corpus of channel-level evidence downstream workflows can run against.
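One low-effort way to keep that running spreadsheet is an append-only CSV with a fixed column set. The sketch below is one possible layout, not a prescribed schema: the `ChannelRow` fields are hypothetical names for the Phase B outputs described above.

```python
import csv
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class ChannelRow:
    channel_id: str
    captured_on: str         # ISO date the row was recorded
    goal: str                # research goal from Phase A Step 1
    age_days: int
    views_per_day: float
    shorts_ratio: float
    median_gap_days: float
    format_label: str
    template_note: str       # one-line title/thumbnail/opening description
    saturation_verdict: str  # open, closing, or saturated


def append_row(path, row: ChannelRow) -> None:
    """Append one channel to the CSV, writing the header on first use."""
    path = Path(path)
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fl.name for fl in fields(ChannelRow)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(row))
```

Recording `captured_on` with every row means a channel re-inspected later adds a second row instead of overwriting the first, which is what lets the file compound into a history.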

The clusters currently producing the most research-worthy channels in our scans

The two-phase workflow above is niche-agnostic — it tells you how to research channels regardless of which niche the candidates come from. The downstream question is which niches are currently producing the most research-worthy small channels, because that is where the workflow's effort-per-discovered-channel ratio is best. Across the dozens of channels currently in our live 30-day window (a subset of the broader 2,082-channel scan), the densest niche clusters currently meeting our sample-size threshold are:

This is what we have observed inside our scanned cohort, not a market-wide claim, and it shifts week over week as new format clusters surface and older ones saturate. Read it as a current snapshot. The Shorts-first vs long-form split inside those top clusters:

| Niche | Shorts-first % | Long-form-first % | Mixed % | Sample |
|---|---|---|---|---|
| Celebrity Trending News & Viral Moments | 100% | 0% | 0% | 10 |

The clusters that consistently produce the most research-worthy channels are usually faceless or Shorts-first formats because those formats have the lowest production cost per upload, which lets a single operator publish enough times inside the 45-day window to produce readable signal. Five recurring clusters have dedicated programmatic topic pages indexing the currently-breaking-out channels, each one a pre-filtered Phase A input the two-phase workflow can be run against:

- AI story channels: TTS narration plus AI imagery, Shorts-first publishing.

- Reddit story channels: TTS reading r/AmITheAsshole, r/ProRevenge threads with stock visuals or character overlay.

- History shorts channels: fact-stacking with cinematic visuals.

- Faceless storytelling channels: broader narrative format spanning fiction and non-fiction.

- Quiz channels: interactive Q&A format, often Shorts-first with text overlays.

Each programmatic page is a Phase A output a researcher can drop directly into Phase B without sourcing the cluster from scratch.

FAQ

How do I research a YouTube channel?

Run two phases in order. Phase A is discovery: decide what you are researching for (niche selection, format emulation, collaboration vetting, or competitive vetting), assemble a candidate channel list filtered on public-metadata signals (channel age, first-five-video sum, view velocity), and apply a deterministic filter so the cohort is not just channels you already know about. Phase B is inspection, run per-channel: read the channel's public-data signature (age, subscribers, video count, total views), read the format fingerprint (Shorts ratio, video length distribution, upload cadence), sample recent uploads for repeating patterns, cross-check the niche saturation question, and score against the specific research goal. The full walkthrough — both phases, every step — is the body of this guide.

What data can I get on someone else's YouTube channel?

Through the public YouTube Data API v3: the channels.list endpoint returns channel ID, channel title, channel description, channel creation date, country (if set), custom URL (if set), subscriber count (rounded down to three significant figures by the API, hidden if the channel has hidden subscribers), total view count, video count, upload playlist ID, channel banner, and channel thumbnail; the playlistItems.list and videos.list endpoints return per-video metadata including title, description, publish date, duration, view count, like count, comment count, and tags. Not available without authentication for a channel you do not own: watch time, audience retention, RPM, average view duration, traffic sources, subscriber demographics, geographic breakdown, click-through rate on impressions, and revenue. Those live behind the YouTube Analytics API and are restricted to channel owners. Any third party selling those metrics for a channel they do not own is either inferring from non-API sources or fabricating the number.

How do I find channels to research?

Filter on signals the recommender has not yet priced in: channel age, first-five-video performance, and lifetime view velocity. The deterministic version is the three-gate filter we apply in our own library — channel age ≤ 45 days, first-five-video sum views ≥ 10,000, lifetime views per day ≥ 1,000 — which surfaces the small-channel-breakout cohort rather than the established-channel cohort the autocomplete-driven tools surface. The sibling guide how to find small YouTube channels walks through four concrete sourcing methods (YouTube's own publish-date filter, niche communities, third-party tools, deterministic libraries). Starting from a list of channels you already know about — "the biggest finance channels," "the top history shorts channels" — biases the cohort toward channels that already won, which is the wrong target for almost every research goal.

What's the difference between channel research and competitor analysis?

Competitor analysis is one specific research goal — studying the existing players inside a niche you have already chosen — and it is a strict subset of channel research. Channel research is the broader activity that also includes research for niche selection ("is this niche worth entering?"), research for format emulation ("what format is currently producing breakouts I could run?"), and research for collaboration vetting ("is this channel a real working operation or a vanity project?"). Each goal calls for a different candidate cohort and a different scoring rubric. Competitor analysis runs against channels at the same format-topic intersection as a channel you have already committed to; niche-selection research runs across multiple candidate niches; emulation research targets small breakout channels, not large established competitors. The dedicated sibling page YouTube competitor analysis covers the competitor-scoped workflow; this page covers the broader frame.

How long does YouTube channel research take?

A single channel run through the full Phase B inspection workflow takes 12 to 20 minutes once the researcher knows the steps: five minutes on the public-data signature, three to five on the format fingerprint, three to five on a sample of recent uploads, two on the saturation cross-check, and a final scoring pass. Phase A, discovery, is where the time variance lives. A researcher with a pre-filtered cohort (NicheBreakout's library, ChannelCrawler's age-filtered views, a curated niche-community shortlist) can be in Phase B in minutes. A researcher sourcing from scratch through manual YouTube search, niche communities, and tool filters can spend two to four hours on Phase A alone. The honest budget for a research session targeting 10 to 15 channels in a single niche: two to five hours of Phase B inspection when Phase A is pre-done, and most of a working day when Phase A is sourced from scratch.

Can I research deleted YouTube channels?

Partially, and not through any tool that claims to recover live data. Once a YouTube channel is deleted, the YouTube Data API channels.list endpoint returns an empty item list for the requested channel ID (effectively a 404) and the channel page on youtube.com returns "This channel does not exist." Third-party "deleted channel" research relies on historical scrapes: Wayback Machine (archive.org) snapshots of the channel page, Social Blade's archived metadata, and occasionally Reddit threads where someone preserved a screenshot. If a channel was deleted recently and you have the channel ID or @handle, those archive sources will usually surface the last public snapshot. If the channel was deleted before any third party scraped it, the public record is gone. Deleted-channel research is out of scope for this workflow: every step in it assumes the channel is currently live and queryable.

Is there a free tool for researching YouTube channels?

Several, at different layers of the workflow. For Phase A discovery: YouTube's own search has upload-date and channel-type filters built in, ChannelCrawler's basic directory is free, Social Blade's rising-channels view is free, and NicheBreakout's Friday digest sends three current breakout channels every week with outbound YouTube links. For Phase B inspection: every public Data API field is accessible by clicking through to the channel's About tab or by calling the API with a free Google Cloud project (at one quota unit per channels.list call, the default 10,000-unit daily quota covers thousands of channel lookups). vidIQ and TubeBuddy free tiers wrap the API with better UX for per-channel inspection. The paid surfaces in this category buy speed, cohort filters, and historical depth. They do not buy access to fields the API itself does not expose.
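The quota arithmetic can be sketched as follows. The constants are assumptions to verify against the current API documentation: one quota unit per channels.list call and up to 50 comma-separated channel IDs per call. The helper names are hypothetical.

```python
import math

MAX_IDS_PER_CALL = 50   # assumption: channels.list accepts up to 50 IDs per call
UNITS_PER_CALL = 1      # assumption: channels.list costs 1 quota unit per call
DAILY_QUOTA = 10_000    # default daily quota for a free Google Cloud project


def batch_ids(channel_ids, size=MAX_IDS_PER_CALL):
    """Split a list of channel IDs into chunks small enough for one call each."""
    return [channel_ids[i:i + size] for i in range(0, len(channel_ids), size)]


def quota_units(n_channels, batched=True):
    """Estimate the quota units needed to look up n_channels channels."""
    calls = math.ceil(n_channels / MAX_IDS_PER_CALL) if batched else n_channels
    return calls * UNITS_PER_CALL


# Looking up 2,082 channels one ID at a time versus 50 IDs at a time.
print(quota_units(2082, batched=False), "units unbatched")
print(quota_units(2082), "units batched")
print(quota_units(2082) <= DAILY_QUOTA)
```

Under these assumptions, per-channel lookups are a small share of the daily budget either way; other endpoints may cost more per call, so the estimate applies only to channels.list.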

What public data should I prioritize when researching a channel?

In order: channel age, first-five-video view distribution, lifetime view velocity (total views ÷ channel age), upload cadence, and format consistency across the first 10 to 20 uploads. Subscriber count is a secondary signal at the small-channel layer because it lags early traction by months, is rounded to three significant figures by the API, and can be hidden by the channel owner. Watch time, retention, RPM, traffic sources, and audience demographics are not public for any channel you do not own — they sit behind the YouTube Analytics API and are not exposed to third parties. The five signals above are computable from public metadata alone and together describe whether a channel is currently breaking out, has already won and is steady-state, or is publishing without finding an audience.
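A minimal sketch of how four of these signals could be computed from public fields, assuming the channel creation timestamp (snippet.publishedAt), lifetime view count (statistics.viewCount, converted to an integer), and per-video publish dates and view counts have already been fetched. The function name, the output keys, and the sample numbers are hypothetical. Format consistency is left out because it needs a judgment pass over titles and thumbnails, not arithmetic.

```python
from datetime import datetime, timezone
from statistics import median


def parse_ts(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp with a trailing 'Z' into an aware datetime."""
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def channel_signals(channel_published_at, total_views, uploads, now):
    """uploads: list of (published_at, view_count) tuples, in any order."""
    age_days = max((now - parse_ts(channel_published_at)).days, 1)
    ordered = sorted(uploads, key=lambda u: parse_ts(u[0]))  # oldest first
    dates = [parse_ts(ts) for ts, _ in ordered]
    # Days between consecutive uploads; the median resists one-off long gaps.
    gaps = [(b - a).total_seconds() / 86400 for a, b in zip(dates, dates[1:])]
    return {
        "age_days": age_days,
        "views_per_day": total_views / age_days,  # lifetime view velocity
        "first_five_views": [views for _, views in ordered[:5]],
        "median_gap_days": median(gaps) if gaps else None,  # upload cadence
    }


# Hypothetical channel: created 30 days before the reference date.
signals = channel_signals(
    "2026-04-12T00:00:00Z",
    600_000,
    [
        ("2026-04-19T00:00:00Z", 40_000),
        ("2026-04-13T00:00:00Z", 1_000),
        ("2026-04-22T00:00:00Z", 150_000),
        ("2026-04-16T00:00:00Z", 5_000),
    ],
    now=datetime(2026, 5, 12, tzinfo=timezone.utc),
)
print(signals)
```

Passing the reference date in explicitly keeps the calculation reproducible, so two researchers scoring the same snapshot get the same numbers.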

Methodology / About this analysis

NicheBreakout's research relies entirely on YouTube Data API v3 public fields: channel age, subscriber count, video count, view count, video metadata, video publish dates, video duration, and recent video performance. The two-phase workflow on this page is the procedural readout of how to apply those public fields to channel research, ordered so Phase A discovery sits upstream of Phase B inspection. No private metrics (watch time, retention, RPM, audience demographics, traffic sources) appear in any step of the workflow or in any claim on this page.

Original-research artifacts in this article: the two-phase workflow framing, the four research-goal rubrics (niche selection, format emulation, collaboration vetting, competitive vetting), the deterministic three-gate Phase A filter, the five-step Phase B per-channel routine, the saturation-question cross-check, the list of six common mistakes, and the revealed channel cards above the fold. The cluster mix reflects what we have scanned, not all of YouTube. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.

Related research

- YouTube channel research: parent pillar — the hub/argument page that frames discovery and inspection as two layers this workflow runs in order.

- YouTube channel finder: sibling cluster on the tool-category framing of Phase A discovery.

- Similar YouTube channel finder: sibling cluster on the "find channels like X" sub-intent that produces one kind of Phase A candidate list.

- YouTube competitor analysis: sibling cluster on the competitor-scoped subset of Phase B inspection, with the competitive-fit scoring rubric in depth.

- How to find small YouTube channels: sibling cluster on the Phase A sourcing methods (four-method comparison).

- YouTube niche finder: sister pillar covering niche selection upstream of channel research.

- Faceless YouTube niches: sister pillar covering the faceless production-mode angle.

- YouTube Shorts trends: sister pillar covering the Shorts-first publishing surface.

- YouTube outlier finder: sister pillar covering the breakout-discovery framing applied to any channel type.

- Most profitable YouTube niches: companion listicle backed by examples from the live cohort.

- How to do YouTube niche research: niche-selection workflow that consumes the candidate channels surfaced by this page.

- YouTube niche validation checklist: deterministic checklist version of the niche-research workflow.

- AI story channels, Reddit story channels, history shorts channels, faceless storytelling channels, and quiz channels: programmatic topic pages indexing currently-breaking-out channels by cluster — each one a Phase A output ready for Phase B inspection.

The Friday digest sends three current breakout channels every week — exactly the Phase A output the workflow on this page is designed to consume, pre-assembled. See pricing for the current paid library tier; subscribe to the digest free.

Skip Phase A — open the live library

Every channel in the library has cleared the same Phase A discovery filter the workflow above describes, so you can start at Phase B Step 1. Each card outbound-links to YouTube so the inspection routine can run against the public Data API fields directly.