
YouTube niche validation checklist: 12 deterministic checks, public data only

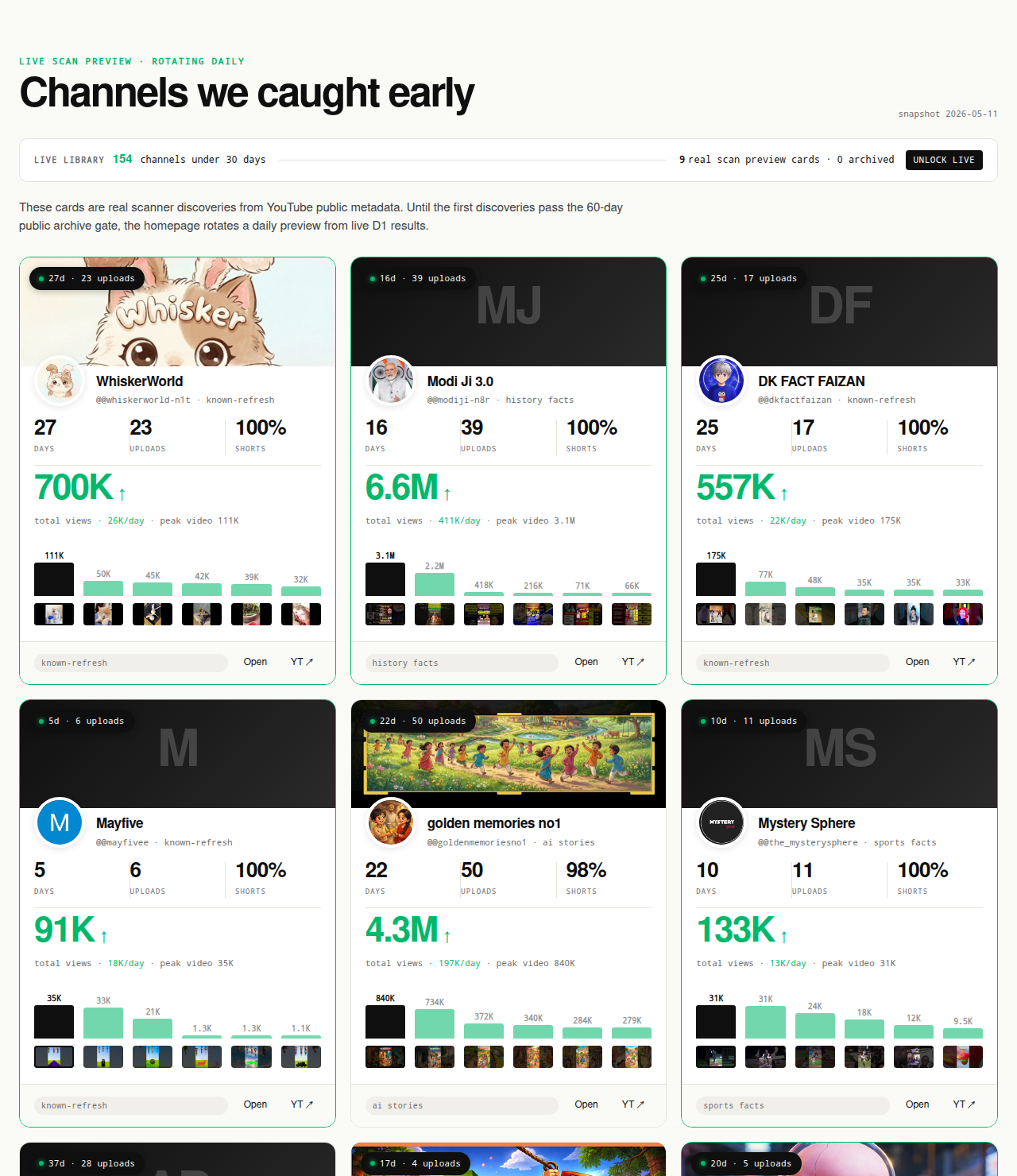

A YouTube niche validation pass should take twenty minutes per candidate niche, not three weeks. The version below is twelve yes/no checks resolved against public YouTube Data API v3 metadata — channel age, upload count, first-five-video view sum, views per day, format clarity. Pass every check and the niche is publishable; fail any check and the niche needs more research or a pivot. The page is the checklist. NicheBreakout's research base is 2,082 channels scanned to date, public metadata only, and the same flagging thresholds the live library applies are the thresholds the checklist enforces here.

The Friday digest reveals three current breakout channels every week for free. The live 30-day window — dozens of channels under 30 days old right now — is the paid workflow surface; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages out.


Why most niche validation is unfalsifiable

Open the first page of search results for "validate a YouTube niche" and the advice repeats: pick a niche you are passionate about, make sure there is an audience, check that you can produce content sustainably, confirm the niche aligns with your long-term goals. None of those tests can be failed. Passion is self-assigned; "an audience exists" is true for almost any niche; "you can produce sustainably" is a forecast about your own discipline; "long-term goals" are not a niche-level variable. A check a creator cannot fail does not validate anything — it is a vocabulary exercise that closes with the answer it opened with.

A falsifiable check has a public threshold behind it. "Do three small channels under 90 days old in this niche show first-5 sum views above 10,000?" is falsifiable — a researcher either finds them or does not. "Is the niche aligned with your passion?" is not falsifiable, because the answer is whatever the researcher reports about themselves. The structural condition the checklist holds itself to: every check must be answerable by reading public Data API v3 metadata or by making a falsifiable observation, and every check must close with a one-line pass/fail rubric.

The reframe fits how the YouTube recommender actually evaluates a new channel. The recommender does not read your passion. It reads your first-five-video click-through and watch-through against the format cohort it has assigned the channel to. The variables a public-metadata checklist can read are the closest public-data proxies for what the recommender will read when you publish.

How to use this checklist

Twenty minutes per candidate niche. Open the YouTube site in a fresh tab, work through the twelve checks below in order, record a yes or a no for each one, and stop the moment any check returns a no. The checklist is binary: all twelve must return yes for the niche to pass validation, and a single no is enough to fail the pass. If you fail, the next move is to either pivot the niche definition (usually toward a narrower format-topic intersection) or move to a different candidate niche entirely — not to spend more time trying to talk yourself into a yes on the failed check. Each check below closes with a pass/fail rubric in a single line; that is the only sentence in the section that determines the verdict. The prose above the rubric explains why the check exists; the rubric is the answer.
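The stop-at-the-first-no rule can be sketched as a short-circuit pass over the recorded answers. This is a minimal illustration, not NicheBreakout's tooling; the function name `first_failed_check` and the list-of-booleans input are assumptions made for the example.

```python
from typing import Optional, Sequence

TOTAL_CHECKS = 12  # the checklist has twelve checks


def first_failed_check(answers: Sequence[bool]) -> Optional[int]:
    """Return the 1-based number of the first "no", or None if all twelve are "yes".

    `answers` is a hypothetical input: one yes/no per check, in checklist order.
    """
    for number, passed in enumerate(answers, start=1):
        if not passed:
            return number  # stop here; later checks are never consulted
    if len(answers) < TOTAL_CHECKS:
        raise ValueError("no failure so far, but not all twelve checks are answered")
    return None


# Checks 1-3 return yes and check 4 returns no, so the pass ends at check 4.
failed = first_failed_check([True, True, True, False])
print("niche passes" if failed is None else f"niche fails at check {failed}")
```

The function reports a pass only when all twelve answers are present and all are yes, which mirrors the binary rule described above.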

Check 1: Can you name three small channels under 90 days old breaking out in this niche right now?

The foundational check. A niche that cannot produce three current small breakouts is not a niche the recommender is currently lifting new entrants in, regardless of historical reputation or topic appeal. "Small" means under a few hundred thousand subscribers — channels still inside the recommender's discovery-phase audience-finding rather than coasting on subscriber-driven views. "Under 90 days old" means published-at within the last quarter; older breakouts validate a moment that may have already passed. "Breaking out" means concrete public traction (first-five-video sum views ≥ 10,000, lifetime views per day ≥ 1,000), not just "looks promising."

Three is the floor, not the target. One small breakout is anecdote. Two is coincidence inside a noisy distribution. Three is the first sample size where the pattern is plausibly a cluster rather than chance. If you can name five or seven without effort, the cluster is dense; if you struggle to name three, the cluster is thin and the niche fails validation regardless of how the remaining checks resolve.

The discipline of naming the channels forces specificity. A researcher who claims a niche is hot but cannot name three current small channels inside it is reasoning from a label, not from evidence.

Pass: three small channels under 90 days old with verifiable current breakout traction in the niche. Fail: fewer than three, or the named channels are over 90 days old, or "breakout" cannot be backed with public-data numbers.

Check 2: Do those three channels share a single format, or three different formats?

How much the three breakouts prove depends on what they share. If all three are running the same format (same production mode, same approximate video length, same visual template), the niche has a clear format-cluster shape and a new entrant can credibly copy what is working. If the three are running three different formats, the topic label may be a real category to a human reader but is not a cluster to the recommender, which reads format-fit, not topic-fit, when classifying a new channel.

The format-vs-topic distinction is the most common point of confusion in niche research. "History" as a topic spans 8-minute documentary uploads, 45-second vertical fact-stacks, AI-narrated archival montages, and face-on-camera lecture formats. Each is a separate recommender cohort. A creator who copies the topic without copying the format is publishing into a recommender that has never seen their specific format-topic intersection from a small channel before.

If the three channels run three different formats, the corrective is not to abandon the niche — it is to narrow the niche definition to whichever format has the most evidence. "History" fails this check with three different-format breakouts; "history shorts under 60 seconds with cinematic visuals" might pass if all three use that specific format. The niche definition changes; the validation pass restarts from check one.

Pass: the three breakout channels share a recognizable single format. Fail: the three are running noticeably different formats, even if the topic label matches.

Check 3: Is at least one of the three under 30 days old?

A 30-day-old breakout is current evidence; a 75-day-old breakout is approaching the edge of the under-90-day window and may be reflecting a recommender lift that has already started to cool. The check forces at least one of the three named channels to be inside the freshest window, which is the strongest available public-data proxy for "the recommender is lifting new entrants in this niche right now," as distinct from "the recommender lifted new entrants two months ago."

The under-30-day window is also the window in which a new entrant publishing today would be evaluated by the recommender. A niche where the most recent small breakout is 75 days old is a niche where the most recent evidence of recommender lift predates the timeframe in which a fresh new channel would be receiving its own first-month evaluation. The temporal misalignment is the kind of subtle staleness that destroys niches that look fine on paper.

The check is also a saturation tripwire. Format clusters that are aging out tend to stop producing fresh sub-30-day breakouts before their parent topic stops being mentioned in listicle copy; the under-30-day requirement catches the saturation early in the public-data signal rather than late in the listicle cycle.

Pass: at least one of the three named breakout channels is under 30 days old. Fail: all three are 30 days old or older, even if all are still under 90 days.
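The age arithmetic behind checks one and three can be sketched as follows. It assumes you have copied each channel's creation date from its About tab; the function names and the sample dates are hypothetical.

```python
from datetime import date
from typing import Sequence


def channel_age_days(created: date, today: date) -> int:
    """Whole days between the channel's creation date and today."""
    return (today - created).days


def passes_freshness(created_dates: Sequence[date], today: date) -> bool:
    """At least three channels, all under 90 days old, at least one under 30."""
    ages = [channel_age_days(d, today) for d in created_dates]
    all_under_90 = len(ages) >= 3 and all(age < 90 for age in ages)
    one_under_30 = any(age < 30 for age in ages)
    return all_under_90 and one_under_30


# Made-up creation dates giving ages of 22, 58, and 75 days on 2026-05-12.
today = date(2026, 5, 12)
named = [date(2026, 4, 20), date(2026, 3, 15), date(2026, 2, 26)]
print(passes_freshness(named, today))
```

"Under 30" is treated as strictly fewer than 30 days, matching the rubric's "30 days old or older" fail condition.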

Check 4: Does each channel pass the three hard gates?

This is the quantitative spine of the checklist. The three hard gates are the same gates the live library applies on every channel it surfaces. Each named breakout from check one must pass all three for the niche to clear this step. The gates and the score bonuses that sharpen ranking inside them are summarized here:

- Channel age (hard gate): detected within 45 days of channel creation

- First-5 upload views (hard gate): combined views across the first five public uploads ≥ 10,000

- Views per day (hard gate): lifetime channel views ÷ channel age ≥ 1,000

- Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000

The three hard gates work because each captures a different piece of the early-traction picture. Channel age ≤ 45 days isolates the window where the recommender is doing the audience-finding work rather than the subscriber base. First-5 sum views ≥ 10,000 filters out channels where the first uploads landed flat — five uploads sharing 10,000 views is a working content vehicle, not a single fluke video. Lifetime views per day ≥ 1,000 is the cleanest velocity check available from public metadata, because watch time and impressions live behind the YouTube Analytics API and cannot be third-party-verified.

Compute each gate manually on each of the three breakout channels. Channel age comes from the About tab. First-5 sum is the sum of view counts on the channel's first five published videos, readable from the Videos tab sorted by oldest. Lifetime views per day is the total view count from About divided by the channel's age in days. Three channels times three gates is nine numbers to confirm; ninety seconds per channel; under five minutes total. Either each channel clears all three gates or the niche fails this check.

Pass: all three named channels clear all three hard gates (channel age ≤ 45 days, first-5 sum ≥ 10,000 views, lifetime views per day ≥ 1,000). Fail: any of the three channels fails any of the three gates.
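The nine numbers can also be checked with a few lines of Python. This is a sketch under stated assumptions, not the live library's scoring code: the `ChannelSnapshot` type and function names are hypothetical, the thresholds are the ones stated above, and the guard against a zero-day age is an added assumption.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class ChannelSnapshot:
    """Numbers read by hand from the About and Videos tabs (hypothetical type)."""
    age_days: int
    first5_views: List[int]  # view counts of the first five public uploads
    lifetime_views: int


def views_per_day(ch: ChannelSnapshot) -> float:
    # Assumption: a channel created today counts as one day old.
    return ch.lifetime_views / max(ch.age_days, 1)


def passes_hard_gates(ch: ChannelSnapshot) -> bool:
    """Age <= 45 days, first-5 sum >= 10,000, views per day >= 1,000."""
    return (
        ch.age_days <= 45
        and sum(ch.first5_views) >= 10_000
        and views_per_day(ch) >= 1_000
    )


def has_velocity_bonus(ch: ChannelSnapshot) -> bool:
    """Age <= 14 days, or first-5 sum >= 50,000, or views per day >= 5,000."""
    return (
        ch.age_days <= 14
        or sum(ch.first5_views) >= 50_000
        or views_per_day(ch) >= 5_000
    )


def niche_clears_check_4(channels: Sequence[ChannelSnapshot]) -> bool:
    """All of the (at least three) named channels must clear every hard gate."""
    return len(channels) >= 3 and all(passes_hard_gates(c) for c in channels)


# Made-up example: 30 days old, first-5 sum 10,100, 45,000 / 30 = 1,500 views/day.
example = ChannelSnapshot(30, [4000, 2500, 1500, 1200, 900], 45_000)
print(passes_hard_gates(example), has_velocity_bonus(example))
```

The bonus is kept separate from the gates because, as described above, it sharpens ranking inside the gates rather than deciding pass or fail.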

Check 5: Is the format you'd run distinguishable from a recommender's perspective?

Format clarity is what the recommender reads. Shorts-first channels, long-form-first channels, and mixed-format channels are three separate cohorts; the recommender ranks each against its own audience model. A creator who plans to publish 60-second vertical Shorts on the same channel as 12-minute horizontal explainers is publishing into a mixed-format cohort, which in our scans produces slower first-five-video traction than format-consistent cohorts in the same topic.

The check forces the creator to commit to one of three production lanes before publishing: Shorts-first (≥ 80% Shorts by upload count), long-form-first (≤ 20% Shorts by upload count), or mixed (everything in between). The first two are recommender-friendly. The third is recommender-ambiguous and saturates harder against a clearer-format competitor.

If the three named breakouts are themselves all mixed-format, inspect them more carefully — usually the apparent mix is a transition (the channel pivoted and the recent uploads are all one format), in which case the format the channel is currently running is the format that is working.

Pass: your planned format is either Shorts-first (≥ 80%) or long-form-first (≤ 20% Shorts), and the three breakouts share that lane. Fail: your planned format is mixed, or the breakouts are genuinely format-mixed throughout rather than during a transition.
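The three lanes reduce to a share-of-uploads calculation. The sketch below assumes you have already counted how many of a channel's uploads are Shorts; how an upload is classified as a Short is left to the reader, and the function names are hypothetical.

```python
from typing import Sequence


def format_lane(shorts_uploads: int, total_uploads: int) -> str:
    """Classify a channel by its share of Shorts, measured by upload count."""
    if total_uploads <= 0:
        raise ValueError("need at least one upload to classify a channel")
    # Integer comparison avoids floating-point edge cases at exactly 80% or 20%.
    if 100 * shorts_uploads >= 80 * total_uploads:
        return "shorts-first"
    if 100 * shorts_uploads <= 20 * total_uploads:
        return "long-form-first"
    return "mixed"


def passes_check_5(planned_lane: str, breakout_lanes: Sequence[str]) -> bool:
    """The planned lane must not be mixed, and every breakout must share it."""
    return planned_lane != "mixed" and all(
        lane == planned_lane for lane in breakout_lanes
    )


# Made-up counts: 18 of 20, 9 of 10, and 16 of 20 uploads are Shorts.
lanes = [format_lane(18, 20), format_lane(9, 10), format_lane(16, 20)]
print(lanes, passes_check_5("shorts-first", lanes))
```

For a channel in transition, one option is to count only the recent uploads, in line with the note above that the format currently being run is the one that is working.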

Check 6: Does the niche admit your operator constraints?

The breakout channels are doing specific things. The check asks whether you can do those specific things. If the three breakouts are running AI-narrated history shorts at four-uploads-per-week cadence with cinematic visual generation, the constraints are: AI-image-generation tooling, TTS narration, scripting at scale, four-uploads-per-week capacity. If you cannot run that production mode — because of time, tooling access, language, or a deliberate choice not to use AI-generated assets — the niche is not admitting your constraints, regardless of how strong the public-data signal looks.

Operator-niche mismatch is the most common reason validated niches still fail. A creator who cannot run the format the breakouts are running ends up publishing a different format inside the same topic, which the recommender treats as a different cohort, which means the breakout evidence does not apply to their actual channel.

Define your operator constraints honestly before running the checklist: daily production hours, tooling access (AI generation, voice talent, editing software, B-roll), language capabilities, tolerance for on-camera presence, appetite for sustained four-plus-uploads-per-week cadence over thirty consecutive uploads.

Pass: you can produce the format-topic intersection the breakouts are running, at their cadence, for thirty consecutive uploads, with the tooling you actually have access to today. Fail: any of those four conditions does not hold.

Check 7: Is the niche admitting new small channels, or only the largest channels?

A niche dominated by a small number of very large channels is not necessarily a niche the recommender is currently lifting new small entrants in. The check asks whether under-30-day channels still surface inside the niche, or whether the only channels currently moving are established creators with subscriber-driven view bases. The first is a niche that admits new entrants; the second is one that has already concentrated.

The asymmetry matters. Large established channels pull recommender shelf space toward themselves once the recommender has trained on their audience match. A new small channel competing for the same shelf space inside a concentrated niche has to clear a higher first-five-video bar than a new channel in a niche where the recommender is still actively audience-finding among small channels. The under-30-day public-data signal is the most reliable third-party-readable proxy for which of those two states the niche is in.

Pass: new channels under 30 days old are currently breaking out in the niche under the same public-data signals that flagged the three named channels. Fail: the only current activity is from channels over a year old or above several hundred thousand subscribers, with no fresh under-30-day breakouts visible.

Check 8: Can you commit to a single format-topic intersection for 30 uploads?

The operator gate. Niche validation only matters if the creator stays on the validated niche long enough to receive the recommender's verdict. The recommender needs a consistent format-topic signal across the first ten to twenty uploads to train its audience model; thirty uploads covers training plus the first audience-feedback loop. A creator who validates a niche and then drifts across formats inside the first fifteen uploads has not run the experiment the validation was setting up.

The drift problem is the practical failure mode behind most "I tried a niche and it didn't work" stories. The creator picks niche A, publishes seven uploads in format X, switches to format Y for eight uploads, then to topic B for five more, and concludes that "the niche didn't work." The recommender saw three different intersections and never trained on any one of them.

Force the commitment out loud before publishing. Pick the specific format-topic intersection the breakout channels are running, write it in a single sentence ("vertical Shorts under 60 seconds, AI-generated medieval-history fact-stacks with TTS narration, four uploads per week"), and commit to thirty uploads in that exact intersection.

Pass: you can name the format-topic intersection in a single sentence and commit to thirty consecutive uploads inside it. Fail: the intersection is not specific enough to write in one sentence, or the thirty-upload commitment is uncertain.

Check 9: Are the breakout channels using auditable production methods?

The integrity gate. Niche validation under public-data signals is only meaningful if the public-data signals reflect production methods that are auditable, reproducible, and policy-compliant. Three failure modes to screen against. First, breakouts whose traction depends on private-data tricks (subscriber-bought channels, view-buying, traffic manipulation) are not breakouts the recommender is naturally lifting. Second, breakouts using YouTube Terms of Service edge cases (mass-produced unoriginal content, undisclosed sponsorships, copyright reuse without licensing) are running tactics that may not survive the next policy update. Third, breakouts whose production methods are illegible from the outside are not reproducible inputs for a new entrant.

Operationalize the check by watching the most recent upload on each of the three breakout channels. Can you describe in a sentence what produced the video — TTS narration, AI image generation, screen-recording, voiceover-over-B-roll, face-on-camera with edited cuts? If yes for all three, the production methods are legible. The TOS-compliance side is worth a fifteen-second visual scan: AI-narrated content disclosed where required, copyright reuse limited to licensed or transformative use, sponsored content with disclosure where present.

Pass: production method on each of the three breakout channels is legible in one sentence, reproducible from publicly accessible tooling, and the channels appear to operate inside YouTube's current Terms of Service and content policies. Fail: any of those three conditions does not hold for any of the three channels.

Check 10: Does the niche's monetization layer match your monetization plan?

Niche is upstream of monetization but does not determine it. Two creators publishing the same format in the same niche routinely see revenue results that differ by several multiples, and the variance is driven by what each sells alongside the channel (ad-share, sponsorships, owned product, affiliate), not by what each uploads. The check forces the monetization question to be asked separately from the niche question.

What monetization layer do the breakout channels in the niche appear to be running, and does it match the layer you plan to run? If they are running an owned-product funnel (a course in the channel description, a software product in pinned comments) and you have no comparable product, the niche is monetizing differently than you would. If they are running ad-share with high cadence in long-form, and you plan to run Shorts-first with no owned product, the monetization matches less well than the format match suggests. The most profitable YouTube niches analysis covers in full why per-channel revenue is not third-party-readable; this check is the operator-side version of that boundary.

Pass: the monetization layer visible on the breakout channels is compatible with your plan, and the audience the niche attracts is plausibly an audience for your specific monetization layer. Fail: the breakouts are running a clearly different model than yours, or the audience is a poor fit for what you plan to sell.

Check 11: Have you confirmed the niche isn't already in your researched-and-rejected pile?

Niches that failed validation in a previous session should stay failed unless the underlying public-data picture has changed. The check exists to short-circuit the analysis loop where a creator runs the same niche through the checklist three times because each time the niche feels intuitively appealing and the previous failed-validation memory is fuzzy. The corrective is to keep a written list of niches you have validated and rejected, with the date and the specific check that failed, and consult it before running the checklist on any new candidate.

The list does not need to be elaborate. A single text file with a row per niche — date, niche label, failed check number, one-sentence note on why it failed — is enough. The discipline catches two failure modes: the cycling pattern where the same niche keeps reappearing because each session forgets the previous rejection, and the redefinition trap where a creator subtly relaxes the niche definition to make a previously failed niche pass on the second attempt.
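One possible shape for that file is a headerless CSV with the four columns named above. The sketch below is an assumption about layout, not a prescribed format; the column names, the sample rows, and the function `previous_rejections` are all hypothetical.

```python
import csv
import io
from typing import Dict, List

FIELDS = ["date", "niche", "failed_check", "note"]  # one row per rejected niche

# Stand-in for the contents of your own text file (made-up rows).
SAMPLE_LOG = (
    "2026-01-10,history,2,three breakouts ran three different formats\n"
    "2026-02-03,quiz shorts,1,only two small breakouts under 90 days\n"
)


def previous_rejections(log_text: str, niche: str) -> List[Dict[str, str]]:
    """Return earlier rejections recorded under the same niche label."""
    reader = csv.DictReader(io.StringIO(log_text), fieldnames=FIELDS)
    wanted = niche.strip().lower()
    return [row for row in reader if row["niche"].strip().lower() == wanted]


for row in previous_rejections(SAMPLE_LOG, "History"):
    print(f"rejected {row['date']} at check {row['failed_check']}: {row['note']}")
```

Matching on the exact label will not catch a subtly relaxed definition, so the one-sentence note is what lets you spot the redefinition trap when you reread the row.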

Public-data pictures do change, and a niche that failed six months ago may legitimately pass today if the underlying breakout density has shifted. The check is not about permanent rejection; it is about explicit re-validation when revisiting a previously failed niche.

Pass: the niche is not on your researched-and-rejected list, or if it is, the underlying public-data picture has clearly changed since the previous rejection. Fail: the niche is on your list under the same definition with no change in the public-data picture, or you do not currently keep a researched-and-rejected list.

Check 12: Are you ready to publish before you over-research?

The publish gate. The point of validation is to clear the niche for publishing, not to extend the research phase indefinitely. A creator who passes the first eleven checks and then defers publishing for another two weeks of "more research" has converted the validation pass from a filter into a procrastination ritual.

Public-data signals are time-sensitive. A niche that passes validation today may not still pass three weeks from now, because format-cluster saturation is a live process that compounds inside short windows. The under-30-day requirement from check three is the freshness condition; a creator who waits three weeks after passing validation to publish their first upload is already on the edge of needing to re-validate.

Over-research often presents as preparation tasks that sound productive — better thumbnails, more script polish, fancier production setup. Those tasks compound across uploads inside the niche, not before the first upload. The recommender will not train on your audience model until you publish. The first upload exists to start the training run, not to be the perfected final form.

Operationalize the check as a single calendar commitment. If you have passed checks one through eleven, name the date your first upload inside the niche will go live. If the date is more than seven days from today, the validation pass has either not actually cleared or you are over-researching.

Pass: you have a specific calendar date inside the next seven days when your first upload inside the validated niche will go live. Fail: no specific date, or the date is more than seven days from today.
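The rubric is a one-line date comparison. In this sketch the function name is hypothetical, and treating a date that has already passed as a fail is an added assumption.

```python
from datetime import date
from typing import Optional


def passes_publish_gate(today: date, first_upload: Optional[date]) -> bool:
    """Pass only if a first-upload date exists and is within the next seven days."""
    if first_upload is None:
        return False  # no specific date named
    days_out = (first_upload - today).days
    # Assumption: a date already in the past does not count as a commitment.
    return 0 <= days_out <= 7


today = date(2026, 5, 12)
print(passes_publish_gate(today, date(2026, 5, 16)))  # four days out
print(passes_publish_gate(today, date(2026, 5, 26)))  # fourteen days out
```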

What we deliberately don't check

The checklist does not check passion, lifestyle alignment, or long-term career fit. A check that resolves against the researcher's own self-report is not a check; it is a ratification of whatever the researcher already believes. Every creator who runs the checklist will report passion alignment for the niche they are testing, because the same researcher is supplying both the question and the answer.

The checklist does not check claimed niche RPM, CPM, or average earnings. Per-channel revenue lives behind the YouTube Analytics API and YouTube AdSense, both authenticated and channel-owner-only; no third party can read another channel's revenue. The full boundary statement is in the most profitable YouTube niches analysis. The checklist holds itself to public Data API v3 fields and explicit operator-side decisions.

The checklist does not check audience demographics, viewer location, traffic source, watch time, retention curves, or click-through rate. Those fields are gated to the channel owner. A third-party "audience-demographic match" check would be inference, not evaluation. Check five captures what the recommender actually does — format-cluster fit against current breakouts — and that is the readable proxy.

The checklist does not check competitor count or "saturation" as a topic-level abstraction. Saturation lives at the format level. A topic widely described as crowded can still produce fresh sub-format breakouts; a topic that looks empty can be concentrated around a format the recommender is no longer lifting. The format-cluster checks (two, three, five, seven) capture saturation in a public-data-readable form.

FAQ

How long should YouTube niche validation take?

Twenty to thirty minutes per candidate niche. The checklist is twelve binary questions, each resolving against public Data API v3 fields or a simple observation from the YouTube site itself (channel about page, videos tab, individual video pages). One pass per niche, not iterative deepening. If a niche fails any single check, the next move is either a pivot on the format-topic intersection or a different niche entirely. Spending several hours on a single niche before publishing is the failure mode the checklist exists to prevent.

What if I fail one check?

The niche fails validation. The checklist is binary by design — all twelve must resolve to yes, or the niche is not publishable yet. Failing a single check is not a partial-credit situation; it is a signal that one specific condition is not currently met. Some failures point to a fixable input (you have not yet found three small breakout channels — keep looking, or reframe the niche more narrowly). Others point to a structural problem (the breakouts are all over 90 days old — the niche is not currently lifting new entrants). The check that failed tells you what to research next.

Can a niche pass validation but still fail?

Yes. Passing the checklist confirms the niche is currently producing small-channel breakouts under public-data signals and that your operator constraints match what those channels are doing. It does not guarantee your specific channel will replicate their results. Execution variables the checklist cannot read — script quality, thumbnail craft, publish-cadence discipline — still determine outcomes. Treat validation as a precondition for publishing, not as a prediction.

How often should I re-validate?

Re-validate before each major content commitment, not on a calendar. The relevant trigger is a decision to publish thirty or more uploads inside the niche. Re-validation mid-run is mostly distraction; the operator gate (check 8) is the point where the niche becomes a commitment. If you are pivoting between formats or considering a second channel, run the checklist fresh against the new candidate. Public-data signals shift week over week, so a niche that validated three months ago is not automatically valid today.

Do I need data tools for this?

No. Every check resolves against fields a researcher can read directly on YouTube without any third-party tool. Channel creation date (and therefore channel age) and total view count are on the channel About tab. Upload count is on the channel Videos tab. Individual video view counts and durations are on each video page. Format clarity is observable by scrolling the channel's recent uploads. Tools like NicheBreakout speed up the process at scale, when screening dozens of candidate niches rather than one, but the methodology is the same either way.

How does this differ from the niche-research workflow?

Different document type for the same underlying methodology. The YouTube niche research workflow is sequential: start at step one, finish at step N, the order matters. This checklist is binary: twelve yes/no questions, the verdict does not depend on the order in which they are answered, and the result is a single pass/fail verdict. The workflow is the right reference when you are learning the process from scratch or running a niche-search session that goes from candidate generation to verdict. This checklist is the right reference when you already have a specific candidate niche and want to test it in twenty minutes. Both reference the same public-data signals and the same flagging thresholds; the difference is structural.

What's the most common failed check?

Check 1 (three small channels under 90 days old breaking out in the niche right now). Most candidate niches feel plausible until a researcher tries to name three current small breakouts, at which point either the only examples are channels over a year old (the niche may have worked once but is no longer lifting new entrants) or the examples have wildly different formats (the cluster is not a real cluster, just a topic label). Check 4 (the three hard gates) is the next most common failure — channels that look like breakouts often have first-5 sum views below 10,000 or lifetime views per day under 1,000 once you compute the numbers.

Should I use the checklist on niches I'm already running?

Only if you are considering pivoting away or adding a second format. Running the checklist against your own niche mid-commitment is mostly second-guessing; the checklist is designed for pre-commitment validation. The relevant ongoing question for an active channel is execution quality (script, thumbnail, cadence, sponsorship fit), not niche fit, which has already been answered by the act of publishing inside it.

Methodology / About this analysis

The checklist's twelve checks resolve against YouTube Data API v3 public fields: channel age, video count, view count, video metadata, video publish dates, video duration, and recent video performance. No YouTube Analytics API access (channel-owner-only), no AdSense data, no scraping of authenticated dashboards, and no AI-generated narratives. The three hard gates and two score bonuses on check four are the same ones the live library applies on every channel it surfaces; the full methodology is on the methodology page.
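For readers who prefer the API to the About tab, the sketch below shows how channel age and views per day might be derived from one item of a Data API v3 `channels.list` response requested with the `snippet` and `statistics` parts. It makes no network call; the sample item is made up, the helper name `summarize_channel` is hypothetical, and the field names follow the public channel resource as we understand it.

```python
from datetime import date
from typing import Any, Dict


def summarize_channel(item: Dict[str, Any], today: date) -> Dict[str, float]:
    """Derive age and velocity from one channel resource (already fetched)."""
    # publishedAt is an ISO 8601 timestamp; the first ten characters are the date.
    created = date.fromisoformat(item["snippet"]["publishedAt"][:10])
    stats = item["statistics"]
    age_days = (today - created).days
    total_views = int(stats["viewCount"])  # counts arrive as strings
    return {
        "age_days": age_days,
        "total_views": total_views,
        "video_count": int(stats["videoCount"]),
        # Assumption: a channel created today counts as one day old.
        "views_per_day": total_views / max(age_days, 1),
    }


# Made-up sample in the shape of a channel resource.
sample_item = {
    "snippet": {"publishedAt": "2026-04-12T09:00:00Z"},
    "statistics": {"viewCount": "60000", "videoCount": "18"},
}
print(summarize_channel(sample_item, date(2026, 5, 12)))
```

The first-five-video sum needs per-video view counts, which this sketch does not cover.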

Original-research artifacts: the twelve-check structure, the format-vs-topic distinction, the operator-niche match framing, the publish gate at check twelve, and the explicit "what we deliberately don't check" boundary section. The framing reflects what we have observed across 2,082 scanned channels. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.


Related research

- YouTube niche finder: parent pillar covering the broader niche-research category and the proof-based framing this checklist operationalizes.

- How to do YouTube niche research: sibling cluster page, the sequential workflow version of the same methodology — start there if you are learning the process from scratch, return here when you have a specific candidate niche to test.

- Faceless YouTube niches: sister pillar covering the faceless production angle for creators picking which format to run.

- YouTube Shorts trends: sister pillar covering the Shorts-first publishing angle and format-cluster trends.

- YouTube channel research: sister pillar covering the broader channel-discovery category, useful for sourcing the three breakout channels check one requires.

- YouTube outlier finder: sister pillar covering the breakout-discovery framing applied at the channel layer.

- Most profitable YouTube niches: sister cluster covering why per-channel revenue is not third-party-readable and what public-data signal does answer the niche-fitness question.

- How to find small YouTube channels: cross-pillar guide for sourcing the small under-90-day breakouts check one needs.

- AI story channels, Reddit story channels, history shorts channels, faceless storytelling channels, quiz channels: programmatic topic pages that pre-cluster current breakouts by format, useful as a starting point for the named-channels work in checks one through four.

The Friday digest sends three current breakout channels every week with format fingerprints and outbound YouTube links — three named channels per week, free, present-tense, suitable as raw inputs for the first four checks. The live library refreshes daily and surfaces channels currently inside the 30-day window across every niche cluster we scan. See pricing for the current tier; subscribe to the digest free.

End of checklist

Run the checklist against a current breakout cohort

Every channel card outbound-links to YouTube so you can audit the public metadata yourself. The live under-30-day library is the paid workflow surface — the same channels the checklist resolves against. The Friday digest is free.