
YouTube outlier finder: find outlier channels before outlier videos

A YouTube outlier is a video or channel whose performance sits far above the median of a comparable group — a video against its channel's own baseline, or a channel against its peer cohort. The dominant outlier-finder content on the SERP markets video-level detection (a video outperforming its channel's median, the framing vidIQ's Outliers tool uses). The harder and more useful problem is channel-level outlier detection: which small channels are outpacing their peer cohort right now, before any single video goes viral. NicheBreakout's research base is 2,082 channels scanned to date — public YouTube Data API v3 metadata only, no inferred private metrics.

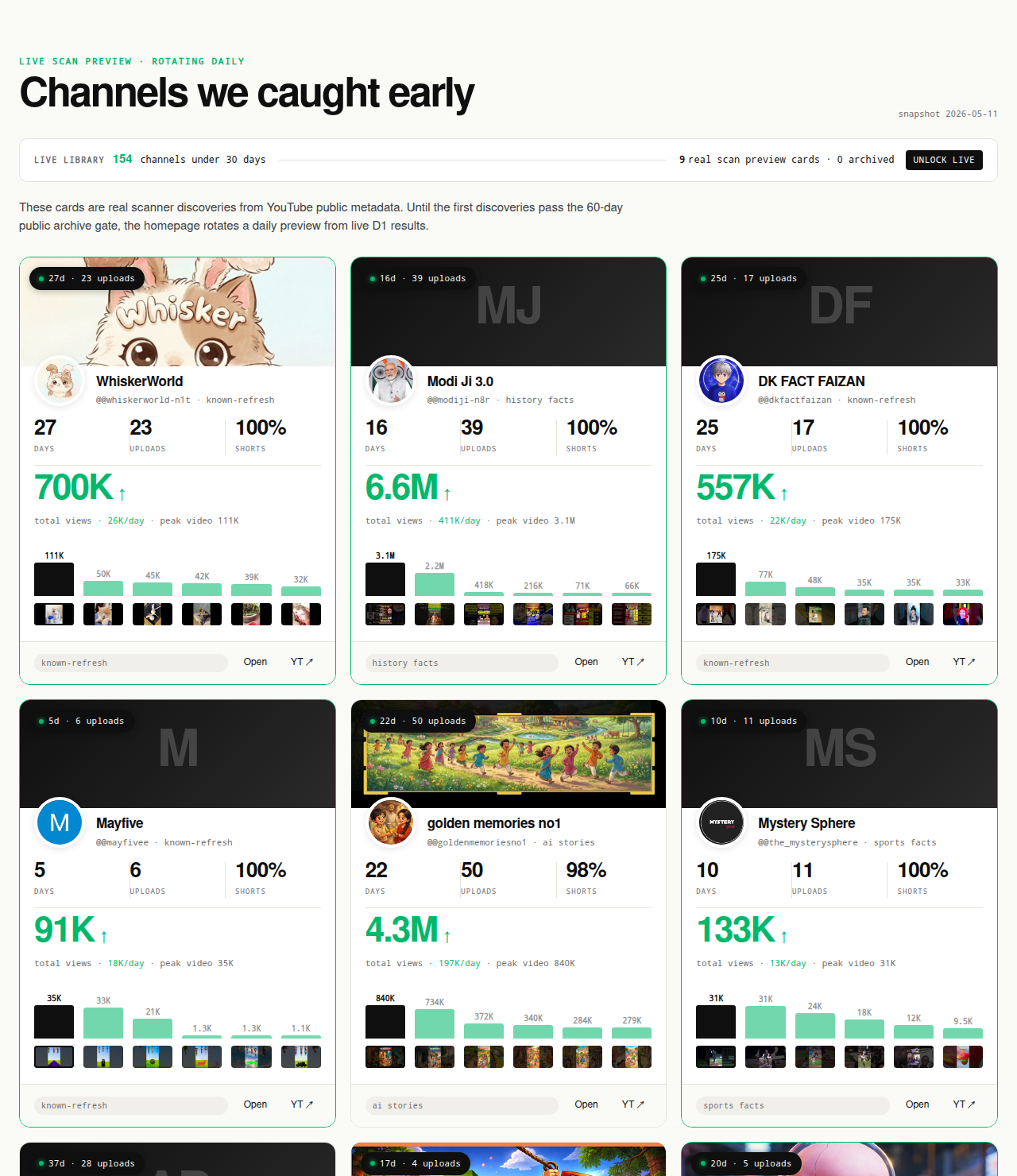

The Friday digest reveals three current channel-level outliers every week for free. The live 30-day window — dozens of channels under 30 days old right now — is the paid workflow surface; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages out of the live window.


What "YouTube outlier" actually means

"Outlier" is a statistical word, not a marketing one. In a distribution of values, an outlier is an observation that sits far enough from the median or mean that it is unlikely to have come from the same generating process as the rest. On YouTube the distribution depends on what you measure: video views inside a channel, channel views per day inside a peer cohort, first-five-video sums across newly created channels, Shorts ratio within a format cluster. Each distribution produces its own outliers, and conflating them is why most "outlier finder" content is unactionable.

The two distributions that matter for niche research are clean. Video outliers are videos whose view count sits far above the channel's own recent median — the framing built into vidIQ's Outliers tool, which scores each video against the channel's baseline and surfaces the multiples that broke out. Channel outliers are channels whose aggregate metrics (views per day, first-5 sum, age-adjusted view count) sit far above the median of their peer cohort — channels of similar age and format. Both are real outlier objects; they answer different questions.

Video outliers answer: which of this channel's recent uploads outperformed the channel's own baseline, and what can the channel's operator learn from them? That is a creator-tool question, useful once you already know the channel. Channel outliers answer: which channels are outperforming the peer-cohort median right now, regardless of which specific video carries the weight? That is a niche-research question, useful when you are deciding which channels to study at all. The argument across the next several sections is that the niche-research question sits upstream of the creator-tool question — you cannot run a video-outlier scan on a channel you have not yet identified — and that channel-level outlier detection is the upstream step that supplies video-level detection with channels worth scanning.

Treating "outlier" as a strict statistical concept also disciplines the methodology. A video that beats its channel's median by 3x is an outlier; one that beats it by 1.4x is not, even if 1.4x feels meaningful in a slow week. A channel that beats its peer cohort by 5x on views per day is an outlier; one that beats it by 1.2x is inside the noise band. NicheBreakout's flagging thresholds, published further down this page, are the cohort-comparison version of that strictness: hard gates first, score-based ranking second.
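A minimal sketch of that strict reading, assuming the baseline is the plain median of the comparison group and using the page's 3x video example as the cut-off. The function names and sample numbers are illustrative only, not NicheBreakout's code.

```python
from statistics import median


def outlier_multiple(value: float, group: list[float]) -> float:
    """How many times the comparison group's median `value` is."""
    baseline = median(group)
    if baseline <= 0:
        raise ValueError("median of the comparison group must be positive")
    return value / baseline


def is_outlier(value: float, group: list[float], threshold: float = 3.0) -> bool:
    """Strict reading: flag only when the multiple clears the threshold."""
    return outlier_multiple(value, group) >= threshold


# Hypothetical channel whose recent uploads sit around 10,000 views.
recent_views = [9_000, 10_000, 11_000, 10_500, 9_500]
print(is_outlier(30_000, recent_views))  # 3.0x the median: True
print(is_outlier(14_000, recent_views))  # 1.4x the median: False
```

The same two functions cover the channel case: pass a channel's views per day as `value` and its peer cohort's views per day as `group`.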

Why channel outliers matter more than video outliers for niche research

A single outlier video inside a 5M-subscriber channel tells you almost nothing about whether the niche is open for new entrants. The mature channel has two years of recommender-trained audience momentum, a subscriber base that will watch most uploads regardless of topic, and brand-driven click-through on thumbnails. When one of its videos outperforms the channel's own median by 4x, the cause is more likely to be a thumbnail experiment, a topical news event, or a guest collaboration than a niche-level signal a new creator could replicate. The video-outlier flag, in that case, is internally informative for the channel's operator and externally noisy for the niche researcher.

A 14-day-old channel outpacing its peer cohort by 5x on views per day tells you the opposite. The channel does not have a subscriber base lifting it; the recommender is doing the work, which means the format-topic intersection the channel is running is currently being matched to an audience. That signal is replicable for a new creator because the recommender-driven lift is mostly about format and topic, not about the operator's existing audience. Channel-level outlier detection isolates exactly that signal — abnormal traction without an audience moat to lean on.

vidIQ's Outliers tool is well-designed for the question it answers. It assumes the user already knows the channel and wants to learn from that channel's own breakout uploads. The downstream workflow is clean: pick a channel you respect, scan its recent videos against its own baseline, and study the videos that broke out. Where the workflow falls apart for niche research is in step zero — knowing which channels to scan. There is no "all of YouTube" view inside a video-outlier tool; the user has to bring the channel list. NicheBreakout's role is to build that channel list using channel-level outlier detection, after which video-level detection becomes the natural drill-down step inside each candidate.

The clean way to read the relationship is two-stage. Stage one is channel-level outlier discovery — which small channels are outpacing their peer cohort right now. Stage two is video-level outlier inspection — for each candidate channel, which of its recent uploads broke from the channel's own median, and what was different about them. Stage one is upstream and answerable from public Data API metadata at scale. Stage two is per-channel and works whether the user runs it through vidIQ's Outliers, NicheBreakout's channel deep-dive view, or manual inspection on YouTube directly.

How to define a channel outlier from public data alone

Channel outlier detection works from four observable inputs: channel age (computable from the channel's creation date), aggregate view count, video count, and per-video view counts. From those inputs a researcher can derive lifetime views per day, first-five-video sum views, upload cadence, and a Shorts ratio. The deeper inference layer — assigning channels to peer cohorts so the cohort median is meaningful — uses video metadata (title patterns, tags, duration distribution) to bucket channels by format. None of these signals require authenticated YouTube Analytics access, and none claim to read watch time, click-through rate, or revenue.
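The derivation step can be sketched as below. This is a hypothetical illustration, not NicheBreakout's pipeline: the class and field names are invented, the one-day age floor is an assumption, and "upload cadence" is read here as uploads per week. The Data API has no "is a Short" flag, so the sketch assumes a duration cutoff as a proxy; because YouTube has allowed Shorts longer than 60 seconds since late 2024, the cutoff is a parameter rather than a fixed rule.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Video:
    published_at: datetime
    views: int
    duration_seconds: int


@dataclass
class Channel:
    created_at: datetime  # channel creation date from public metadata
    total_views: int      # lifetime channel view count
    videos: list[Video]   # public uploads with per-video views and durations


def derive_metrics(ch: Channel, now: datetime, shorts_max_seconds: int = 60) -> dict:
    """Derive age-adjusted metrics from public fields only."""
    # Floor age at one day so a brand-new channel does not divide by zero.
    age_days = max((now - ch.created_at).total_seconds() / 86_400, 1.0)
    uploads = sorted(ch.videos, key=lambda v: v.published_at)
    # Assumption: a duration cutoff stands in for "is a Short".
    shorts = sum(1 for v in uploads if v.duration_seconds <= shorts_max_seconds)
    return {
        "age_days": age_days,
        "views_per_day": ch.total_views / age_days,
        "first5_sum": sum(v.views for v in uploads[:5]),
        "uploads_per_week": len(uploads) / (age_days / 7),
        "shorts_ratio": shorts / len(uploads) if uploads else 0.0,
    }


# Hypothetical 20-day-old channel with six uploads, four of them short-form.
now = datetime(2026, 5, 12, tzinfo=timezone.utc)
created = now - timedelta(days=20)
durations = [45, 50, 58, 600, 40, 720]
views = [3_000, 2_500, 4_000, 1_500, 2_000, 6_000]
ch = Channel(
    created_at=created,
    total_views=40_000,
    videos=[
        Video(created + timedelta(days=i + 1), v, d)
        for i, (v, d) in enumerate(zip(views, durations))
    ],
)
print(derive_metrics(ch, now))
```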

The constraint is structural. YouTube's public Data API v3 exposes the channel's creation date (from which age is computed), subscriber count rounded to three significant figures, total view count, video count, and per-video metadata; what it does not expose is anything inside the YouTube Analytics API, which Google reserves for channel owners and authenticated content partners (YouTube Data API: channels.list). The boundary is the same one NicheBreakout's other pillars carry: a third party can compute aggregate public metrics, run them through a deterministic flagging methodology, and surface the channels whose aggregate profile is outlying. A third party cannot read why each viewer stayed.
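To make that boundary concrete, here is a sketch of reading those public fields from a `channels.list` response. No network call is made: the helper names and the sample response are hypothetical, while the fields used (`snippet.publishedAt`, `statistics.viewCount`, `statistics.subscriberCount`, `statistics.hiddenSubscriberCount`, `statistics.videoCount`) are the ones the channel resource documents. Nothing in the response resembles watch time or retention.

```python
from datetime import datetime
from urllib.parse import urlencode

CHANNELS_ENDPOINT = "https://www.googleapis.com/youtube/v3/channels"


def channels_list_url(channel_id: str, api_key: str) -> str:
    """Build a channels.list request for the public snippet and statistics parts."""
    query = urlencode({"part": "snippet,statistics", "id": channel_id, "key": api_key})
    return f"{CHANNELS_ENDPOINT}?{query}"


def parse_channel(response: dict) -> dict:
    """Pull the public fields an outlier methodology needs from a channels.list response."""
    item = response["items"][0]
    stats = item["statistics"]
    # Counts arrive as JSON strings; publishedAt is an ISO 8601 UTC timestamp.
    created = datetime.fromisoformat(item["snippet"]["publishedAt"].replace("Z", "+00:00"))
    hidden = stats.get("hiddenSubscriberCount", False)
    return {
        "created_at": created,
        "total_views": int(stats["viewCount"]),
        "video_count": int(stats["videoCount"]),
        # Public subscriber counts are rounded to three significant figures;
        # a hidden count is reported here as None rather than as a number.
        "subscribers": None if hidden else int(stats.get("subscriberCount", 0)),
    }


# Hypothetical response shaped like the documented channel resource.
sample = {
    "items": [{
        "id": "UC_hypothetical",
        "snippet": {"publishedAt": "2026-04-22T09:30:00Z"},
        "statistics": {
            "viewCount": "40000",
            "subscriberCount": "1230",
            "hiddenSubscriberCount": False,
            "videoCount": "6",
        },
    }]
}
print(channels_list_url("UC_hypothetical", "YOUR_API_KEY"))
print(parse_channel(sample))
```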

Peer-cohort comparison is the part of channel-level outlier detection that creates the actual outlier signal. A channel publishing TTS-narration history shorts at 50,000 views per day is not informative on its own; it becomes informative when the median channel in its peer cohort of similar-format, similar-age channels is publishing at 5,000 views per day. The channel is then 10x the cohort median — an outlier by any reasonable threshold. The cohort definition matters: cohorts that are too broad (all YouTube channels under 45 days old) average across format-fit differences that dominate the signal, while cohorts that are too narrow (the same exact subtopic) shrink to two or three channels and lose statistical power. NicheBreakout's cohort layer groups channels by format cluster — Shorts-first vs long-form-first, faceless production mode vs face-on-camera, primary niche tag — which keeps the comparison apples-to-apples.
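The cohort step can be sketched as: key each channel by its format cluster, take the median views per day inside each cluster, and divide. The records below are hypothetical, the median here includes the channel being scored, and `min_cohort_size` is an assumed cut-off standing in for the "too narrow" problem, not a published NicheBreakout number.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (channel, (format, production_mode, niche_tag), views_per_day)
CHANNELS = [
    ("hist_a", ("shorts", "faceless", "history"), 50_000),
    ("hist_b", ("shorts", "faceless", "history"), 5_000),
    ("hist_c", ("shorts", "faceless", "history"), 4_000),
    ("hist_d", ("shorts", "faceless", "history"), 5_000),
    ("hist_e", ("shorts", "faceless", "history"), 6_000),
    ("doc_a", ("longform", "faceless", "history"), 100_000),
    ("doc_b", ("longform", "faceless", "history"), 90_000),
]


def cohort_multiples(channels, min_cohort_size=3):
    """Views-per-day multiple of each channel over its own format-cluster median.

    Channels in clusters smaller than `min_cohort_size` (a hypothetical
    cut-off) get None, because a one- or two-channel cohort has little
    statistical power.
    """
    by_cluster = defaultdict(list)
    for _, cluster, vpd in channels:
        by_cluster[cluster].append(vpd)
    medians = {c: median(v) for c, v in by_cluster.items() if len(v) >= min_cohort_size}
    return {
        name: (vpd / medians[cluster] if cluster in medians else None)
        for name, cluster, vpd in channels
    }


multiples = cohort_multiples(CHANNELS)
print(multiples["hist_a"])  # 10.0
print(multiples["doc_a"])   # None: a cohort of two
```

Keying on the full cluster tuple is what keeps the long-form documentary channels from dragging the Shorts cohort's median upward.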

The same boundary statement applies as on the other pillars: no claim about audience retention, no inferred watch time, no per-channel RPM. The point of channel-level outlier detection is to flag candidates worth studying further; what the researcher does with the flagged channels is downstream of the detection itself.

The deterministic filter that flags outlier channels

NicheBreakout's flagging methodology is the channel-level outlier detector in practice. A channel enters the live library when it passes three hard public-metadata gates, then ranks inside the library on a deterministic score that adds two bonuses. Read the gates as the binary outlier criteria (in or out of the candidate set) and the score as the ordering of the outliers within the set. The full methodology is published on the methodology page; the version below is the abbreviated readout framed for outlier discovery.

- Channel age: detected within 45 days of channel creation.

- First-5 upload views: combined views across the first five public uploads ≥ 10,000.

- Views per day: lifetime channel views ÷ channel age in days ≥ 1,000.

- Format clarity (bonus): the score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels.

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000.

The three hard gates each isolate a different piece of the outlier signal. Channel age ≤ 45 days restricts the candidate pool to channels whose traction is recommender-driven rather than audience-driven; outlier detection on mature channels is dominated by subscriber-base effects that are not replicable for new entrants. First-5 sum views ≥ 10,000 separates channels with a working content vehicle from channels with one lucky video — five uploads sharing 10,000 views is the smallest sample size that survives single-video flukes while staying readable inside a working channel's first month. Views per day ≥ 1,000 is the cleanest velocity-based outlier check available from public metadata; it represents the channel's age-adjusted view count and is the variable that most directly maps to the cohort-median comparison.
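The three hard gates translate directly into a pass/fail check. In the sketch below, the thresholds are the ones published on this page, while the function name, the one-day age floor, and the choice to report which gates failed are illustrative assumptions. Channels with fewer than five public uploads are not handled specially here.

```python
# Thresholds as published on this page; the function itself is an illustrative sketch.
MAX_AGE_DAYS = 45
MIN_FIRST5_SUM = 10_000
MIN_VIEWS_PER_DAY = 1_000


def failed_gates(age_days: float, first5_sum: int, lifetime_views: int) -> list[str]:
    """Return the hard gates a channel fails; an empty list means it qualifies."""
    failures = []
    if age_days > MAX_AGE_DAYS:
        failures.append("channel_age")
    if first5_sum < MIN_FIRST5_SUM:
        failures.append("first5_sum")
    # Floor age at one day so the views-per-day division is always defined.
    if lifetime_views / max(age_days, 1.0) < MIN_VIEWS_PER_DAY:
        failures.append("views_per_day")
    return failures


print(failed_gates(age_days=20, first5_sum=12_000, lifetime_views=40_000))  # []
print(failed_gates(age_days=60, first5_sum=8_000, lifetime_views=30_000))
# ['channel_age', 'first5_sum', 'views_per_day']
```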

The two score bonuses sharpen the outlier ranking inside the qualified set. Format-clarity bonus weights channels with an unambiguous Shorts-first or long-form-first format above format-mixed channels because the cohort comparison is more meaningful for format-consistent channels — a mixed-format channel is being evaluated against two different cohort medians and shows up weakly in both. Early-traction velocity bonus rewards channels at the extreme end of the outlier distribution: age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000 each indicate the channel is outpacing its peer cohort by an unusually large multiple. These are the channels worth surfacing first because the outlier strength is unambiguous.
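The exact score formula lives on the methodology page and is not reproduced here, so the following is only a hypothetical sketch of how two bonuses could sit on top of a base score. The base term, the 0.8 format-clarity cutoff, and the bonus weights are all invented for illustration; only the velocity triggers (age ≤ 14 days, first-5 sum ≥ 50,000, views/day ≥ 5,000) come from this page.

```python
def outlier_score(views_per_day: float, first5_sum: int, age_days: float,
                  shorts_ratio: float, clarity_cutoff: float = 0.8,
                  clarity_bonus: float = 1.0, velocity_bonus: float = 1.0) -> float:
    """Hypothetical ranking score for channels that already passed the hard gates."""
    # Hypothetical base term: velocity in multiples of the 1,000 views/day gate.
    score = views_per_day / 1_000
    # Format-clarity bonus: clearly Shorts-first or clearly long-form-first, not mixed.
    if shorts_ratio >= clarity_cutoff or shorts_ratio <= 1 - clarity_cutoff:
        score += clarity_bonus
    # Early-traction velocity bonus: any one of the three triggers on this page.
    if age_days <= 14 or first5_sum >= 50_000 or views_per_day >= 5_000:
        score += velocity_bonus
    return score


candidates = {
    "shorts_first": dict(views_per_day=2_000, first5_sum=20_000, age_days=30, shorts_ratio=0.9),
    "mixed": dict(views_per_day=2_000, first5_sum=20_000, age_days=30, shorts_ratio=0.5),
    "very_new_mixed": dict(views_per_day=2_000, first5_sum=20_000, age_days=10, shorts_ratio=0.5),
}
ranking = sorted(candidates, key=lambda k: outlier_score(**candidates[k]), reverse=True)
print(ranking)
```

The design point the sketch preserves is ordering, not magnitude: gates decide membership, and bonuses only reorder channels that are already in.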


The exact score formula, grade thresholds, and edge cases — channels gaming the format-clarity signal by deleting non-conforming uploads, channels whose first-5 sum is dominated by a single viral video, channels with hidden subscriber counts — live on the methodology page. The companion guide, How to find small YouTube channels, walks through how to apply these signals manually for researchers building a private outlier workflow.

Video outlier detection: when it's the right tool

Video-level outlier detection — the framing built into vidIQ's Outliers tool — works by computing a multiple of each recent video's view count against the channel's own recent median, then ranking videos by that multiple. A 3x multiplier flags a video that meaningfully outperformed the channel's baseline. A 10x multiplier flags a clear standout. The tool is well-built for the question it answers: given a known channel, which uploads broke out, and what can the channel's operator (or a researcher studying that channel specifically) learn from them.
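A generic version of that ranking can be sketched in a few lines. This is not vidIQ's implementation, whose baseline definition is not described here; the sketch assumes the baseline is the median over the same recent window (including each video itself) and reuses the 3x and 10x labels from the paragraph above. Video IDs and counts are hypothetical.

```python
from statistics import median


def rank_video_outliers(views_by_video: dict[str, int]) -> list[tuple[str, float, str]]:
    """Rank recent uploads by their multiple over the channel's median.

    The median is taken over the same recent window, including each video
    itself; a real tool may define the baseline differently.
    """
    baseline = median(list(views_by_video.values()))
    ranked = []
    for video_id, views in views_by_video.items():
        multiple = views / baseline
        label = "standout" if multiple >= 10 else "outlier" if multiple >= 3 else ""
        ranked.append((video_id, multiple, label))
    return sorted(ranked, key=lambda row: row[1], reverse=True)


recent = {"v1": 5_000, "v2": 4_000, "v3": 6_000, "v4": 200_000, "v5": 18_000}
for video_id, multiple, label in rank_video_outliers(recent):
    print(f"{video_id}: {multiple:.1f}x {label}")
```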

The right context for video-level outlier detection is downstream of channel-level discovery. Once a researcher has identified a candidate channel — through NicheBreakout's live library, the Friday digest, manual cohort comparison, or any other channel-discovery workflow — scanning that channel's recent uploads for video outliers is the natural drill-down step. The video-outlier scan answers: of this channel's last 20 uploads, which beat the channel's own median by the largest multiple, and what differentiated them? Format experiments, thumbnail experiments, length variations, and topic experiments all show up in the video-outlier ranking once the channel is fixed.

The relationship also runs in the other direction. A creator running their own channel uses video-level outlier detection to learn from their own breakout uploads — which thumbnail style, which title pattern, which hook approach hit hardest — and channel-level outlier detection becomes irrelevant because the channel is already chosen. This is the use case vidIQ's Outliers is most often deployed for, and the use case it is best at. The competitive product question — does NicheBreakout replace vidIQ's Outliers — has a clean answer: no, the two tools live at different layers of the same problem.

The mistake is using video-level outlier detection as the entire workflow when the goal is niche research. A researcher who scans the top 100 channels in a vertical for video outliers will surface lots of internally-interesting breakout videos but not many niche-research signals, because every video outlier inside a mature channel is being lifted by the channel's existing audience momentum. Channel-level outlier discovery upstream of video-level inspection is the workflow that produces actionable niche-research output. The reverse order produces a lot of data about videos and not much about niches.

Outlier ≠ viral ≠ breakout

Three words get used interchangeably in YouTube-research content and they mean different things. Viral describes absolute view-count magnitude — a video that reached an audience far larger than the creator's normal reach, usually outside the channel's subscriber base. The label is absolute, not relative, so a 5M-view video is viral regardless of whether the channel that published it normally clears 50,000 or 5M. Outlier describes relative magnitude against a baseline — a 200,000-view video on a channel that normally clears 5,000 is a video outlier even though 200,000 is not absolutely large, and a 10M-subscriber channel's 4M-view upload may not be a video outlier even though 4M is absolutely large. Breakout describes a channel-level trajectory — the entire channel is outpacing its peer cohort, not just one upload.
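The absolute-vs-relative split can be shown with the paragraph's own two examples. The page gives no numeric cut-off for "viral", so the 1,000,000-view threshold below is hypothetical, as is the 3,000,000-view median assumed for the 10M-subscriber channel. Breakout is deliberately absent: it needs a peer-cohort comparison, not a single video.

```python
VIRAL_MIN_VIEWS = 1_000_000   # hypothetical absolute cut-off for "viral"
OUTLIER_MIN_MULTIPLE = 3.0    # relative cut-off, matching the 3x example on this page


def video_labels(views: int, channel_median: float) -> list[str]:
    """Label one video as viral (absolute) and/or outlier (relative to its channel)."""
    labels = []
    if views >= VIRAL_MIN_VIEWS:
        labels.append("viral")
    if views / channel_median >= OUTLIER_MIN_MULTIPLE:
        labels.append("outlier")
    return labels


print(video_labels(200_000, 5_000))        # ['outlier']: 40x baseline, small in absolute terms
print(video_labels(4_000_000, 3_000_000))  # ['viral']: large, but only about 1.3x baseline
```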

The differences are not pedantic; they map onto different research conclusions. A viral video on a mature channel tells you the channel ran one effective campaign. A video outlier on the same channel tells you something about the channel's experimentation — which formats, thumbnails, or hooks beat the channel's own baseline. A channel breakout tells you the format-topic intersection the channel is running is currently working at the recommender level, independent of the channel's existing audience. NicheBreakout's product is a breakout-channel discovery tool; the outlier framing in this pillar is the analytical lens that explains how channel-level breakout detection works statistically.

The three concepts also have different shelf lives. Viral videos are usually single events and don't repeat at the same scale; the next upload from a channel that hit a viral 5M-view video typically lands closer to the channel's baseline. Video outliers do repeat at the channel-experiment level — a channel that produces 3x-baseline videos consistently is running a working iteration loop. Channel breakouts have the longest shelf life when they map to a working format the recommender keeps lifting; that is the cohort NicheBreakout's matured public archive will preserve once the first wave of detected channels ages past the 60-day post-detection mark.

The framing matters for what kind of research question to ask the data. "Show me viral YouTube videos" returns a noisy mix of single events. "Show me video outliers inside this channel" returns the channel's experimentation log. "Show me breakout channels inside this niche" returns the niche-research candidates that justify deeper drill-downs. The fact that the SERP for "youtube outlier finder" mostly mixes these three together is the reason this section exists.

The outlier formats with active channel-level breakouts right now

Channel-level outliers in our 2026 scans cluster into a handful of format-topic intersections that keep producing breakouts inside the 45-day window. Read the list as observation, not as a ranking — there is no "best outlier niche," only formats whose peer-cohort comparison is currently producing outliers at the small-channel layer. Across the channels currently inside our live 30-day window — a subset of the broader 2,082-channel scan — the densest niche clusters meeting our sample-size threshold are:

The Shorts-first vs long-form split inside those top clusters looks like this in our dataset:

| Niche | Shorts-first % | Long-form-first % | Mixed % | Sample (channels) |
|---|---|---|---|---|
| Celebrity Trending News & Viral Moments | 100% | 0% | 0% | 10 |

Format clustering is the right unit of analysis for outlier detection because cohort medians are most meaningful inside a format cluster. A TTS-narration history-shorts channel compared against other TTS-narration history-shorts channels produces a clean outlier multiple; the same channel compared against all history channels (including long-form documentary channels) produces a noisy comparison dominated by format-fit variance. Format-cluster cohorts are how NicheBreakout's flagging stays apples-to-apples.

Separately from the live cluster snapshot above, we maintain dedicated programmatic topic pages for five recurring format clusters that produce channel-level outliers repeatedly in our scans:

- AI story channels: TTS narration plus AI imagery, recurring story templates, Shorts-first publishing.

- Reddit story channels: TTS reading r/AmITheAsshole, r/ProRevenge, r/MaliciousCompliance threads with stock visuals.

- History shorts channels: fact-stacking with cinematic visuals, vertical and horizontal variants.

- Faceless storytelling channels: broader narrative format spanning fiction and non-fiction.

- Quiz channels: interactive Q&A format, often Shorts-first with text overlays.

If the goal is to read outlier-density inside a specific format cluster rather than across the live cohort, those five programmatic pages are the entry points. The parent YouTube niche finder pillar covers the cross-cluster methodology, and the faceless YouTube niches sister pillar covers the production-mode angle most outlier-producing clusters fall under.

What we deliberately don't claim about outliers

NicheBreakout does not claim access to per-video swipe-away rate, per-channel watch time, per-niche RPM, audience retention curves, traffic-source breakdowns, or "outlier impressions." Those signals live behind authenticated endpoints — the YouTube Analytics API for owners and content partners, AdSense for revenue, internal recommender state for impressions and traffic sources. The official YouTube Data API v3 reference is the canonical list of what is exposed; anything outside that list is not third-party-readable. Any "outlier finder" advertising private metrics is either inferring them from non-API sources or fabricating them.

What is readable for any candidate outlier channel from public Data API fields: channel age, subscriber count (rounded to three significant figures), total view count, video count, video metadata, video publish dates, individual video view counts, and video duration. From those fields the channel-level outlier methodology computes views per day, first-5 sum views, Shorts ratio, upload cadence, and the cohort-comparison multiple. Every claim on this pillar is defensible from one of those fields, and every channel surfaced outbound-links to YouTube so the public metadata is verifiable on the source.

What is not readable: which of the channel's videos the recommender is currently lifting on which surface, what swipe-away rate the channel's recent Shorts had, what RPM the channel pays out, how many subscribers the channel actually has below the three-significant-figure rounding, which traffic sources are driving the channel's views, or how long viewers stay inside any given upload. None of those metrics ship in the live library, the Friday digest, or the future matured public archive, and none would survive the outbound-link verification rule that governs every channel card on the page.

The boundary statement matters specifically for the outlier framing because outlier-detection content elsewhere on the SERP routinely promises private metrics. "Find competitor watch time outliers" and "uncover hidden RPM outliers" are common headlines; both are selling things that do not exist as third-party-accessible products. The corrective is to define outlier inside the metrics that ARE public, run the methodology against those, and accept the resulting constraint as the product itself rather than a limitation.

Common mistakes when reading outlier signals

Six mistakes recur when researchers try to use outlier signals for niche decisions.

Chasing viral one-offs instead of sustained channel outliers. A single viral video on a mature channel feels like a strong signal, but it is most often a one-event spike that does not repeat. The channel-level outlier — multiple uploads from a small channel beating the cohort median — is the durable signal. The corrective is to weight channel-level signals above single-video signals in any niche-research workflow.

Treating a 5M-subscriber channel's outlier video as relevant niche evidence. The video-outlier flag on a mature channel is internally informative for the channel's operator and externally noisy for the niche researcher. The cause of the outlier is more likely to be a thumbnail experiment, a topical news event, or a guest collaboration than a niche-level signal a new creator could replicate. The corrective is to filter outlier research to channels where the subscriber base is not large enough to dominate the lift — under 90 days old is a clean cutoff, under 45 days even cleaner.

Ignoring channel age. The outlier label means something very different on a 14-day-old channel than on a 14-month-old channel. New creators routinely study mature channels' current strategies and copy them, missing that the mature channel's current strategy is downstream of years of recommender-trained audience momentum. The corrective is to study outlier channels at the same age as the channel you are building. The YouTube niche validation checklist operationalizes the age-cohort discipline into a workflow.

Copying the topic instead of the format. A small-channel outlier breaking out on a specific topic invites the obvious mistake — publish on the same topic. The replicable variable is the format the outlier channel is running (production mode, video length, publish cadence, visual style), not the topic. Topics saturate in weeks; formats generalize across topics for months. The corrective is to read off the format from the outlier channel, then run a different topic inside that format. The faceless YouTube niches sister pillar covers the format-vs-topic split in production-mode detail.

Confusing one-week viral spikes with multi-week channel trajectories. A channel that posted one upload reaching 1M views in week one and nothing else of note is not a channel outlier; it is a video outlier on a channel that has not yet demonstrated sustained breakout. The methodology's first-5-sum gate is the first corrective: five uploads sharing 10,000 views is a working content vehicle, while one viral upload on its own is not. Because a single viral upload can clear the 10,000 sum by itself, first-5 sums dominated by one video are treated as an edge case on the methodology page.
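How NicheBreakout handles single-video-dominated first-5 sums is not spelled out on this page. One hypothetical way to spot the pattern is the share of the first-5 sum carried by the best-performing upload; the 0.8 cut-off and both example channels below are assumptions for illustration.

```python
def top_video_share(first_five_views: list[int]) -> float:
    """Fraction of the first-5 sum carried by the single best-performing upload."""
    total = sum(first_five_views)
    return max(first_five_views) / total if total else 0.0


def single_video_dominated(first_five_views: list[int], max_share: float = 0.8) -> bool:
    # max_share is a hypothetical cut-off, not a published NicheBreakout threshold.
    return top_video_share(first_five_views) >= max_share


lucky = [1_000_000, 200, 150, 300, 350]          # clears the 10,000 sum on one upload
vehicle = [3_000, 2_500, 2_000, 1_500, 2_000]    # 11,000 spread across five uploads
print(single_video_dominated(lucky), single_video_dominated(vehicle))  # True False
```

Both channels pass the 10,000-view sum gate, which is exactly why a concentration check like this one is useful alongside it.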

Skipping the cohort comparison. Channel-level metrics in isolation are not outlier signals; they become outlier signals only relative to a peer cohort. A 50,000-views-per-day channel is impressive if the cohort median is 5,000 and unremarkable if the cohort median is 100,000. Researchers who study channel metrics without a cohort baseline end up calling every above-median channel an outlier, which is just the upper half of the distribution. The corrective is to bucket by format cluster (Shorts-first vs long-form, faceless vs face-on-camera, primary niche tag) before computing the outlier multiple, which is exactly what NicheBreakout's flagging methodology does internally.

FAQ

What's a YouTube outlier?

A YouTube outlier is a video or a channel whose performance sits far above the median of a comparable group. Two flavors matter for research:

- Video outliers — a single video that significantly outperforms the channel's own recent baseline (the framing vidIQ's Outliers tool uses).

- Channel outliers — a whole channel outperforming its peer cohort given its age, size, and format.

How does vidIQ Outliers compare to NicheBreakout?

vidIQ's Outliers tool ranks a channel's recent videos against that channel's own median to flag the videos most outperforming the baseline (vidIQ Outliers). It assumes you already know which channels to analyze. NicheBreakout solves the upstream problem: which channels are worth analyzing in the first place. The two are complementary, not substitutes — channel-level outlier discovery builds the candidate list, and a video-level outlier tool like vidIQ's helps drill into any one candidate.

What's the difference between an outlier and a viral video?

Viral describes a single video that hit a large absolute view count, usually outside its channel's normal audience. Outlier describes performance relative to a baseline — a 200,000-view video on a channel that normally clears 5,000 is a video outlier even though 200,000 is small in absolute terms. Viral is an absolute term; outlier is a relative term. A viral video on a 10M-subscriber channel may not even register as an outlier for that channel.

Can I find outlier channels for free?

Yes. The free tier today is the Friday digest — three current breakout channels every week, each outbound-linking to YouTube so you can verify the public metrics in one click. NicheBreakout's live 30-day library is the paid surface; the matured public archive opens as a second free surface in summer 2026 as the first cohort ages past the 60-day post-detection mark. Other free outlier-flavored research routes include YouTube's own search filtered to recent uploads plus manual peer comparison, but the workflow is slow without a tool.

How accurate is outlier detection from public data?

Channel-level outlier detection from public Data API v3 fields is auditable but not exhaustive — it sees what the API exposes (channel age, view count, video count, video publish dates, per-video views, video duration) and nothing private. Watch time, retention, click-through rate, swipe-away rate, and traffic source are not third-party-readable, so any outlier methodology claiming those signals is either using authenticated owner data or inferring. NicheBreakout's flagging stays inside the public fields; every channel card outbound-links to YouTube so the numbers are verifiable on the source.

Do outlier channels stay outliers?

Most don't, and that's part of the signal. A 14-day-old channel outpacing its peer cohort is informative because it shows the recommender is currently lifting the channel's format. Six months later the same channel has either stabilized into a category leader, drifted back toward the cohort median, or been overtaken by newer outliers in the same format cluster. The outlier label is a present-tense observation, not a permanent rank. Channels that stay outliers across multiple measurement windows usually do so by maintaining format consistency, not by repeating the trick that flagged them initially.

Can outliers be faked?

View counts can be inflated by paid traffic and bot traffic, but the public Data API fields used in channel-level outlier detection are harder to fake in aggregate than a single video's view count. Channel age, upload cadence, first-5 video performance, lifetime views per day, and Shorts ratio have to move together to fake a breakout channel — paid traffic on a single video doesn't move the cohort comparison meaningfully. The deeper anti-fake check is the outbound-link rule: every channel surfaced links to YouTube directly so a researcher can read the metrics on the source and spot anomalies that show up on the channel page itself, such as sudden subscriber drops or unusual comment-section behavior.

Methodology / About this analysis

NicheBreakout's research relies entirely on YouTube Data API v3 public fields: channel age, subscriber count, video count, view count, video metadata, video publish dates, video duration, and recent video performance. The channel-level outlier observations on this page are derived from the same scan that powers the main live library — no separate dataset, no authenticated Analytics access, no inferred private metrics, no AI-generated narratives describing why specific channels broke out. Cohort medians are computed inside format clusters (Shorts-first vs long-form, faceless production mode vs face-on-camera, primary niche tag) so the outlier multiple stays apples-to-apples.

Original-research artifacts in this article: the two-flavor outlier taxonomy (video outliers vs channel outliers) in the opening section, the upstream-vs-downstream argument relative to vidIQ's Outliers tool, the outlier-vs-viral-vs-breakout distinction, the deterministic flagging methodology, the live niche-cluster snapshot, and the revealed channel cards above the fold. The outlier-producing format clusters discussed reflect what we've scanned, not all of YouTube. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.


Related research

- YouTube niche finder: the parent pillar covering niche research across breakout-channel discovery and the broader niche-finder category.

- Faceless YouTube niches: sister pillar covering the production-mode angle that dominates most outlier-producing clusters.

- YouTube Shorts trends: sister pillar covering the Shorts-first publishing angle and the surface-split between Shorts feed and Browse.

- YouTube channel research: sister pillar covering the broader channel-discovery category that channel-level outlier detection sits inside.

- Most profitable YouTube niches: companion listicle backed by examples from the live cohort.

- How to do YouTube niche research: the full process guide downstream of the niche-finder pillar.

- YouTube niche validation checklist: the deterministic checklist version of the methodology.

- AI story channels: programmatic topic page tracking the AI-storytelling outlier cluster.

- Reddit story channels: programmatic topic page tracking the Reddit-narration outlier cluster.

- History shorts channels: programmatic topic page tracking the history-shorts outlier cluster.

- Faceless storytelling channels: programmatic topic page tracking the broader storytelling cluster.

- Quiz channels: programmatic topic page tracking the quiz/trivia cluster.

The Friday digest sends three current channel-level outliers every week with format fingerprints and outbound YouTube links — free, present-tense. The live library refreshes daily and surfaces channels currently inside the 30-day window. See pricing for the current tier; subscribe to the digest free.


Find the channel-level outliers worth studying today

Every channel card outbound-links to YouTube so you can audit the public metadata yourself. The live under-30-day library is the paid workflow; the Friday digest is free.