
YouTube channels before they blow up: the sub-30-day window where the public-data signature shows first

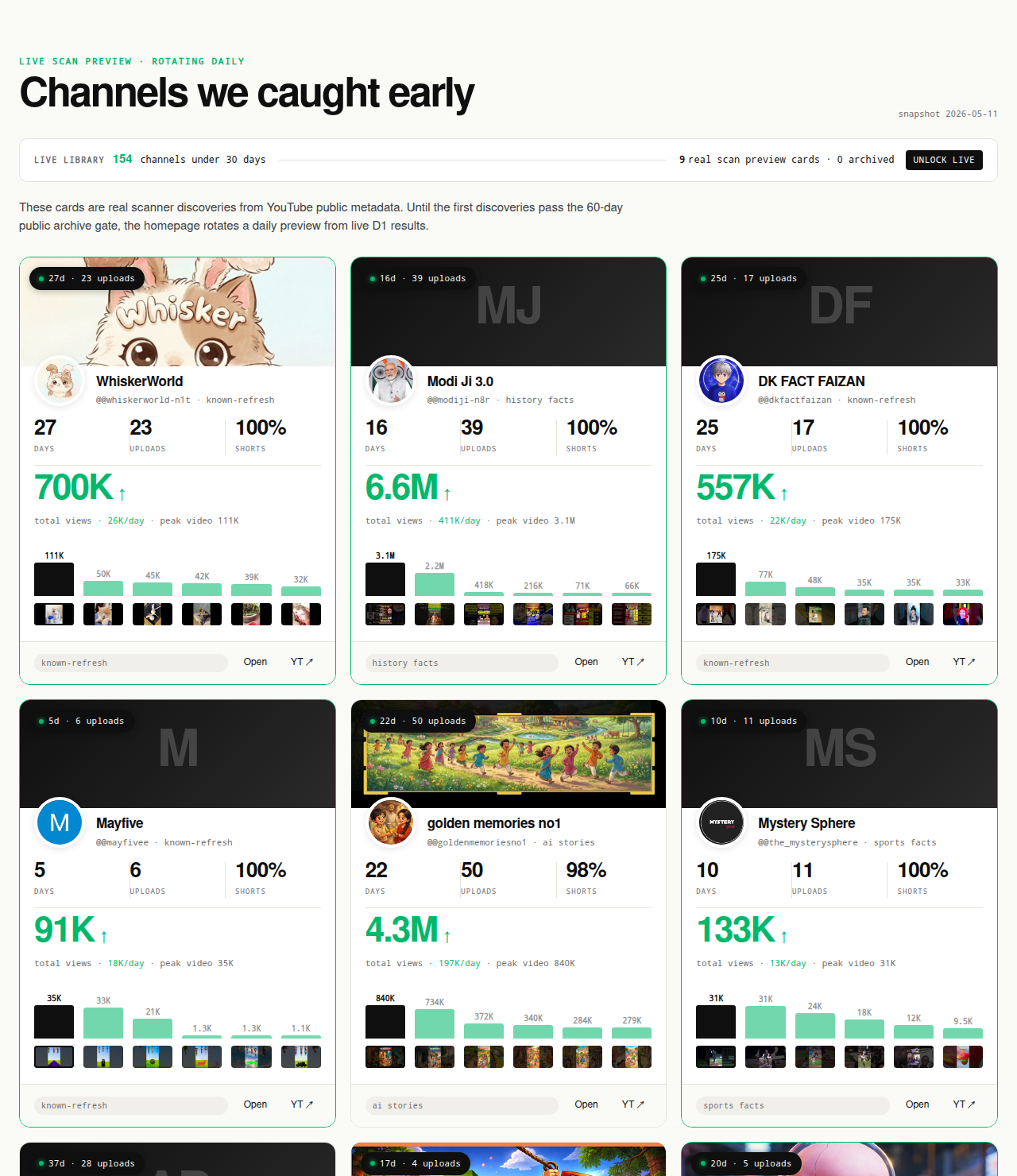

"YouTube channels before they blow up" is a speculative phrase, and the honest version of the page is that prediction is impossible — public YouTube Data API v3 metadata cannot tell you which specific sub-30-day channel will reach a million subscribers, because the variables that decide that question live outside what the API exposes. What public data can support is the observation that a specific sub-30-day channel currently shows the public-data signature consistent with channels that have gone on to break out. That observation is what NicheBreakout's live cohort under 30 days old surfaces by construction — the earliest-window slice where the recommender is lifting the channel but the broader audience has not yet priced it in. Research base: 2,082 channels scanned to date — public metadata only, no inferred private metrics, no AI-generated narratives, no prediction guarantees.

The Friday digest reveals three current sub-30-day YouTube channels every week for free, each outbound-linking to YouTube so the public metadata is verifiable in one click. The full live 30-day window — dozens of channels under 30 days old right now — is the paid workflow surface because the under-30-day window is the actionable read; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages past the 60-day post-detection mark.

Open the live library →

What "before they blow up" actually means in public-data terms

"Before they blow up" gestures at a feeling — finding something early, ahead of the crowd — rather than at a measurable object. The version this page operates under is concrete and narrower: the channel's public-data signature is showing the early-traction pattern, but the channel has not yet accumulated the audience momentum that makes it obvious to the broader market. The first half says there is already a readable signal in public metadata; the second half says the channel has not yet been priced in by the audience layer that comes after the recommender — search results, external links, manual subscription, cross-channel discovery. The window between those two halves is the window this page is about.

The window is measurable. NicheBreakout's working definition is the under-30-day slice of the breakout cohort — channels currently less than 30 days from creation that have cleared the three hard gates (first-5 sum ≥ 10,000, views per day ≥ 1,000, channel age ≤ 45 days). Under 30 days is where the cohort comparison is cleanest because subscriber accumulation is still small enough that the recommender is doing nearly all of the lift; past 30 days the subscriber base starts contributing to early-window watch time, which then contributes to recommender lift in a self-reinforcing loop harder to disentangle from format-fit.

"Before they blow up" is descriptive of the window, not predictive of the outcome. A channel inside its under-30-day window may continue scaling, regress to the cohort median, or stop publishing entirely. All three outcomes happen in the data. What the page can claim is that the channel's public-data signature right now is consistent with the signature channels have shown when they have gone on to break out at scale. That is an observation about the present, not a forecast about the future, and the distinction is what keeps the page defensible.

The framing disciplines the evidence the page owes. A "before they blow up" claim comes with channel age in days, upload count, first-5 sum, lifetime views per day, the cohort-comparison multiple, and the under-30-day window verifier. Every channel card outbound-links to YouTube so a reader can verify the signature on the source. The card does not narrate why the channel will or will not keep scaling — the public-data signature plus the methodology that selected it is the explanation.

Why prediction is impossible but observation is useful

The blunt version: nobody can predict, from public YouTube Data API v3 metadata, which specific sub-30-day channel will reach a million subscribers. The variables that decide that question live mostly outside what the API exposes — the operator's posting cadence over the next sixty days, the title and thumbnail experiments, the recommender's evolving format weighting, audience-saturation dynamics inside the topic, copyright and policy events. Any tool advertising "predict which channels will blow up" is either inferring those variables from signals that do not support the inference or making the prediction up.

The useful frame is observation rather than prediction. Observation does not require a forecast about any specific channel; it requires a defensible read on what the public-data signature currently is. A 14-day-old channel publishing into a TTS-narration history-shorts cluster, with 14,600 views per day, a first-5 sum of 22,000 distributed across five uploads, and a 1.0 Shorts ratio, has a public-data signature consistent with channels that have gone on to break out at scale in the same cluster. The observation is verifiable on YouTube directly. Whether the specific channel will keep scaling is downstream of inputs the observation cannot read. The two claims are at different epistemic levels, and conflating them is the failure mode predictive "outlier finder" tools fall into.

The economic value of the observation comes from the cohort, not from any individual channel. A researcher who reads off the format from a pre-blow-up channel — production mode, length, cadence, visual template, hook style — and applies it to a different topic inside the same format cluster is using the channel as evidence about what the recommender is currently lifting at the small-channel layer. The format read generalizes across topics; the individual channel either keeps scaling or does not, but the cluster-level read remains informative even when individual channels regress. That is why the paid workflow surface is built around the live cohort under 30 days old rather than around any specific channel — the value is the population.

The observation is also time-limited. A pre-blow-up reading taken today is a different read than one taken three months ago, because format-fit is unstable inside YouTube's recommender system. Clusters that were lifting in February may have saturated by May; new clusters surface continuously. Real-time scanning is the only sourcing model that preserves the observation's freshness. The parent YouTube outlier finder pillar covers the statistical case for channel-level cohort comparison in more depth.

The deterministic filter for a pre-blow-up channel

NicheBreakout's flagging methodology is the deterministic filter the page uses to assemble the pre-blow-up cohort. A channel enters the live library when it passes three hard public-metadata gates, then ranks inside the library on a score that weights two bonuses. The under-30-day slice — the version this page surfaces — applies the same gates with a tighter age cutoff. The full methodology is on the methodology page; the abbreviated readout follows.

- Channel age: detected within 45 days of channel creation; the under-30-day slice is the cleanest pre-inflection window.

- First-5 upload views: combined views across the first five public uploads ≥ 10,000.

- Views per day: lifetime channel views ÷ channel age ≥ 1,000.

- Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels.

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000.

The three hard gates each isolate a different piece of the pre-blow-up signature. The channel-age gate (≤ 45 days, tightened to the sub-30-day slice for this surface) restricts the candidate pool to channels whose traction is recommender-driven rather than audience-driven. The first-5 sum gate (≥ 10,000 views) separates channels with a working content vehicle from channels with one lucky video: five uploads sharing 10,000 views is the smallest sample size that survives single-video flukes inside a working channel's first month. The views-per-day gate (≥ 1,000) is the cleanest velocity-based check available from public metadata; it represents the channel's age-adjusted view count and maps directly to the cohort-median comparison.

The two score bonuses sharpen the ranking. Format-clarity bonus weights channels with an unambiguous Shorts-first or long-form-first format because the cohort comparison is more meaningful for format-consistent channels — a mixed-format channel is being evaluated against two different medians and shows up weakly in both. Early-traction velocity bonus rewards channels at the extreme end: age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000 each indicate the channel is outpacing its peer cohort by an unusually large multiple before any single video has carried it.
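The gates and the velocity bonus can be sketched in a few lines of Python using only the thresholds stated above. This is a minimal illustration, not NicheBreakout's implementation: the `ChannelSnapshot` class, its field names, and the function names are hypothetical, and the score formula itself (which lives on the methodology page) is not reproduced. Whether the bonus is evaluated only for channels that already pass the gates is also an assumption left open here.

```python
from dataclasses import dataclass


@dataclass
class ChannelSnapshot:
    """Hypothetical container for three public fields; names are illustrative."""
    age_days: int        # days since channel creation
    first5_views: int    # combined views on the first five public uploads
    lifetime_views: int  # aggregate channel view count


def views_per_day(ch: ChannelSnapshot) -> float:
    # Lifetime views divided by age; guard against a zero-day-old channel.
    return ch.lifetime_views / max(ch.age_days, 1)


def passes_hard_gates(ch: ChannelSnapshot) -> bool:
    # All three gates must hold at once.
    return (
        ch.age_days <= 45
        and ch.first5_views >= 10_000
        and views_per_day(ch) >= 1_000
    )


def in_under_30_slice(ch: ChannelSnapshot) -> bool:
    # Same gates, tighter age cutoff.
    return passes_hard_gates(ch) and ch.age_days < 30


def earns_velocity_bonus(ch: ChannelSnapshot) -> bool:
    # Any one of the three conditions triggers the boost.
    return (
        ch.age_days <= 14
        or ch.first5_views >= 50_000
        or views_per_day(ch) >= 5_000
    )


example = ChannelSnapshot(age_days=14, first5_views=22_000, lifetime_views=204_400)
print(passes_hard_gates(example), in_under_30_slice(example), earns_velocity_bonus(example))
```

The example values mirror the 14-day-old channel described earlier on this page: 14,600 views per day over 14 days is 204,400 lifetime views.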

Average first-five-video views for every populated grade tier inside our discoveries cohort looks like this (grades with no current members are suppressed until they fill in):

The score formula, grade thresholds, and edge cases — channels gaming the format-clarity signal by deleting non-conforming uploads, channels whose first-5 sum is dominated by a single viral upload, channels with hidden subscriber counts — live on the methodology page. The how to find small YouTube channels companion guide walks through how to apply these signals manually.

The public-data signature of a pre-blow-up channel

Read the signature in a specific order, because each field constrains the meaning of the next. The order is: channel age, first-5 sum, view velocity, format clarity, upload cadence consistency. Researchers who start with subscriber count or with the most recent viral upload usually misread the signature, especially inside the under-30-day window where the signal lives in the distribution rather than in any single upload.

Channel age, weighted toward the under-30-day slice. Inside the under-30-day window, subscriber accumulation has barely started — most channels still have subscriber counts low enough that the public Data API's three-significant-figure rounding makes the number nearly uninformative. The recommender is doing essentially all of the lift, which is what makes the cohort comparison clean at this stage. Past 30 days the subscriber base starts contributing to early-window watch time, and the read becomes noisier.

First-5 sum, distribution-weighted. Sum view counts on the first five public uploads. A pre-blow-up signature requires the sum to clear 10,000, and the distribution matters as much as the total. Five uploads sharing 50,000 evenly is a different signal than one upload at 48,000 and four at 500 each — the first is a working format the recommender is consistently lifting, the second is a lucky video on an otherwise unremarkable channel.

Views per day, against the cluster median. Divide aggregate channel view count by channel age in days. A pre-blow-up signature requires the channel to clear 1,000 views per day. The number to compare against is the cluster median (same age, same format), not an absolute threshold — a 1,500-views-per-day channel is informative against a 200-views-per-day cluster median and unremarkable against a 5,000-views-per-day one.

Format clarity, holding across the early uploads. Compute the Shorts ratio (videos under 60 seconds divided by total video count; this is a conservative cutoff, since YouTube now allows Shorts of up to three minutes) or read the duration distribution directly. A pre-blow-up channel is usually format-consistent: 80%+ Shorts or 80%+ long-form, not a mix. The recommender treats the Shorts feed and the Browse/Suggested surfaces as separately ranked surfaces inside YouTube's own product (YouTube Official Blog: Shorts and long-form on YouTube), and a format-consistent channel teaches the recommender a cleaner audience profile inside its first 30 days.
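As a rough sketch of the format-clarity read, the function below classifies a channel from a list of upload durations. The function name, the labels, and the default parameters are assumptions for illustration; the 60-second cutoff and the 80% threshold are the ones named in the paragraph above. Integer arithmetic is used so that a channel sitting exactly on the 80% line is not misclassified by floating-point rounding.

```python
def classify_format(durations_seconds, shorts_cutoff=60, threshold_pct=80):
    """Label a channel as shorts-first, long-form-first, or mixed.

    durations_seconds: one duration (in seconds) per public upload.
    """
    total = len(durations_seconds)
    if total == 0:
        return "unknown"
    shorts = sum(1 for d in durations_seconds if d < shorts_cutoff)
    long_form = total - shorts
    # Compare counts as integers to avoid float edge cases at the threshold.
    if shorts * 100 >= threshold_pct * total:
        return "shorts-first"
    if long_form * 100 >= threshold_pct * total:
        return "long-form-first"
    return "mixed"


print(classify_format([45, 48, 50, 42, 47, 49, 44]))
print(classify_format([30, 40, 600, 700]))
```

Durations would typically come from each video's public metadata; here they are passed in as plain numbers to keep the sketch self-contained.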

Upload cadence consistency. Count uploads against age and check the spacing. A 21-day-old channel with 14 uploads spaced roughly two days apart is teaching the recommender a cadence. The same channel with ten uploads in the first three days and four in the last eighteen is teaching something different (launch burst followed by silence) and produces a less reliable pre-blow-up signature. Extremely high cadence (more than two uploads per day) sometimes triggers YouTube's mass-production heuristics and is worth treating as a flag.
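One way to make the cadence check concrete is to look at the gaps between publish dates. The sketch below is illustrative only: the function names and the returned keys are hypothetical, the spread of the gaps is one possible proxy for "consistency", and the more-than-two-uploads-per-day flag is the heuristic mentioned above, not a documented YouTube rule.

```python
from datetime import date
from statistics import mean, pstdev


def upload_gaps(publish_dates):
    """Days between consecutive uploads, oldest first."""
    ordered = sorted(publish_dates)
    return [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]


def cadence_report(publish_dates, age_days):
    gaps = upload_gaps(publish_dates)
    uploads_per_day = len(publish_dates) / max(age_days, 1)
    return {
        "uploads_per_day": uploads_per_day,
        "mean_gap_days": mean(gaps) if gaps else None,
        # A spread of 0 means perfectly even spacing; larger means burstier.
        "gap_spread_days": pstdev(gaps) if gaps else None,
        "high_cadence_flag": uploads_per_day > 2,
    }


# Ten uploads, one every two days.
steady = [date(2026, 1, 1 + 2 * i) for i in range(10)]
print(cadence_report(steady, age_days=21))
```

A launch burst followed by silence shows up as a larger gap spread on the same upload count, which is the pattern the paragraph above treats as a less reliable signature.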

A worked-shape example, kept generic: a channel created 13 days ago, 7 Shorts uploads under 50 seconds, AI-storytelling cluster. Aggregate views 195,000. View velocity 15,000 per day. First-5 sum 38,000, evenly distributed. Shorts ratio 1.0. Cadence every two days. This signature clears all three gates with room to spare, earns both bonuses, and places the channel several multiples above its same-age cluster median. Every number is verifiable from the channel's own YouTube page in under two minutes — descriptive of the current state, not predictive of where the channel will be in nine months.

Why the under-30-day window matters more than any other

The under-30-day window closes fast, and the read it offers does not survive into later windows. A 14-day-old channel inside its pre-blow-up window is a fundamentally different research object than the same channel at 60 days old, at 180 days old, or at 12 months old. The differences are not about size; they are about which inputs are driving the lift, and which inputs a new entrant can replicate.

Inside the under-30-day window, the lift is coming almost entirely from format-fit rather than from accumulated audience. The channel does not yet have a subscriber base large enough to drive early-window watch time on every upload. The recommender is reading the format (production mode, length, cadence, visual template, hook style) and matching it to an audience without any audience-momentum shortcut. A new entrant studying the channel at this stage is studying a system whose performance depends entirely on the variables they can replicate.

By day 45 the read has shifted. The channel now has a small but meaningful subscriber base — a few thousand subscribers who get notified on most uploads and drive early-window watch time the recommender reads as positive engagement signal. The lift is partly downstream of the audience the channel has accumulated, and the format read is mixed with audience-momentum read in a way that gets harder to disentangle the longer the channel runs. By day 90 the subscriber base is a meaningful contributor on every upload; new uploads benefit from audience momentum that has nothing to do with the format being currently warm at the small-channel layer. By month 9 the channel may be at 400,000 subscribers, and the lift on each upload is driven by a mix of recommender format-matching, accumulated audience, search traffic, external referrals, and cross-channel discovery — several layers downstream of inputs that do not generalize.

The implication: the under-30-day window is the slice during which the channel is most informative as research evidence about which formats the recommender is currently lifting at the small-channel layer. Every later window contaminates the read with audience-momentum data. The reason the paid workflow surface is built around the live cohort under 30 days old is structural — that is the slice where the public-data signature most cleanly answers the question a new entrant actually has.

How NicheBreakout's live cohort surfaces these channels

NicheBreakout's live library is, by construction, a list of channels currently inside their under-30-day pre-blow-up window plus the under-45-day continuation slice. The scan runs daily, hits the public Data API v3 endpoints, applies the three hard gates, computes the score with the two bonuses, and surfaces channels that have crossed the thresholds inside the current scan cycle. The under-30-day slice — the version this page is built around — is the freshest layer.

The product split is deliberate. The Friday digest is the free weekly-cadence surface: three current sub-30-day channels every Friday with format fingerprints and outbound YouTube links, no account required. The full live cohort under 30 days old is the paid workflow surface because under-30-day reads are the actionable layer for a researcher trying to read the format before it saturates. The matured public archive, opening in summer 2026, is the second free surface; it will preserve the detection-time public fields of channels that were flagged during their pre-blow-up window so a reader can see what the under-30-day signature looked like at the moment of detection rather than only the channel's current state.

Every surface shares the same evidence standard. Every channel card outbound-links to YouTube so the public metadata is verifiable on the source in one click. No card includes an AI-generated narrative beyond the public-data observation. No surface claims access to private metrics — watch time, retention curves, click-through rate, swipe-away rate, traffic-source breakdowns, and revenue all live behind the YouTube Analytics API and are not third-party-readable. The boundary is on the methodology page; the most profitable YouTube niches sister page covers the public-vs-private boundary on revenue specifically.

The paid-library framing is the honest economic version of the page. A free annual "channels before they blow up" listicle cannot deliver pre-blow-up channels at the layer they are most useful — by the time the article ranks, the channels inside it have already exited the under-30-day window. Real-time scanning is the only sourcing model that delivers the cohort at the layer the phrase actually refers to, and it has a cost (API quota, ingestion infrastructure, daily compute). Charging for the live cohort aligns the surface with where the value is concentrated; keeping the digest free aligns the introductory surface with researchers who want to see what the product produces before paying.

What we deliberately don't claim

NicheBreakout does not predict which specific sub-30-day channel will reach a million subscribers, will pass any specific subscriber threshold, or will go viral. The product is observational, not predictive. Predictive claims require inputs the public YouTube Data API v3 does not expose — operator posting cadence over the next sixty days, title and thumbnail experiments, the recommender's evolving format weighting, audience-saturation dynamics, copyright and policy events. The official YouTube Data API v3 reference (YouTube Data API v3 reference) is the canonical list of what is publicly exposed; nothing on that list supports a predictive claim about a specific future trajectory.

NicheBreakout does not publish a causal explanation of why any specific pre-blow-up channel is currently in its window. Watch time, audience retention curves, click-through rate, swipe-away rate, and traffic-source breakdowns all live behind the YouTube Analytics API, which Google reserves for channel owners and authenticated content partners (YouTube Data API: channels.list). Any "pre-blow-up channel finder" content claiming to explain the recommender's reasoning is either reading authenticated owner data or making the explanation up.

NicheBreakout also does not project subscriber-growth trajectories, does not rank channels by predicted future subscriber count, does not run "next big creator" forecasts, and does not publish per-channel income, RPM, CPM, or sponsorship-rate predictions. Revenue data lives behind the YouTube Analytics API and YouTube AdSense, both authenticated channel-owner-only. The most profitable YouTube niches sister page covers the structural reason. The discipline this boundary creates is the product itself: pre-blow-up detection inside the public-data layer is defensible because every claim on every channel card can be checked against YouTube directly, while predictive claims that would require non-public inputs are out of scope.

Common mistakes when shopping for pre-blow-up channels

Five mistakes recur when researchers try to find channels before they blow up using open-web sources. Each routes the reader toward a different object than the phrase actually describes.

Chasing subscriber count as the early signal. Subscriber count is rounded to three significant figures in the public Data API and is a lagging indicator. A 14-day-old channel with 4,000 subscribers and 14,600 views per day is a stronger pre-blow-up signature than a 14-day-old channel with 8,000 subscribers and 400 views per day, despite the higher subscriber number on the second channel. By the time the subscriber count has visibly jumped, the underlying view-velocity inflection happened earlier. Weight view velocity and first-5 sum above subscriber count.

Copying the topic instead of the format. Topics saturate in weeks; the recommender has already started rotating the topic out by the time a new entrant publishes their version. The format (production mode, length, cadence, visual template, hook style) generalizes across topics for months and is the variable a new entrant can actually replicate. A new entrant running a sibling topic inside the same format usually outperforms an entrant running the exact same topic. The faceless YouTube niches sister pillar covers the format-vs-topic distinction in production-mode detail.

Ignoring the channel-age signal. The pre-blow-up label means something fundamentally different on a 14-day-old channel than on a 90-day-old one. The 90-day-old channel has already accumulated some subscriber base; the public-data signature is mixed with audience-momentum read. The 14-day-old channel has the recommender doing essentially all of the lift. The YouTube niche validation checklist operationalizes the age-cohort discipline into a workflow new creators can apply directly.

Treating one viral upload on a new channel as the signal. A 14-day-old channel with one upload at 800,000 views and four uploads at 600 each is a one-viral-video channel inside its sub-30-day window — the signature is concentrated rather than distributed. The pre-blow-up label belongs to channels whose first-5 sum is distributed evenly enough that the recommender is reading the format rather than one specific video. The concentration ratio diagnostic — divide the highest-view upload by total channel views — separates the cases. Under 30% concentration is a distributed pre-blow-up signature; over 60% is one viral upload on an otherwise quiet channel.
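The concentration ratio is simple enough to sketch directly. In the snippet below, the function names and the labels are hypothetical, and total channel views is approximated as the sum of the per-upload view counts, which is an assumption. The page gives thresholds only for the two ends (under 30% and over 60%), so the middle band is labeled "ambiguous" here as a placeholder.

```python
def concentration_ratio(upload_views):
    """Share of total views carried by the single highest-view upload."""
    total = sum(upload_views)
    return max(upload_views) / total if total else 0.0


def concentration_label(ratio):
    if ratio < 0.30:
        return "distributed"
    if ratio > 0.60:
        return "one-viral-upload"
    return "ambiguous"  # the source states no rule for the 30% to 60% band


one_hit = [800_000, 600, 600, 600, 600]
print(round(concentration_ratio(one_hit), 3), concentration_label(concentration_ratio(one_hit)))
```

With only five uploads, a perfectly even split already sits at 20%, so the under-30% band is a fairly tight requirement on a channel this young.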

Skipping the cohort comparison. A channel-level metric in isolation is not a pre-blow-up signal; it becomes one only relative to a peer cohort of similar-age, similar-format channels. A 5,000-views-per-day channel is a pre-blow-up signature if the cluster median is 500 and unremarkable if the cluster median is 12,000. Bucket by format cluster before computing the pre-blow-up multiple — the bucketing NicheBreakout's methodology does internally so the read stays apples-to-apples.
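A minimal sketch of the bucketing step, assuming each peer channel has already been tagged with a format cluster and has a views-per-day figure. The dictionary keys, the cluster labels, and the function names are hypothetical; how NicheBreakout actually assigns clusters or age bands is not specified on this page.

```python
from collections import defaultdict
from statistics import median


def cluster_medians(peers):
    """Median views per day for each format cluster in the peer cohort."""
    buckets = defaultdict(list)
    for peer in peers:
        buckets[peer["cluster"]].append(peer["views_per_day"])
    return {cluster: median(values) for cluster, values in buckets.items()}


def cohort_multiple(channel, medians):
    """How many times the channel's velocity exceeds its own cluster median."""
    return channel["views_per_day"] / medians[channel["cluster"]]


peers = [
    {"cluster": "cluster-a", "views_per_day": 400},
    {"cluster": "cluster-a", "views_per_day": 500},
    {"cluster": "cluster-a", "views_per_day": 600},
    {"cluster": "cluster-b", "views_per_day": 10_000},
    {"cluster": "cluster-b", "views_per_day": 12_000},
    {"cluster": "cluster-b", "views_per_day": 14_000},
]
medians = cluster_medians(peers)
print(cohort_multiple({"cluster": "cluster-a", "views_per_day": 5_000}, medians))
print(cohort_multiple({"cluster": "cluster-b", "views_per_day": 5_000}, medians))
```

The same 5,000 views per day reads as a large multiple against a 500 median and as below-median against a 12,000 median, which is the point of bucketing before comparing.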

The clusters currently producing the most pre-blow-up channels in our scans

Pre-blow-up channels in our 2026 scans cluster into a handful of format-topic intersections that keep producing sub-30-day candidates. Read the list as observation, not as a ranking — there is no "best pre-blow-up niche," only formats whose peer-cohort comparison is currently producing channels at the under-30-day layer. Across the channels currently inside our live 30-day window — a subset of the broader 2,082-channel scan — the densest niche clusters meeting our sample-size threshold are:

The Shorts-first vs long-form split inside those top clusters looks like this in our dataset:

| Niche | Shorts-first % | Long-form-first % | Mixed % | Sample |
|---|---|---|---|---|
| Celebrity Trending News & Viral Moments | 100% | 0% | 0% | 10 |

Format clustering is the right unit of analysis because cohort medians are most meaningful inside a format cluster. A TTS-narration history-shorts channel compared against other TTS-narration history-shorts channels produces a clean cluster-multiple read; the same channel compared against all history channels produces a noisy comparison dominated by format-fit variance. Two structural patterns hold across the snapshots: short-form clusters consistently outnumber long-form clusters at the pre-blow-up layer because the Shorts feed gives newer channels more recommender exposure per upload; and faceless production modes (TTS narration, AI imagery, stock footage with text overlay) outnumber face-on-camera modes at the same layer because lower production-cost-per-upload lets the channel hit the cadence the recommender reads as positive signal during its first 30 days.

Separately from the live snapshot above, NicheBreakout maintains dedicated programmatic topic pages for five recurring format clusters that produce pre-blow-up channels repeatedly in our scans:

- AI story channels: TTS narration plus AI imagery, recurring story templates, Shorts-first publishing.

- Reddit story channels: TTS reading r/AmITheAsshole, r/ProRevenge, r/MaliciousCompliance threads with stock visuals.

- History shorts channels: fact-stacking with cinematic visuals, vertical and horizontal variants.

- Faceless storytelling channels: broader narrative format spanning fiction and non-fiction.

- Quiz channels: interactive Q&A format, often Shorts-first with text overlays.

The parent YouTube outlier finder pillar covers the cross-cluster methodology, and the faceless YouTube niches sister pillar covers the production-mode angle most pre-blow-up-producing clusters fall under.

FAQ

How do you find YouTube channels before they blow up?

By running a deterministic filter over public YouTube Data API v3 metadata on the youngest slice of the channel population. The earliest-window read uses three hard gates (channel age ≤ 45 days, first-five-upload sum views ≥ 10,000, lifetime views per day ≥ 1,000), restricted to the sub-30-day slice where the public-data signature is showing but the recommender has not yet fully amplified the channel against the broader audience. The label is descriptive of the current signature, not predictive of which sub-30-day channels will keep scaling. The full methodology is on the methodology page; the manual workflow is on how to find small YouTube channels.

Can you predict which YouTube channel will go viral?

No, and any tool claiming to is selling a number it does not have. The variables that determine whether a sub-30-day channel keeps scaling are mostly downstream of inputs the public YouTube Data API does not expose: posting cadence over the next sixty days, title and thumbnail experiments, the recommender's evolving format weighting, audience saturation, copyright or policy events. What public data does support is the present-tense observation that a specific sub-30-day channel currently shows the public-data signature consistent with channels that have gone on to break out. The observation is defensible; the prediction is not, and the page deliberately stays inside the defensible half.

What does "before they blow up" actually mean?

It means the channel's public-data signature is showing the early-traction pattern but the channel has not yet accumulated the audience momentum that makes it obvious to the broader market. In NicheBreakout's terms, the cleanest reading of the phrase is the under-30-day slice of the live library — channels currently less than 30 days from creation that have already cleared the three hard gates. The recommender is doing the lifting; the subscriber base is still small enough that the cohort comparison is clean; the channel has not yet been priced in by the broader audience layer (channels reaching it through search, through external links, through manual subscription). The phrase is descriptive of the window, not predictive of the outcome.

Is there a free way to find pre-blow-up channels?

Yes. The Friday digest sends three current sub-30-day channels every week with format fingerprints and outbound YouTube links — free, present-tense. The full live cohort under 30 days old is the paid workflow surface because the under-30-day window is the actionable read for researchers trying to study the format before it saturates. The matured public archive opens as a second free surface in summer 2026 once the first detected cohort ages past the 60-day post-detection mark; that archive will preserve the detection-time public fields so a reader can see what the signature looked like inside the pre-blow-up window.

How accurate is pre-blow-up detection?

Accuracy is the wrong frame because the page is not making predictive claims. The detection is deterministic — every flagged channel currently meets the three hard gates and every channel card outbound-links to YouTube so the public metrics can be verified on the source in under two minutes. The downstream question of how many flagged sub-30-day channels actually go on to break out at scale is a different question, and one the page deliberately does not promise to answer. A meaningful share regress to the cohort median or stop publishing entirely within sixty days; another meaningful share keep scaling. What the public-data signature can tell you is which sub-30-day channels currently show the pattern consistent with future scaling — not which ones will get there.

What's the youngest channel you've found in the cohort?

The early-traction velocity bonus tier surfaces channels at the extreme end: age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000. The youngest channels that clear all three hard gates plus the velocity bonus are typically between 5 and 14 days from creation with 4 to 12 uploads. Channels younger than 5 days rarely have enough uploads to clear the first-5 sum gate cleanly, which is by design: three uploads sharing 10,000 views is a different signal than five uploads sharing 10,000, and the methodology weights the larger sample.

Can I subscribe to alerts for new channels?

The Friday digest is the current weekly-cadence alert surface — three current sub-30-day channels every Friday, free. A higher-frequency alert tier is on the product roadmap and will surface channels at the moment they cross the three hard gates rather than at the weekly digest cadence; that tier will sit alongside the paid library and is not yet available. The fastest current way to see new channels in the pre-blow-up window is the live library itself, which refreshes daily and is filterable to the sub-30-day slice.

How is this different from /breakout-youtube-channels?

Breakout YouTube channels defines the product-language object — channels already inside their breakout window (under 45 days, cleared gates, currently outpacing cohort). This page is the earliest-window slice of the same object: the sub-30-day sub-cohort where the public-data signature is showing but the recommender has not yet fully amplified the channel against the broader audience. The breakout page is the credibility anchor; this page is the timing anchor — the slice where the observation is most informative for a researcher trying to read the format before it saturates.

Methodology / About this analysis

NicheBreakout's research relies entirely on YouTube Data API v3 public fields: channel age (from creation date), subscriber count (rounded to three significant figures), video count, view count, video metadata, video publish dates, video duration, and recent video performance. The pre-blow-up observations on this page are derived from the same scan that powers the main live library — no separate dataset, no authenticated YouTube Analytics API access, no inferred private metrics, no AI-generated narratives explaining why specific channels are inside their pre-blow-up window, and no predictive claims about which specific channels will go on to break out. Cohort medians are computed inside format clusters (Shorts-first vs long-form, faceless production mode vs face-on-camera, primary niche tag) so the under-30-day signature read stays apples-to-apples.
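For readers reproducing the read by hand, the sketch below turns one item from a `channels.list` response (requested with the `snippet` and `statistics` parts) into channel age and views per day. The function name and the output keys are hypothetical, and the sample item is hand-written for illustration, not a real response. It relies on the API returning `publishedAt` as an ISO 8601 timestamp and the counts as strings; timestamps with unusual fractional-second precision may need extra handling on Python versions before 3.11.

```python
from datetime import datetime, timezone


def parse_channel_item(item: dict, now: datetime) -> dict:
    """Reduce one channels.list item to age, counts, and views per day."""
    # Swap the trailing "Z" so datetime.fromisoformat accepts the timestamp
    # on Python versions before 3.11 as well.
    created = datetime.fromisoformat(item["snippet"]["publishedAt"].replace("Z", "+00:00"))
    stats = item["statistics"]
    age_days = (now - created).days
    view_count = int(stats["viewCount"])  # counts arrive as strings
    hidden = stats.get("hiddenSubscriberCount", False)
    return {
        "age_days": age_days,
        "view_count": view_count,
        "video_count": int(stats["videoCount"]),
        # Subscriber count is unavailable when the owner hides it.
        "subscriber_count": None if hidden else int(stats.get("subscriberCount", 0)),
        "views_per_day": view_count / max(age_days, 1),
    }


# Hand-written sample item, not a real API response.
sample = {
    "snippet": {"publishedAt": "2026-04-28T00:00:00Z"},
    "statistics": {
        "viewCount": "204400",
        "subscriberCount": "4000",
        "hiddenSubscriberCount": False,
        "videoCount": "7",
    },
}
print(parse_channel_item(sample, now=datetime(2026, 5, 12, tzinfo=timezone.utc)))
```

Passing `now` explicitly keeps the age calculation reproducible, which matters when comparing a detection-time reading against a later one.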

Original-research artifacts in this article: the under-30-day window argument for where the pre-blow-up signature is cleanest, the prediction-vs-observation distinction, the deterministic flagging methodology, the five-step public-data signature read, the audience-momentum-contamination argument for why later windows degrade the read, and the revealed channel cards above the fold. The pre-blow-up-producing format clusters discussed reflect what we have scanned, not the entirety of YouTube. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.


Related research

- YouTube outlier finder: the parent pillar covering channel-level vs video-level outlier detection and the statistical case behind the pre-blow-up label.

- Breakout YouTube channels: sibling cluster page covering the product-language framing of channels already inside their breakout window.

- Up-and-coming YouTube channels: sibling cluster page covering the rhetorical framing of channels under 90 days old with traction.

- New YouTube channels growing fast: sibling cluster page covering the per-day velocity framing on the same object.

- Small YouTube channels blowing up: sibling cluster page covering the channel-level inflection-moment framing.

- How to find small YouTube channels: the lateral manual-workflow guide for researchers building a private pre-blow-up workflow.

- YouTube channel research: sister pillar covering the broader channel-discovery category that pre-blow-up detection sits inside.

- YouTube niche finder: sister pillar covering niche research across breakout-channel discovery and the broader niche-finder category.

- Faceless YouTube niches: sister pillar covering the production-mode angle that dominates most pre-blow-up-producing clusters.

- YouTube Shorts trends: sister pillar covering the Shorts-first publishing angle that dominates the sub-30-day layer.

- Most profitable YouTube niches: companion pillar covering the public-data-vs-private-data boundary on revenue and monetization claims.

- AI story channels: programmatic topic page tracking the AI-storytelling pre-blow-up cluster.

- Reddit story channels: programmatic topic page tracking the Reddit-narration pre-blow-up cluster.

- History shorts channels: programmatic topic page tracking the history-shorts pre-blow-up cluster.

- Faceless storytelling channels: programmatic topic page tracking the broader storytelling cluster.

- Quiz channels: programmatic topic page tracking the quiz/trivia cluster.

The Friday digest sends three current sub-30-day YouTube channels every week with format fingerprints and outbound YouTube links — free, present-tense. The full live cohort under 30 days old is the paid workflow surface; the matured public archive opens as a second free surface in summer 2026. See pricing for the current tier; subscribe to the digest free.


See the YouTube channels currently inside their pre-blow-up window

Every channel card outbound-links to YouTube so you can audit the public metadata yourself. The full live cohort under 30 days old is the paid workflow; the Friday digest is free.