YouTube automation niches: where small-channel breakouts still exist after the AI flood

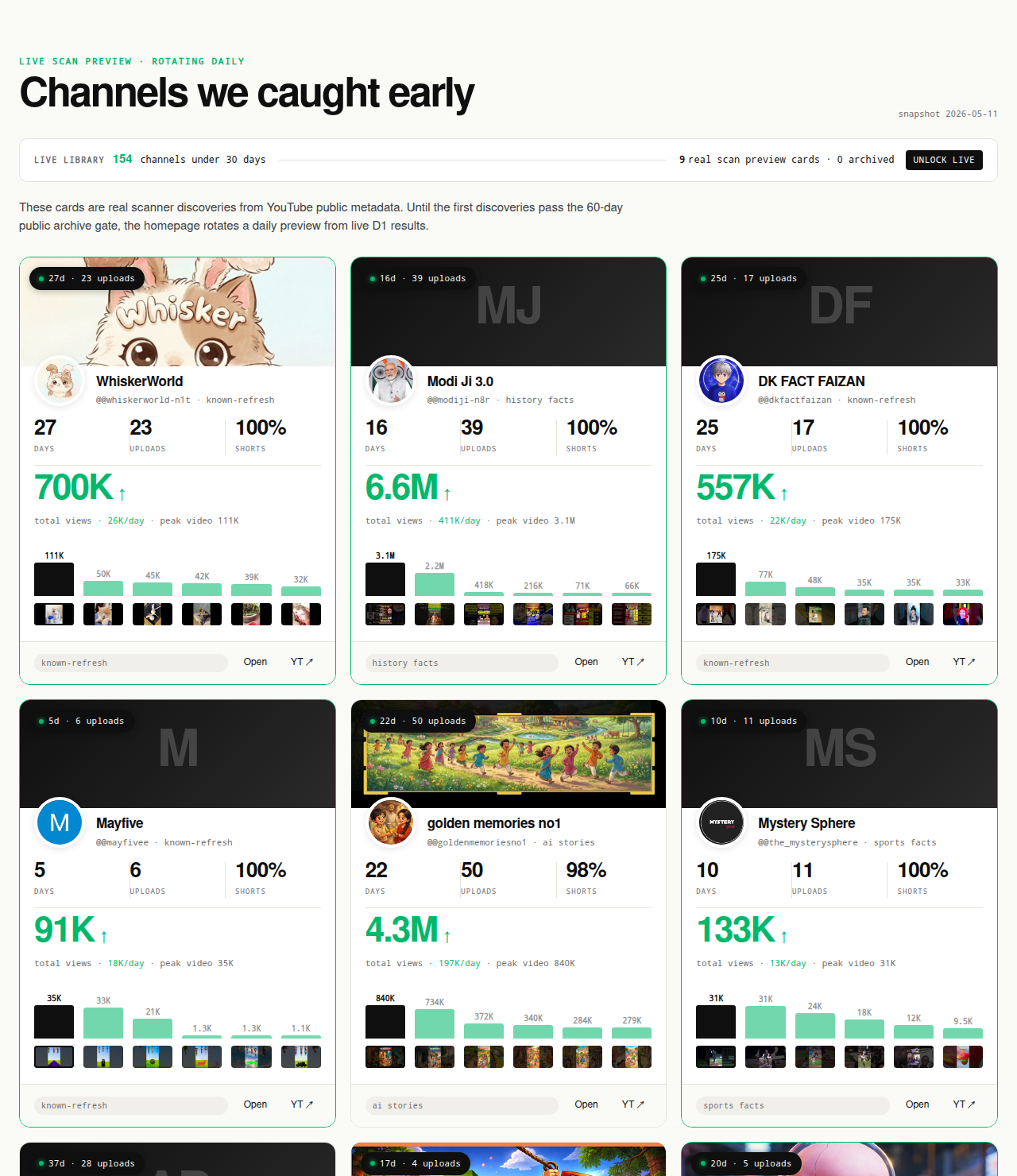

YouTube automation niches are a contested category. To some operators the term means an agency-run multi-channel business with outsourced editing and shared production pipelines; to others it means an AI-tooled solo production stack on one channel. Both interpretations share a structural problem: automation lowers cost-to-replicate, which pulls saturation timelines forward. A working automation niche today is one where small channels are still breaking out despite the post-2024 wave of new automation operators, as measured by public Data API v3 signals: channel age, first-5 sum views, lifetime views per day, and format clarity. NicheBreakout's research base is 2,082 channels scanned to date, using public metadata only, with no RPM claims and no AdSense inference.

The Friday digest reveals three current breakout channels every week for free — automation-style and creator-led production both. The live 30-day window — dozens of channels under 30 days old right now — is the paid workflow surface; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages out.

What "YouTube automation" actually means in 2026

"YouTube automation" is a contested label in 2026. The same phrase is used for two operator models that overlap but are not the same, and the niche advice that works for one model breaks for the other. Both interpretations need to be on the page before any specific niche recommendation makes sense, because the operator's actual model determines which niche-type survives.

Agency-run YouTube automation is the multi-channel business model. One operator or a small team runs several channels in parallel, each in its own niche, sharing a back-office workflow: scriptwriting templates, narration assets, editing pipelines, thumbnail systems, scheduled-upload calendars. The differentiator at the operator level is portfolio breadth and production throughput; the differentiator at each individual channel level is the format-topic combination and the editorial taste applied to it. The Cody-Sperber-era "YouTube automation business" courses popularized this framing — investor-style operators treating channels as cash-flowing assets to be built, optimized, and sometimes sold. The model survives in 2026 but with much tighter constraints than the 2020-2022 wave: YouTube's 2024 Partner Program update around inauthentic content explicitly targets template-at-scale operations that recycle other creators' material.

AI-tooled YouTube automation is the production-stack choice for a single channel. One operator uses TTS narration, LLM-assisted scripting, AI imagery, and scheduled publishing to reduce per-upload time from a creator's working day to a couple of hours. The channel is still one channel; the "automation" is in the per-upload pipeline, not in the portfolio. This model has grown rapidly post-2023 because the production-cost reductions are real and the tooling is widely available. It is the more common interpretation of the term among working faceless creators, while the agency-run interpretation dominates business-school-style automation courses.

The two models overlap: an agency-run automation business almost always uses AI tooling on each of its channels, and an AI-tooled solo operator sometimes expands into a small portfolio of channels once the first channel works. But the operator's primary model determines which niches make sense. The agency-run model rewards format replicability — the operator needs to run the same production rig across multiple channels, which favors niches where the format is templatable and the topic-rotation is easy. The AI-tooled solo model rewards editorial iteration — the operator can spend the production-time savings from AI tooling on thumbnail tests, hook rewrites, and format experiments inside one channel, which favors niches where editorial taste differentiates rather than throughput.

This page covers both. The niche analysis later in the article notes which clusters fit the agency-run profile, which fit the AI-tooled solo profile, and which fit both. Distinguishing the two interpretations is also why the page is separate from the parent faceless YouTube niches pillar. The parent pillar covers the production-style question (what does faceless mean, which faceless production modes are working). This cluster covers the operator-workflow question (which niches survive an automated pipeline given that automation lowers cost-to-replicate).

Why automation niches need a stricter breakout check than non-automation niches

Automation niches saturate faster than non-automation niches. The mechanism is direct and falsifiable. When a face-on-camera creator with a strong personal style demonstrates a working format, copying that channel requires another creator with comparable presence, comparable editorial taste, and comparable production effort per upload — a slow replication function. When an automation operator demonstrates a working format-topic combination with TTS narration, AI imagery, and a templatized edit, copying the format requires another operator with a similar production rig and a willingness to spend a week setting it up. Cost-to-replicate is lower by an order of magnitude, and the time-to-replicate compresses from months to weeks.

The compounding effect is that fifty operators can copy a working automation format inside ninety days, while ninety days is also roughly the window in which the original operator's recommender lift might still be saturating. The result is a market structure where the first-mover advantage in an automation niche is shorter, the saturation curve is steeper, and the breakout window inside any given format-topic intersection is narrower. None of these are arguments against running an automation channel. They are arguments for using a stricter "is anyone currently breaking out at this exact intersection right now" check than the equivalent check for a non-automation niche.

What "stricter" means in practice: the channel-age signal carries more weight, the freshness of the breakout cohort matters more, and the operator's window for validating a niche before committing production capital is shorter. A non-automation niche where breakout channels surface every six months is workable; an automation niche where breakout channels surfaced eighteen months ago and have not surfaced since is probably saturated. The methodology section below operationalizes this into a check: for automation-fit niches, the relevant signal is breakout density inside the most recent scan windows, not historical breakout density across the last two years.
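The recency check described above can be sketched in a few lines. This is a minimal illustration, not NicheBreakout's implementation: the function names and the 90-day default window are assumptions chosen for the example, and the input is simply a list of dates on which breakout channels were detected in a niche.

```python
from datetime import date, timedelta


def recent_breakout_count(detection_dates, today, window_days=90):
    """Count breakout detections inside the most recent window.

    window_days=90 is an illustrative assumption, not a published setting.
    """
    cutoff = today - timedelta(days=window_days)
    return sum(1 for d in detection_dates if cutoff <= d <= today)


def looks_stale(detection_dates, today, window_days=90):
    """True when a niche has historical breakouts but none in the recent window."""
    has_history = len(detection_dates) > 0
    return has_history and recent_breakout_count(detection_dates, today, window_days) == 0


today = date(2026, 1, 1)
old_only = [date(2024, 6, 1), date(2024, 7, 1)]      # breakouts roughly 18 months ago
still_active = old_only + [date(2025, 12, 1)]        # plus one recent breakout

print(looks_stale(old_only, today))       # True
print(looks_stale(still_active, today))   # False
```

The design choice is to ignore the historical total entirely: a niche with many old breakouts and none recently is flagged the same way as a niche with one old breakout and none recently.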

The cluster-mix snapshot below shows the densest niches across our most recent scans. For automation-considering operators, the right reading is to look at which of these clusters contain templatable formats, then drill down to the specific format-topic intersection inside each cluster where current breakouts are concentrated:

The presence of automation-friendly clusters near the top of that list is consistent with the saturation argument above, not proof of it — it tells you which clusters are currently producing breakouts, and the operator's job is to verify whether the specific format-topic intersection they are considering is part of the active cohort or part of the saturated tail. The cluster ranking shifts week to week as new formats surface and older ones saturate. Read it as a current snapshot, not a market-wide claim, and read it more often if the operator is in an automation niche.

The deterministic filter for a working automation niche

NicheBreakout applies the same three hard public-metadata gates to automation-style and creator-led channels. The full methodology lives on the methodology page; the version below is the abbreviated readout, with notes on where the automation-specific weighting differs.

Channel age: detected within 45 days of channel creation

First-5 upload views: combined views across the first five public uploads ≥ 10,000

Views per day: lifetime channel views ÷ channel age ≥ 1,000

Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels

Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000

The three gates each isolate a different piece of the early-traction picture, and each of them matters more for automation niches than for the equivalent non-automation niche.
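As a rough sketch, the three hard gates can be expressed as one boolean check. The thresholds come from the list above; the `ChannelSnapshot` type, its field names, and the `passes_gates` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import List

MAX_AGE_DAYS = 45            # channel age gate
MIN_FIRST5_SUM = 10_000      # combined views across the first five public uploads
MIN_VIEWS_PER_DAY = 1_000    # lifetime channel views divided by channel age


@dataclass
class ChannelSnapshot:
    """Hypothetical container for the public fields the gates need."""
    age_days: int
    first5_views: List[int]
    lifetime_views: int


def passes_gates(ch: ChannelSnapshot) -> bool:
    """Return True only if all three hard gates are met."""
    if ch.age_days <= 0:
        return False  # cannot compute views per day for a zero-age channel
    first5_sum = sum(ch.first5_views[:5])
    views_per_day = ch.lifetime_views / ch.age_days
    return (
        ch.age_days <= MAX_AGE_DAYS
        and first5_sum >= MIN_FIRST5_SUM
        and views_per_day >= MIN_VIEWS_PER_DAY
    )


fresh = ChannelSnapshot(age_days=30, first5_views=[4000, 3000, 2000, 1000, 500], lifetime_views=45_000)
aged = ChannelSnapshot(age_days=60, first5_views=[4000, 3000, 2000, 1000, 500], lifetime_views=90_000)

print(passes_gates(fresh))  # True
print(passes_gates(aged))   # False
```

The second channel clears both view thresholds and still fails, because all three gates must hold at once.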

Channel age ≤ 45 days matters more for automation because the saturation timeline is shorter. A breakout channel detected at 200 days into its life in a face-on-camera niche tells you the format-audience match is still warm; the same detection in an automation niche tells you the channel got in before the copy wave and may not be replicable now. The 45-day window is the same for both, but the inferential weight of "channel age > 45 days and currently breaking out" is asymmetric — strong signal for non-automation, weaker signal for automation. The methodology does not weight this asymmetry numerically; the operator does, by reading recent-cohort data when the niche is automation-eligible.

First-5 sum views ≥ 10,000 filters out single-video flukes. For automation channels the threshold matters because automation operators often publish at high cadence — five uploads in twenty days is common — which means the first-5 sum is reached on a tight timeline. A channel that has not cleared 10,000 across its first five uploads inside an automation niche is signaling poor format-fit, poor thumbnail iteration, or post-saturation entry timing, in roughly that order of likelihood. The gate is the same number for both production modes; the diagnostic value is higher for automation because the publishing cadence makes the signal land sooner.

Lifetime views per day ≥ 1,000 is the cleanest velocity check available from public metadata. For automation channels the velocity floor matters because automation operators are running a production cost curve — TTS spend per upload, editing spend per upload, asset licensing per upload — that needs a minimum view velocity to recover at any monetization model. A channel below 1,000 views/day across its first 45 days is probably not breaking the per-upload cost floor at typical ad-share rates, which means the channel is either subsidized by the operator's other income or about to stall. The methodology does not estimate revenue (revenue is Analytics-only and not third-party-readable), but the velocity floor is a defensible proxy for "this format is reaching its intended audience."
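For illustration, the velocity figure can be derived from two public fields in a Data API v3 `channels.list` response (`snippet.publishedAt` and `statistics.viewCount`). The sketch below only parses an already-fetched response item; the `age_and_velocity` name and the one-day minimum age (to avoid division by zero) are assumptions made for this example.

```python
from datetime import datetime, timezone


def age_and_velocity(item, now):
    """Return (age_days, views_per_day) for one channels.list item.

    `item` is expected to carry the `snippet` and `statistics` parts.
    The API returns viewCount as a string, so it is converted to int.
    """
    published = datetime.fromisoformat(
        item["snippet"]["publishedAt"].replace("Z", "+00:00")
    )
    # Assumption for this sketch: treat same-day channels as one day old.
    age_days = max((now - published).days, 1)
    lifetime_views = int(item["statistics"]["viewCount"])
    return age_days, lifetime_views / age_days


sample_item = {
    "snippet": {"publishedAt": "2026-01-01T00:00:00Z"},
    "statistics": {"viewCount": "60000"},
}
now = datetime(2026, 1, 31, tzinfo=timezone.utc)

print(age_and_velocity(sample_item, now))  # (30, 2000.0)
```

Nothing here touches revenue fields; both inputs are readable for any public channel, which is the point of using velocity as the proxy.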

The two score bonuses sharpen the ranking inside the filtered set. Format clarity rewards channels with a consistent Shorts-first or long-form-first ratio over format-mixed channels — relevant for automation because the templatized production rig usually favors one format and a format-mixed automation channel typically signals an operator who has not committed to either Shorts-feed or Browse-feed mechanics. Early-traction velocity (age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000) catches the fastest-moving channels at the top of any niche cluster — the ones where format-fit is unambiguous and the operator's window of replicability before the copy wave arrives is largest.
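A small sketch of the early-traction check follows. The three thresholds are the ones stated above; the source does not say how large the boost is or whether the conditions stack, so this example only reports which conditions are met and leaves the scoring out. The function name and label strings are hypothetical.

```python
def early_traction_signals(age_days, first5_sum, views_per_day):
    """List which early-traction conditions a channel meets.

    The size of the score boost is not specified in the methodology
    summary, so this sketch returns labels rather than a number.
    """
    signals = []
    if age_days <= 14:
        signals.append("age<=14d")
    if first5_sum >= 50_000:
        signals.append("first5>=50k")
    if views_per_day >= 5_000:
        signals.append("views/day>=5k")
    return signals


print(early_traction_signals(10, 20_000, 3_000))   # ['age<=14d']
print(early_traction_signals(30, 60_000, 6_000))   # ['first5>=50k', 'views/day>=5k']
print(early_traction_signals(30, 20_000, 2_000))   # []
```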

Average first-five-video views across populated grade tiers in our discoveries cohort looks like this (grades with no current members are suppressed until they fill in):

The automation niches with current small-channel breakouts in our scans

This section does not rank automation niches by claimed RPM. As the closing FAQ and the boundary section below cover in detail, per-niche RPM is not third-party-readable, and every listicle ranking automation niches by claimed earnings is extrapolating from anecdote. What we can publish is the cluster-density observation: which automation-compatible niche clusters are currently producing small-channel breakouts under the methodology described above, in no particular order, with internal links to the dedicated programmatic topic pages where the actual breakout examples live.

AI storytelling channels are a high-volume cluster in our 2026 scans, and the cluster that most directly fits both interpretations of automation. The format — TTS narration plus AI-generated imagery, recurring story templates, Shorts-first publishing — is templatable enough for the agency-run model and editorial enough at the sub-format level for the AI-tooled solo model. The parent topic is saturated; current breakouts live at specific sub-intersections inside it (AI-generated horror anthologies, AI-generated medieval-history narratives, AI-generated true-crime-adjacent storytelling). The AI story channels programmatic page tracks the cluster with the same outbound-link verification as the main library. Operator-fit note: this is the most copy-dense cluster in our scans; the freshness of the breakout cohort matters more here than in any other niche on the page.

Reddit narration channels read TTS over r/AmITheAsshole, r/ProRevenge, r/MaliciousCompliance, and adjacent story threads with stock visuals or simple character overlay. The format is templatable enough for the agency-run model, but it is the cluster that suffered most from the 2024 Partner Program update on mass-produced content — operators who scaled to multiple channels reading raw threads with no editorial framing hit demonetization across the portfolio. The Reddit story channels programmatic page covers the cluster. Operator-fit note: the channels still breaking out in this cluster are adding character voicing, editorial selection across multiple threads, or original commentary over the raw thread — the editorial layer is the differentiator, not the production rig.

History shorts channels stack fact-density inside 45-to-75-second vertical videos with cinematic visuals (archival, AI-generated, or hybrid). The format is highly templatable and well-suited to either operator model: history is an effectively bottomless topic pool, the visuals are licensable or generatable, and the TTS-friendly script structure is consistent. The history shorts channels programmatic page indexes the cluster. Operator-fit note: format-mixed history channels (vertical and horizontal on the same channel) underperform format-consistent ones in our scans; the operator's first production decision should be Shorts-first or long-form-first, not a vague "history channel" commit.

Faceless storytelling channels span the broader narrative cluster: fiction and non-fiction narration, faceless documentaries, and faceless explainer formats. Breakout density skews toward voiceover-plus-B-roll production over pure TTS, which makes this cluster a better fit for the AI-tooled solo operator than for the agency-run portfolio operator. Editorial voice in the script is the differentiator; template channels saturate quickly. The faceless storytelling channels programmatic page covers the cluster. Operator-fit note: this is the cluster where editorial taste matters most; an agency-run operator without a strong editorial process per channel will struggle here.

Quiz and trivia channels use interactive Q&A formats, often Shorts-first with text overlays and a countdown timer. Production cost per upload is the lowest of any cluster on this page, which makes it the highest-throughput option for the agency-run model and the easiest entry point for a new AI-tooled solo operator. The quiz channels programmatic page tracks the cluster. Operator-fit note: visual-template saturation is real. Operators copying the same overlay style at high volume across multiple channels start triggering YouTube's mass-production heuristics; question selection and difficulty calibration are the editorial differentiators that hold up.

Other clusters surface periodically in our scans without dedicated programmatic pages. Finance explainer channels (faceless screen-recorded chart breakdowns, faceless TTS-over-graphs) appear in scan windows where macroeconomic news drives audience attention; the cluster is the most heavily targeted by recycled "highest-RPM" listicle copy, which has driven operator entry without driving recommender lift. Scary-story narration channels periodically surface as a breakout cluster adjacent to AI storytelling; copyright collisions on narration of others' creative writing are a recurring monetization risk. List-of-X channels (top-10 vertical shorts with TTS) periodically surface with very high publish cadence; list-template saturation is the fastest of any cluster in our scans, and channels that rely entirely on the template without editorial selection saturate within months. None of these clusters is "the best automation niche." Each is a cluster where current public-data signal indicates the recommender is currently lifting new entrants at specific format-topic intersections.

Why format-fit matters more than topic in automation

For automation operators specifically, the topic is the negotiable part of the channel decision; the format is the load-bearing part. YouTube's recommender (the Browse feed, Suggested videos, the Shorts feed, and Search ranking) reads channel-level format consistency as a signal long before it has enough watch-time data to read topical strength. The recommender's evaluation surface for a new channel is roughly: which audience profile does this channel's first batch of uploads teach me, and how confident can I be in that profile from the first few hundred impressions. A format-consistent channel teaches a clean profile. A format-mixed channel teaches an ambiguous one.

This matters more for automation because automation production is templatable but only if the format is locked. The cost advantage of an automated production rig comes from running the same rig across many uploads; the rig has to produce one type of upload to capture that cost advantage. An operator who runs an AI-storytelling rig on Monday, a Reddit-narration rig on Wednesday, and a history-shorts rig on Friday is paying full per-upload production cost three different ways and teaching the recommender three different audience profiles for one channel. The economics and the recommender mechanics agree: pick one format, lock the rig, and rotate topics inside it.

The format-clarity bonus in NicheBreakout's score formula is the methodology's way of putting that pattern into a number. A channel with a Shorts ratio above 0.8 or below 0.2 gets a score bump over a 0.4-to-0.6 mixed channel; the bonus is small individually but compounds with the early-traction velocity bonus to push format-consistent breakout channels to the top of any cluster ranking. Inspect the live library by cluster and the Shorts-first vs long-form split inside the top automation-friendly clusters lines up like this:

| Niche | Shorts-first % | Long-form-first % | Mixed % | Sample |
|---|---|---|---|---|
| Celebrity Trending News & Viral Moments | 100% | 0% | 0% | 10 |

The corollary for an automation operator is that the first production decision — Shorts-first or long-form-first — is more consequential than the topic decision. A history-shorts rig is a different production line from a long-form-history-documentary rig, with different asset libraries, different script lengths, different TTS pacing, and different thumbnail conventions. An operator who picks "history" without picking the format ends up either rebuilding the rig mid-channel or running both formats inconsistently. Either outcome is worse than picking the format first and the topic second.
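The Shorts-ratio bands behind the format-clarity bonus can be sketched as a simple classifier. Two things here are assumptions made for the example: the ratio is computed as the share of uploads that are Shorts, and every ratio between 0.2 and 0.8 is labeled mixed (the text only names the 0.4-to-0.6 band explicitly). The `format_profile` name is hypothetical.

```python
def format_profile(shorts_uploads, total_uploads):
    """Classify a channel by its Shorts ratio.

    Above 0.8 is Shorts-first, below 0.2 is long-form-first.
    Treating everything in between as "mixed" is an assumption.
    """
    if total_uploads <= 0:
        raise ValueError("total_uploads must be positive")
    ratio = shorts_uploads / total_uploads
    if ratio > 0.8:
        return "shorts-first"
    if ratio < 0.2:
        return "long-form-first"
    return "mixed"


print(format_profile(9, 10))   # shorts-first
print(format_profile(1, 10))   # long-form-first
print(format_profile(5, 10))   # mixed
```

An operator could run this against a channel's own upload history before launch planning; a result of "mixed" is the case the surrounding text warns about.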

The same logic applies inside a multi-channel agency portfolio. The portfolio operator who runs three Shorts-first channels in three different topics is running one production rig three times — efficient. The portfolio operator who runs one Shorts-first channel, one long-form channel, and one mixed channel is running three production rigs — inefficient. The portfolio-economics argument and the recommender-mechanics argument point at the same operator decision: lock the format, then rotate topics inside the format. Anyone running automation channels at scale who skips that step is leaving both production cost and recommender lift on the table.

AI-content disclosure and YouTube monetization for automation channels

Two YouTube policy surfaces govern automation channels. The first is the original-content rule: YouTube's monetization policy disallows uploading content that "isn't yours" — including clips, content from social media, and songs — without significant original commentary or value-add. The canonical statement lives in the YouTube Help Center entry on original content (YouTube Help: Reused content policy), and the 2024 Partner Program update sharpened enforcement against channels that mass-produce videos with no original creative work. Automation channels with original scripts, original narration choices, and edited visuals are inside policy; automation channels reuploading other creators' videos with a TTS voiceover added are not.

The second is the AI-content disclosure rule. YouTube's Help Center requires creators to mark "altered or synthetic content" in Creator Studio when a video meaningfully alters reality (YouTube Help: Disclosing use of altered or synthetic content). The rule is narrower than the panic about it suggests. Generic synthetic narration in a clearly fictional story does not trigger the disclosure. AI imagery in a fictional or speculative story does not trigger the disclosure. What does trigger the rule: AI imagery depicting a real person, AI voice cloning of an identifiable person, AI imagery of real events presented as documentary footage, and AI-generated content that could be mistaken for authentic depictions of reality.

For an automation operator the practical compliance check is two-step. Per upload: does this video include any AI-generated element that could plausibly be mistaken for a real person, place, or event? If yes, enable the disclosure toggle in Creator Studio. The disclosure produces a label under the video and has minimal effect on watch time; failing to disclose can result in content removal or monetization restrictions. The cost-benefit is unambiguous, and operators unsure whether a specific upload triggers the rule should disclose by default. Per channel: is the production pipeline producing original creative work per upload, or is the channel pattern-matching with the mass-production heuristic? The two-step compliance check costs the operator minutes per upload and removes most of the enforcement risk that has accumulated in the automation category since 2024.

Monetization eligibility is a separate question. The YouTube Partner Program thresholds (1,000 subscribers and 4,000 watch hours in 12 months, or 1,000 subscribers and 10 million Shorts views in 90 days) apply to automation-style and creator-led channels identically. The 2024 inauthentic-content enforcement adds a layer on top: a channel meeting the numeric thresholds can still be rejected from monetization if YouTube's review concludes the production is mass-produced without original work. That review applies to the channel pattern across uploads, not to any single upload — which is why a multi-channel agency operator running similar templates across the portfolio carries a portfolio-level enforcement risk, not just a per-channel one.
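The numeric thresholds quoted above reduce to one boolean expression. The sketch below encodes only those numbers; the function and parameter names are hypothetical, and passing the check says nothing about the originality review, which is a separate step.

```python
def meets_ypp_thresholds(subscribers, watch_hours_12mo, shorts_views_90d):
    """Check the numeric Partner Program thresholds only.

    A True result is necessary but not sufficient: a channel can meet
    these numbers and still be rejected at review.
    """
    long_form_path = watch_hours_12mo >= 4_000
    shorts_path = shorts_views_90d >= 10_000_000
    return subscribers >= 1_000 and (long_form_path or shorts_path)


print(meets_ypp_thresholds(1_200, 4_500, 0))            # True  (watch-hours path)
print(meets_ypp_thresholds(1_200, 300, 12_000_000))     # True  (Shorts path)
print(meets_ypp_thresholds(900, 5_000, 15_000_000))     # False (under 1,000 subscribers)
```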

Operators running multi-channel automation portfolios should also know that the original-content rule applies at the portfolio level for some enforcement actions. A channel that fails the originality review can affect AdSense status for other channels associated with the same Google account; this is documented across YouTube's monetization-policy enforcement pages and is one of the structural risks of the agency-run model that single-channel AI-tooled operators do not carry. The mitigation is the same as the per-upload mitigation: original scripts, original narration choices, original visual editing, and per-channel editorial review at a rate the operator can actually sustain.

What we deliberately don't claim about automation profitability

NicheBreakout does not publish RPM figures, CPM estimates, revenue-per-channel claims, "highest-earning automation niche" rankings, or income projections of any kind for automation channels. Those metrics live behind the YouTube Analytics API and YouTube AdSense, which are not third-party-accessible for any channel the researcher does not own. Google's documentation on the Analytics API states the constraint directly — it "provides multiple reports that channel owners and content owners can use to retrieve YouTube Analytics data" (YouTube Analytics and Reporting APIs overview), with content-owner authentication required. A researcher who does not own the channel and is not authenticated as a content partner cannot read Analytics data for that channel, full stop.

The fields restricted to the Analytics API are precisely the fields that would actually answer "how profitable is this automation niche." Estimated revenue, estimated ad revenue, CPM, RPM, monetized playbacks, ad impressions, playback-based CPM, watch time, average view duration, audience retention, click-through rate, impressions, traffic source breakdown, audience demographics, and subscriber-vs-non-subscriber view share are all Analytics-only. Every metric that would let a third party reason about an automation channel's actual monetization quality is gated. This is not a NicheBreakout choice; it is how YouTube's product is designed.

Listicles ranking automation niches by claimed RPM are doing one of three things: presenting an estimate built from non-revenue signals (subscriber count, view count) as if it were a revenue measurement, recycling a per-niche RPM figure that was already an estimate two iterations back, or fabricating the number. Each of these fails the verification standard NicheBreakout's product surface holds itself to — every channel card outbound-links to YouTube so the public fields can be checked in one click, and a per-niche RPM claim cannot meet that standard because the underlying data is private. The boundary is structural, not editorial.

What is publishable from public data, for automation operators specifically: which niche-format intersections currently have small-channel breakouts under the methodology above, what production-mode patterns the breakouts share, what the Shorts-vs-long-form split looks like inside each cluster, and the specific channels surfacing inside each cluster. Whether any of those breakouts will translate to net revenue for a new automation operator entering the same intersection depends on the operator's production cost curve, the operator's monetization mix (ad-share alone, sponsorship, owned product, affiliate), and the operator's ability to hold the breakout window before the copy wave arrives. The niche signal is upstream of monetization but does not determine it.

Common mistakes new automation operators make

Five mistakes recur in operators entering the automation category.

Cloning a winning channel's topic without cloning its format. An operator reads a case study about a successful AI-storytelling channel, starts a channel in the same topic with a different production rig (long-form documentary instead of vertical TTS shorts, for example), and is confused when the recommender does not lift the new channel. The recommender ranks at the format-topic intersection layer; copying the topic but changing the format is starting a different channel from the recommender's point of view. The corrective is to read the production mode off the winning channel (video length, publish cadence, visual style, TTS pacing, thumbnail convention) and copy that, then rotate the topic inside the format.

Scaling to ten channels in one niche before validating one. The agency-run automation playbook circulating in 2022-2023 courses recommended portfolio breadth before validation; operators followed the playbook, built portfolios of similar template channels in the same niche, and hit the 2024 mass-production enforcement collectively. The corrective is the inverse: validate one channel inside one niche to first-5 sum ≥ 10,000 and views/day ≥ 1,000 before spinning up a second channel, then validate the second channel in a different niche before spinning up a third. Portfolio breadth is multiplication on a validated rig, not a substitute for validation.

Using a TTS voice that breaks the format-clarity signal. Generic TTS voices read every line at the same energy level, which is fine for a 30-second fact short and fatal for a 12-minute narrative. Higher-end TTS engines support inflection and pacing controls; lower-end engines do not. An operator running a narrative-heavy format on a flat TTS voice is signaling, both to viewers and to the recommender, that the channel is a template channel. The corrective is to match TTS quality to format demands — pick the voice that suits the longest content the channel plans to publish before committing the rig.

Picking a niche from a listicle without checking saturation. Most automation-niche listicles recycle niche names from 18-to-24 months prior, by which point the breakout window inside the listed niches has often closed. An operator who picks a niche because it appears on a 2026 listicle is reading a citation chain that traces back to 2023-2024 data, with the date updated. The corrective is to demand current channel-level evidence inside the niche — specific small channels under 45 days old at the format-topic intersection, outbound-linked to YouTube — and discount any niche claim that does not come with current channel proof. The YouTube niche validation checklist operationalizes this into a workflow.

Treating automation as a substitute for editorial judgment. AI tooling reduces per-upload production time; it does not produce editorial taste. An operator who uses the production-time savings to publish more videos with the same editorial floor accumulates volume without accumulating quality, which is the pattern the 2024 Partner Program enforcement explicitly targeted. The corrective is to spend the production-time savings on editorial iteration — thumbnail tests, hook rewrites, format experiments, narrative-arc adjustments — rather than on pure upload volume. The cost advantage of automation is real; the way to keep that advantage past the saturation curve is to apply it to editorial differentiation, not to throughput alone.

The cluster currently surfacing the most automation-friendly breakouts in our scans

Within the broader cluster mix above, the automation-friendly subset — clusters where the production rig is templatable enough for either the agency-run or the AI-tooled solo operator model — is a narrower set. The cluster currently surfacing the most automation-compatible breakouts in our scans is reported in the live-cluster snapshot below; the mix shifts week to week as new formats surface and older ones saturate, which is why an automation operator should re-check the cluster snapshot before any production commitment rather than relying on this article's static text.

The Shorts-first vs long-form split inside the top clusters is a second diagnostic an automation operator should read alongside the cluster ranking. Automation production rigs are format-specific; the operator wants to enter a cluster where the format-fit signal lines up with the production rig the operator can actually run:

| Niche | Shorts-first % | Long-form-first % | Mixed % | Sample |
|---|---|---|---|---|
| Celebrity Trending News & Viral Moments | 100% | 0% | 0% | 10 |

Operator-fit reading of those two snapshots: clusters with a strong Shorts-first skew favor the high-cadence agency-run model (Shorts rigs scale across multiple channels cheaply); clusters with a strong long-form skew favor the AI-tooled solo operator with editorial bandwidth (long-form rigs reward editorial iteration over throughput). Mixed-format clusters are a signal that the recommender is still sorting the cluster: the audience inside it has not yet collapsed onto one format, which is a window for an operator who can credibly run either format, and a hazard for an operator who tries to run both.
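One way to make that reading mechanical is to aggregate per-channel format labels into a cluster split and then map the split to an operator profile. This is a sketch under stated assumptions: the label strings, both function names, and the 70% cutoff for a "strong" skew are hypothetical, since the text does not define a numeric cutoff.

```python
from collections import Counter

LABELS = ("shorts-first", "long-form-first", "mixed")


def cluster_split(profiles):
    """Percentage of channels in each format label, rounded to whole percent."""
    if not profiles:
        raise ValueError("cluster has no channels")
    counts = Counter(profiles)
    return {label: round(100 * counts[label] / len(profiles)) for label in LABELS}


def operator_fit(split, strong_skew=70):
    """Map a cluster split to an operator profile.

    strong_skew=70 is an illustrative assumption, not a published threshold.
    """
    if split["shorts-first"] >= strong_skew:
        return "agency-run (high-cadence Shorts rig)"
    if split["long-form-first"] >= strong_skew:
        return "AI-tooled solo (editorial long-form rig)"
    return "unsettled cluster: pick one format, do not run both"


# Ten Shorts-first channels, matching the shape of the table row above.
split = cluster_split(["shorts-first"] * 10)
print(split)                # {'shorts-first': 100, 'long-form-first': 0, 'mixed': 0}
print(operator_fit(split))  # agency-run (high-cadence Shorts rig)
```

With a sample of ten channels the percentages move in steps of ten, so a cutoff of this kind is better read as a rough sort than as a precise boundary.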

The cluster-density observation across our most recent scans does not endorse any specific cluster as "the best automation niche." It surfaces which clusters are currently producing breakouts at all, which is the upstream question — automation operators picking a niche where no current breakouts are surfacing are betting against the recommender's current attention. The specific channels inside each cluster, with outbound YouTube links, live on the relevant programmatic topic pages: AI story channels, Reddit story channels, history shorts channels, faceless storytelling channels, and quiz channels. The operator's verification step is to open the relevant programmatic page, scan the under-45-day cohort, and read off the specific format-topic intersections currently breaking out before committing the production rig.

FAQ

What's a YouTube automation niche?

A YouTube automation niche is a content category that an operator can run as a small business with a templatized production pipeline — typically TTS narration, AI imagery or stock visuals, scripted hooks, scheduled uploads, and outsourced editing — instead of as a creator-led personal channel. The term covers two different operator models that often get conflated. The first is an agency-run multi-channel automation business, where one operator (or a small team) runs several channels in parallel, each with its own niche, all sharing a back-office workflow. The second is a single AI-tooled solo operator who automates as much of one channel's production pipeline as possible while staying inside YouTube's monetization rules. Both are "automation" in common usage; the niches each can survive are different, because the multi-channel agency model relies more heavily on format replicability and the AI-tooled solo model relies more on editorial iteration speed.

Are YouTube automation channels profitable?

Public data cannot answer that question for any specific channel an operator does not own. Per-channel revenue, RPM, and CPM live behind the YouTube Analytics API and YouTube AdSense, both of which are readable only by the channel owner. A third-party researcher cannot read the AdSense report for an automation channel run by someone else, which is the report that would actually answer the profitability question. Listicles ranking automation niches by claimed RPM are extrapolating from anecdote or recycling older guesses; the per-niche RPM figures in those articles cannot be sourced to YouTube's published documentation. What public Data API v3 metadata can tell an operator is which automation-compatible niches currently have small channels breaking out under the 45-day early-traction window. Whether any individual automation channel turns a profit depends on production cost (operator's TTS spend, editing spend, asset licensing), monetization mix (ad-share alone vs. ad-share plus sponsorship vs. ad-share plus owned product), and Partner Program eligibility, none of which the niche name determines.

What's the best YouTube automation niche for beginners?

There is no single answer that survives the saturation question. The honest framing: a new automation operator should pick a niche where small channels are currently breaking out under public-data signals, where the format is templatable inside the operator's actual production capacity, and where the cost-to-replicate is high enough that fifty other operators will not have copied the format inside ninety days. That third condition is what eliminates most of the listicle-popular "beginner" niches: TTS-over-stock-footage compilation channels are easy to start precisely because the format is replicable, which is exactly why the breakout window inside them is short. Beginners are better served by picking a format-topic intersection inside a broader category where editorial selection or input-source choice is the differentiator, not the production stack — for example, a curated historical-fact format inside the history-shorts cluster rather than a generic top-10 vertical with TTS narration. The dedicated beginner-focused cluster page on the parent pillar covers this in more depth.

Is YouTube automation legal?

Yes, when the content is original. YouTube's monetization policy disallows uploading content that "isn't yours" (clips, content from social media, or songs) without significant original commentary or value-add (see the YouTube Help Center entry on original content for the canonical statement). An automation channel that writes its own scripts, records or generates its own narration over those scripts, edits its own visuals, and publishes the result is inside policy. An automation channel that re-uploads other creators' videos with a TTS voiceover added is not, and was the target of the 2024 Partner Program update around "inauthentic content." The legality question and the monetization question are different: non-original content can stay on YouTube but get demonetized, which is a more common outcome than removal. Operators running multi-channel automation businesses need to think about both surfaces: every channel needs original work per upload, and every channel needs to keep that standard as the operator scales.

Do YouTube automation channels get monetized?

Yes, if they meet the Partner Program eligibility thresholds (1,000 subscribers and 4,000 watch hours in 12 months, or 1,000 subscribers and 10 million Shorts views in 90 days) and the content is original. The 2024 Partner Program update tightened enforcement against mass-produced content: TTS over reposted footage, AI-generated channels that recycle other creators' material, template channels published at scale by the same operator with no original creative work. Automation channels with original scripts, original narration choices, and edited visuals are reviewed on the same monetization criteria as any other channel; automation is not a disqualifier, but mass production without original work is. The disclosure rule for AI-generated content adds a second compliance layer: AI imagery depicting real people, AI voice cloning of identifiable people, and AI-generated content that could be mistaken for authentic depictions require the Creator Studio "altered or synthetic content" disclosure toggle. Generic synthetic narration in a clearly fictional story does not.
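The numeric half of that answer reduces to a short check. The sketch below encodes only the thresholds quoted above; the function name and parameters are hypothetical, and the inputs are figures a channel owner would read from their own dashboard. It says nothing about the originality review, which is a separate human and policy judgment.

```python
def meets_ypp_thresholds(
    subscribers: int,
    watch_hours_12mo: float,
    shorts_views_90d: int,
) -> bool:
    """Return True if the numeric Partner Program thresholds quoted above are met.

    Covers the numbers only; content originality is reviewed separately.
    """
    if subscribers < 1_000:
        return False
    long_form_path = watch_hours_12mo >= 4_000
    shorts_path = shorts_views_90d >= 10_000_000
    return long_form_path or shorts_path


# Illustrative inputs, not real channel data.
print(meets_ypp_thresholds(subscribers=1_200, watch_hours_12mo=4_300, shorts_views_90d=0))
```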

Can I run multiple YouTube automation channels?

Yes, and that is the core of the multi-channel agency model. YouTube's terms allow a single Google account to operate multiple channels; nothing in policy bars one operator from running five or fifteen automation channels in parallel. The operating constraint is editorial capacity, not platform rules. An operator who can edit, script-review, and quality-control five channels at the standard required to clear the 2024 inauthentic-content enforcement is fine. An operator who tries to run ten template channels with identical production rigs and recycled visual styles is the disqualifying case — the mass-production pattern triggers monetization review across the operator's whole portfolio, not just one channel. A practical heuristic: validate one channel inside one niche to first-5-sum ≥ 10,000 and views/day ≥ 1,000 before spinning up a second channel, and never run more channels than the operator can physically apply editorial judgment to per week.
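The validation heuristic can be written down as a gate. In this minimal sketch the two thresholds come from the paragraph above; the function name is hypothetical, and reading "first-5 sum" as the summed views of a channel's first five uploads and "views/day" as lifetime views divided by channel age in days are assumptions made for the example.

```python
from typing import Sequence

FIRST_FIVE_SUM_MIN = 10_000   # threshold from the heuristic above
VIEWS_PER_DAY_MIN = 1_000     # threshold from the heuristic above


def ready_for_second_channel(
    first_five_views: Sequence[int],
    lifetime_views: int,
    channel_age_days: int,
) -> bool:
    """Check whether the first channel clears both validation thresholds."""
    # Assumed reading: sum the views of the first five uploads.
    first_five_sum = sum(first_five_views[:5])
    # Guard against a zero-day-old channel to avoid dividing by zero.
    views_per_day = lifetime_views / max(channel_age_days, 1)
    return first_five_sum >= FIRST_FIVE_SUM_MIN and views_per_day >= VIEWS_PER_DAY_MIN


# Illustrative numbers, not a real channel.
print(ready_for_second_channel([3000, 2500, 2000, 1500, 1200], 45_000, 30))
```

The gate covers only the measurable half of the heuristic; the weekly editorial-capacity limit still has to be judged by the operator.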

How saturated are YouTube automation niches?

More saturated than equivalent non-automation niches, and saturating faster, because the cost-to-replicate a working automation format is much lower than for a face-on-camera or editorial-heavy format. The mechanism is direct: when an automation operator demonstrates a working format-topic combination, fifty other operators can stand up a copy production rig inside ninety days. That is not true of a face-on-camera channel whose differentiator is the host's persona. Saturation in automation lives at the format-topic intersection layer, not at the topic layer alone — the parent topic ("AI storytelling," "Reddit narration," "history shorts") may show thousands of active channels while specific sub-intersections inside each still produce breakouts. The corrective for an operator is the same diagnostic NicheBreakout applies to every cluster: check whether small channels under 45 days old at the specific format-topic intersection are clearing the three flagging gates right now, and discount the parent-topic saturation count as an indicator either way. Automation niches reward freshness more than they reward picking a name from a listicle.

What does "YouTube automation" actually mean in 2026?

Two distinct things, often conflated. "Agency-run YouTube automation" refers to the multi-channel business model — one operator or small team running several channels in parallel, each with its own niche, sharing scriptwriting templates, narration assets, editing pipelines, and thumbnail systems. "AI-tooled YouTube automation" refers to the production-stack choice — one channel using TTS, LLM-assisted scripting, AI imagery, and scheduled publishing to reduce per-upload time from a creator's working day to an hour or two. The two overlap: an agency-run automation business almost always uses AI tooling, and an AI-tooled solo operator sometimes expands into a small portfolio of channels. They are not the same thing, and listicles that treat them as identical end up recommending niches that fit one model but not the other. This page covers both interpretations and notes which niche-type fits which operator profile.

Methodology / About this analysis

NicheBreakout's research relies entirely on YouTube Data API v3 public fields: channel age, subscriber count, video count, view count, video metadata, video publish dates, video duration, and recent video performance. No YouTube Analytics API access (which is channel-owner-only), no YouTube AdSense data (which is channel-owner-only), no scraping of authenticated dashboards, and no synthesized narratives describing why specific automation channels work. The automation-cluster observations on this page are derived from the same scan that powers the main live library — no separate dataset, no inferred revenue metrics, no operator-side income claims.
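As an illustration of what "public fields only" permits, the sketch below derives channel age and lifetime views per day from one item of a Data API v3 `channels.list` response requested with `part=snippet,statistics`. It is not NicheBreakout's scanner: `channel_signals` and the sample item are hypothetical, and the network call is left out so the example runs offline. It relies on two documented response fields, `snippet.publishedAt` and `statistics.viewCount`, the latter returned as a string.

```python
from datetime import datetime, timezone


def parse_published_at(value: str) -> datetime:
    """Parse an API timestamp such as '2026-04-01T00:00:00Z' as UTC."""
    base = value.rstrip("Z").split(".")[0]  # drop any fractional seconds
    return datetime.strptime(base, "%Y-%m-%dT%H:%M:%S").replace(tzinfo=timezone.utc)


def channel_signals(item: dict, now: datetime) -> dict:
    """Derive public-data signals from one channels.list item."""
    published = parse_published_at(item["snippet"]["publishedAt"])
    age_days = max((now - published).days, 1)      # avoid dividing by zero
    views = int(item["statistics"]["viewCount"])   # the API returns counts as strings
    return {
        "age_days": age_days,
        "lifetime_views": views,
        "views_per_day": views / age_days,
    }


# Made-up item for illustration only; not a real channel.
sample_item = {
    "snippet": {"publishedAt": "2026-04-01T00:00:00Z"},
    "statistics": {"viewCount": "60000", "subscriberCount": "850", "videoCount": "12"},
}
signals = channel_signals(sample_item, now=datetime(2026, 5, 1, tzinfo=timezone.utc))
print(signals)
```

Passing `now` explicitly keeps the calculation reproducible, which matters when the same channel is rescanned on different days.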

Original-research artifacts in this article: the two-model taxonomy of "YouTube automation" in the opening section, the cost-to-replicate and stricter-breakout-check argument, the format-fit-over-topic argument for automation channels specifically, the deterministic flagging methodology, the live niche-cluster snapshot, and the revealed channel cards above the fold. The automation-friendly format clusters discussed reflect what we have scanned, not all of automation YouTube. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.

Related research

- Faceless YouTube niches: the parent pillar covering faceless production modes and which faceless formats are producing breakouts.

- YouTube niche finder: pillar covering niche research across faceless, automation-style, and creator-led channels.

- YouTube Shorts trends: pillar covering Shorts-first publishing, the dominant format for automation production rigs.

- YouTube channel research: pillar covering the broader channel-discovery category.

- YouTube outlier finder: pillar covering the breakout-discovery framing applied to any channel type.

- Most profitable YouTube niches: sister cluster covering why profitability is not third-party-readable for any niche, automation-style or otherwise.

- Faceless YouTube channel ideas with AI: sister cluster covering the AI-tooling angle for faceless production.

- How to start a faceless YouTube channel: sister cluster covering the procedural starting workflow.

- Faceless YouTube niches for beginners: sister cluster covering beginner-friendly faceless niches with lower production-stack demands.

- How to do YouTube niche research: process guide covering the full niche-research workflow.

- AI story channels: programmatic topic page tracking the AI-storytelling cluster.

- Reddit story channels: programmatic topic page tracking the Reddit-narration cluster.

- History shorts channels: programmatic topic page tracking the history-shorts cluster.

- Faceless storytelling channels: programmatic topic page tracking the broader storytelling cluster.

- Quiz channels: programmatic topic page tracking the quiz/trivia cluster.

The Friday digest sends three current breakout channels every week — automation-style and creator-led both — with format fingerprints and outbound YouTube links. Free, present-tense. The live library refreshes daily and surfaces channels currently inside the 30-day window. See pricing for the current tier; subscribe to the digest free.

Find the automation niches currently producing breakouts today

Every channel card outbound-links to YouTube so you can audit the public metadata yourself. No revenue claims, no RPM figures, no AdSense inference — public Data API only. The live under-30-day library is the paid workflow; the Friday digest is free.