
How to start a faceless YouTube channel: a seven-step sequence that starts with format, not tools

Starting a faceless YouTube channel is a sequence of seven ordered decisions, not a tool checklist. The order is load-bearing: format selection constrains topic selection, topic constrains validation, validation constrains the production rig, and the rig constrains the first three uploads. Most guides start with tools, which is the wrong end of the sequence. In our scans of 2,082 channels, the ones currently breaking out picked the format first, then the topic inside it, then the rig that fit, and only then opened a tool. This page walks the sequence from step one to the 30-day evaluation, anchored in public YouTube Data API v3 signals. No income claims, no specific tool endorsements, no "best niche for monetization" framing.

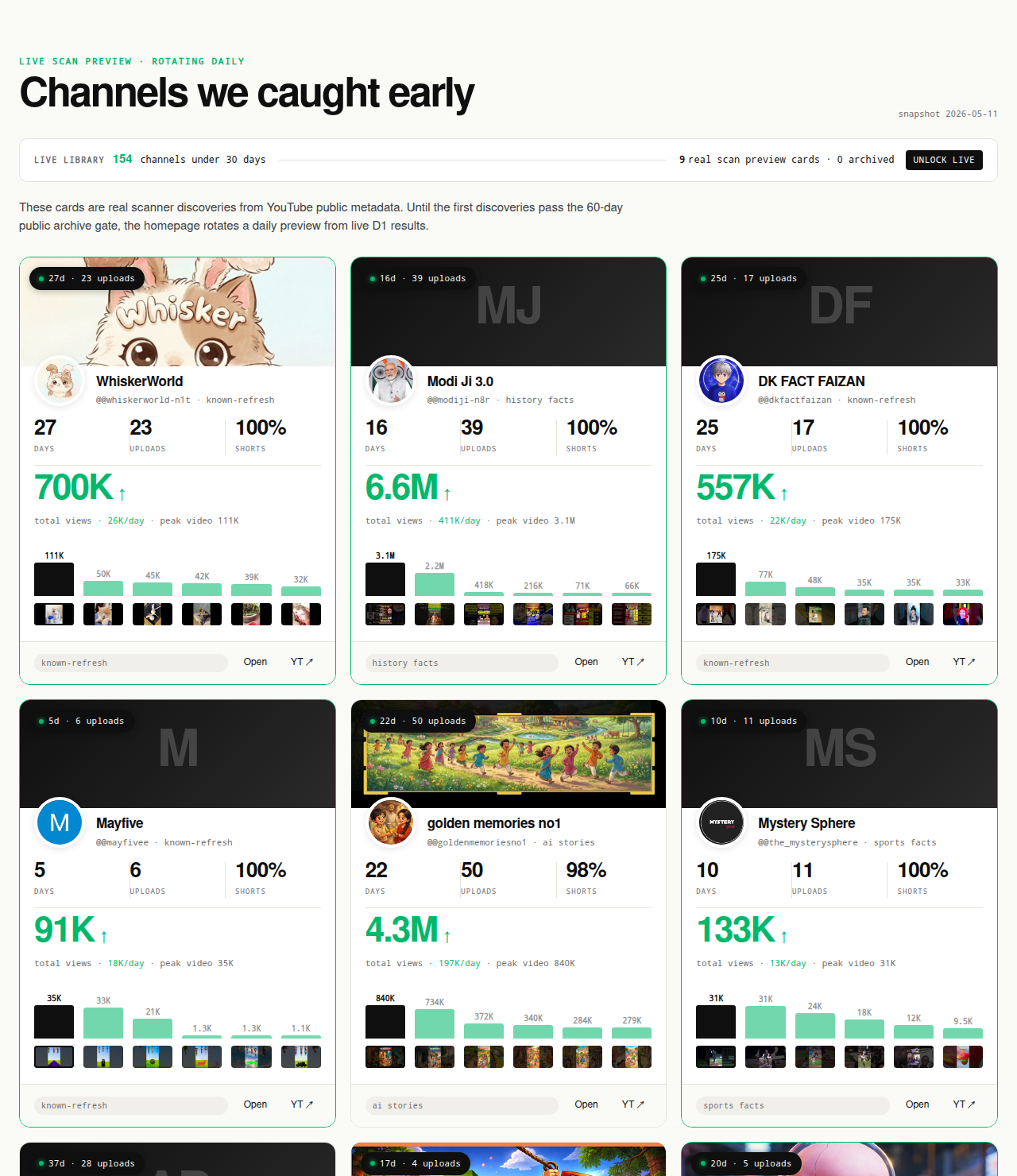

The Friday digest reveals three current breakout channels every week for free, faceless and face-on-camera both — concrete starting examples for operators running the sequence below. The live 30-day window — dozens of channels under 30 days old right now — is the paid workflow surface; the matured public archive opens as a second free surface in summer 2026 once the first cohort ages out of the live window.


Why most "how to start a faceless YouTube channel" guides put the steps in the wrong order

Open the first page of search results for the head query and the structural pattern repeats. Pick a niche from a list, pick an AI tool, pick a TTS voice, write your first script, design your thumbnail, upload. Niche selection is usually the first item on the list but it is functionally an afterthought — a single paragraph naming a few categories, no validation step, no public-data check, no instruction to verify that the named categories are still lifting new entrants. The implicit assumption is that the niche is interchangeable and the tool stack is where the real channel-building happens. The implicit assumption is wrong in a falsifiable way.

YouTube's recommender does not see the operator's tool stack. It sees the published video and ranks it against a cohort defined by format and topic. The variables that determine whether the recommender lifts a new channel are upstream of every tool decision: which format-topic intersection the channel is publishing into, whether the recommender is currently audience-finding inside that intersection, and whether the first few uploads share a recognizable cohort signal. None of those variables are improved by picking a specific TTS engine, a specific image-generation model, or a specific editing tool. They are determined by the format-and-topic decision, which the standard guide format treats as interchangeable.

The corollary is that the order in which a new operator makes decisions matters more than the individual decisions. Picking a tool first locks the operator into a production rig before they know what they are producing; the rig then constrains the format, which constrains the topic, which leaves the format-topic intersection up to whatever fits the rig — typically a saturated default. Picking the format first lets the operator validate the intersection before any production capital is committed, then build the rig that matches the format, then pick the tools that fit the rig. The dependency graph runs one direction, and the standard guides run it backwards.

This page reverses the order. The seven steps below start with format selection at step one and end with the 30-day evaluation at step seven. Tools enter the workflow at step three, after the format and topic are locked. The same reordering shows up in the parent faceless YouTube niches pillar (which owns the production-mode taxonomy) and in the cross-pillar YouTube niche validation checklist (which is the binary scorecard version of the validation step). This guide is the procedural workflow that ties those reference pages into a startable sequence.

The seven-step sequence at a glance

Before the detail, the shape of the sequence. Each step has a finish line that must clear before the next step starts; the steps are ordered, not parallel.

1. Pick the format before the topic. Choose from the four production-mode clusters (TTS plus stock-or-AI imagery, AI-narrated plus AI-imagery, screen-recorded explainers, voiceover plus B-roll). The format decision is the rig decision; commit before anything else.

2. Validate the format-topic intersection. Confirm that three small channels under 90 days old are currently breaking out inside the format-topic intersection you are considering. Use the deterministic filter from the live cohort; if you cannot name three current breakouts, the intersection is not validated.

3. Set up the production pipeline for one format only. Build the rig that produces the format you committed to in step one. One rig, one format. Resist the temptation to make the rig flexible across multiple formats.

4. Lock the channel-level format signal. Banner, channel art, recurring TTS voice (or recurring human voice), thumbnail template, upload cadence, and naming convention all match the recommender-readable patterns of the small breakouts you validated in step two.

5. Configure YouTube settings for monetization eligibility and AI-content disclosure. Channel-level content type, default upload settings, the altered-or-synthetic-content disclosure toggle for the relevant videos, and the original-content compliance posture all set before the first upload.

6. Publish the first three uploads consistently in the same format. Same format, same approximate length, same visual template, same TTS or voice. No drift across the first three uploads. The recommender's first read of the channel happens here; the read needs an unambiguous format signal.

7. Measure against the deterministic gates after 30 days. Compute the channel against channel age ≤ 45 days, first-5 sum ≥ 10,000 views, and lifetime views per day ≥ 1,000. The gates are the same gates the live library applies to every channel it surfaces.

Each step has its own section below. The sections close with the explicit finish line for the step. Do not move forward until the finish line clears.

Step 1: Pick the format BEFORE the topic

The format decision is the rig decision. Faceless production splits cleanly into four production-mode clusters; each one determines a different rig, a different per-upload cost curve, a different cadence ceiling, and a different recommender cohort. Picking the format first is the decision that locks every downstream constraint in the sequence.

TTS plus stock-or-AI imagery. The lowest-cost rig. A neural TTS voice reads a script over Pexels or Pixabay footage, or over AI-generated stills assembled into a scrolling visual track. Cadence ceiling is high; production cost per upload is low; the format is well-suited to Shorts-first publishing. The format cluster is also the most copy-dense, because cost-to-replicate is low. Channels currently breaking out inside this cluster are differentiating on script editorial choice, not on production polish.

AI-narrated plus AI-imagery. Synthetic narration over AI-generated imagery, end-to-end. The rig is higher-cost per upload because the imagery generation step is non-trivial to direct toward visual consistency across a series. Cadence ceiling is medium-to-high. The format triggers YouTube's altered-or-synthetic-content disclosure rule when the imagery depicts real people, real events, or could be mistaken for documentary footage; in fictional contexts the rule does not trigger. The cluster's most reliable breakouts in our scans are story-format channels where the AI imagery is clearly stylized and the narrative is original.

Screen-recorded explainers. The screen is the subject. Tutorials, software walkthroughs, gameplay analysis, financial chart breakdowns, prompt-engineering demonstrations. The rig is the lowest visual-variety option of the four — every video looks structurally similar because the capture method is the same — but the editorial skill floor is the highest, because the explanation has to be clear inside seconds. Cadence ceiling depends on the topic; tutorial-style channels publish at lower cadence than the TTS clusters but reach higher per-viewer monetization.

Voiceover plus B-roll. The most editorial of the four. Human-recorded narration over curated footage, no AI synthesis, the closest faceless mode to traditional documentary editing. Cadence ceiling is low (a single 10-minute upload can take several days of editorial work). Per-upload cost is highest of the four. The format is the slowest to scale and the hardest to template, which is also why it is the cluster where editorial taste is the differentiator and copy waves are slowest to arrive.

Pick one. Pick it for a specific reason — operator constraint, format cadence you can sustain, editorial bandwidth you actually have. Do not pick two thinking the rig will be flexible; one rig produces one format, and the operator who tries to run two rigs at the start typically produces inconsistent output in both.

Finish line for step 1: you can write the name of your committed format in a single sentence ("TTS plus AI imagery, vertical Shorts under 60 seconds, four uploads per week") and you have a reason for that choice that survives a follow-up question.

Step 2: Validate the format-topic intersection has current small-channel breakouts

The validation step is what separates a procedural guide from a brainstorming exercise. Before any production capital commits, the operator confirms that the format-topic intersection they have chosen is currently lifting new entrants — not that it lifted entrants two years ago, not that the parent topic is "hot" in listicle copy, but that small channels under 90 days old running the same format inside the same topic are currently clearing the three deterministic gates.

The intersection is the unit of validation, not the topic alone. "History" as a topic spans 8-minute documentary uploads, 45-second vertical TTS Shorts, AI-narrated cinematic montages, and face-on-camera lecture formats. Each is a different cohort to the recommender. The same logic applies to AI storytelling, true crime, finance, scary stories, quiz, gaming, and every other faceless-eligible topic. The validation has to resolve at the intersection layer, not at the topic layer.

The deterministic filter NicheBreakout applies to every channel in the live library is the same filter the validation step uses:

- Channel age: detected within 45 days of channel creation

- First-5 upload views: combined views across the first five public uploads ≥ 10,000

- Views per day: lifetime channel views ÷ channel age ≥ 1,000

- Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000
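The three hard gates and the velocity bonus listed above reduce to a few comparisons. The following is a minimal sketch, not NicheBreakout's actual implementation: the `ChannelSnapshot` type and the function names are hypothetical, and it assumes channel age is measured in whole days at the time of the check.

```python
from dataclasses import dataclass


@dataclass
class ChannelSnapshot:
    """Hypothetical container for the public fields the gates need."""
    age_days: int        # channel age in days at the time of the check (assumption)
    first5_views: int    # combined views on the first five public uploads
    lifetime_views: int  # total channel views


def views_per_day(ch: ChannelSnapshot) -> float:
    # Guard against dividing by zero for a channel created today.
    return ch.lifetime_views / max(ch.age_days, 1)


def hard_gates(ch: ChannelSnapshot) -> dict[str, bool]:
    """Evaluate the three hard gates; every value must be True to clear."""
    return {
        "channel_age": ch.age_days <= 45,
        "first5_views": ch.first5_views >= 10_000,
        "views_per_day": views_per_day(ch) >= 1_000,
    }


def velocity_bonus(ch: ChannelSnapshot) -> bool:
    """Early-traction bonus: any one of the three conditions is enough."""
    return (
        ch.age_days <= 14
        or ch.first5_views >= 50_000
        or views_per_day(ch) >= 5_000
    )
```

A channel clears the filter only when `all(hard_gates(ch).values())` is true; the bonus is evaluated separately and does not substitute for a missed gate.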

Operationalize the validation in twenty minutes. Open the YouTube site and the relevant programmatic topic page on NicheBreakout — AI story channels for AI-narrated storytelling, Reddit story channels for Reddit-narration formats, history shorts channels for history-format Shorts, faceless storytelling channels for the broader storytelling cluster, quiz channels for trivia formats. Find three channels in the relevant page that are under 90 days old, share the format you committed to in step one, and clear all three hard gates. Verify the gates by reading the public fields on the About tab (channel age, total views) and the Videos tab (upload count, first five upload view sums). The full binary scorecard is on the cross-pillar YouTube niche validation checklist; this step is the equivalent procedural pass.

If three current small breakouts cannot be named at the format-topic intersection, the intersection is not validated. The corrective is not to spend more time researching the same intersection; it is to narrow the topic inside the same format (history shorts under 60 seconds with cinematic visuals, instead of just "history shorts") and re-run the validation, or to move to a different topic that admits the format you committed to.

Finish line for step 2: you can name three channels under 90 days old that share your committed format inside your intended topic, each clearing all three hard gates (channel age ≤ 45 days, first-5 sum ≥ 10,000 views, lifetime views per day ≥ 1,000), with at least one of the three under 30 days old.
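The finish line can be written as a single check. This is an illustrative sketch only: the dictionary keys are hypothetical, and `clears_hard_gates` is assumed to have been worked out beforehand from the three hard gates.

```python
def intersection_validated(channels: list[dict]) -> bool:
    """True when at least three channels under 90 days old clear the hard
    gates and at least one of those is under 30 days old."""
    qualifying = [
        c for c in channels
        if c["age_days"] < 90 and c["clears_hard_gates"]
    ]
    has_three = len(qualifying) >= 3
    has_fresh_one = any(c["age_days"] < 30 for c in qualifying)
    return has_three and has_fresh_one
```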

Step 3: Set up the production pipeline for ONE format

The rig is downstream of the format. With the format locked at step one and the format-topic intersection validated at step two, step three builds the production pipeline that produces uploads inside that specific format. The non-negotiable constraint here is single-format commitment: the rig is built for one format, not several. An operator who builds a flexible multi-format rig at the start is building a rig that produces ambiguous output and a recommender signal that the cohort cannot read.

The pipeline shape is the same across all four format clusters: script, narration, visual layer, edit, thumbnail, upload. The decisions inside each step differ by format. For a TTS-plus-imagery rig, the script length is 130 to 160 spoken words per 60-second Short and the thumbnail iteration is the variable that moves click-through rate most. For a screen-recorded explainer, the script is a structured outline rather than a word-for-word document, and the thumbnail is captured from the recording. For voiceover plus B-roll, the script is fully written before narration is recorded and the visual layer is curated rather than generated.
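For the TTS-plus-imagery case, the 130-to-160-word target is easy to check before narration is generated. A minimal sketch, assuming whitespace-separated tokens are a good enough proxy for spoken words (the function names are hypothetical):

```python
def spoken_word_count(script: str) -> int:
    """Count whitespace-separated tokens as a proxy for spoken words."""
    return len(script.split())


def fits_60_second_short(script: str, min_words: int = 130, max_words: int = 160) -> bool:
    """True when the script lands inside the 130-160 word band for a 60-second Short."""
    return min_words <= spoken_word_count(script) <= max_words
```

Numbers, abbreviations, and symbols read longer than they look on the page, so the count is a first filter rather than a timing guarantee.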

This page does not endorse specific tools by brand. The tool market shifts quarterly inside every category, and a tool list dated 2024 is partially obsolete by 2026. The category structure is the durable layer; the tool selection inside each category is the quarterly-churn layer. The sister cluster faceless YouTube channel ideas with AI covers the five tooling categories (TTS, AI imagery, AI video, AI scripting, AI editing) in detail for AI-faceless rigs specifically.

Whichever tools the operator picks inside the category structure, the rig the operator commits to should be testable end-to-end before the first publish. Produce a sample upload — script, narration, visual layer, edit, thumbnail — and run it through the same pipeline the channel will use for the next thirty uploads. If the sample takes more time than the cadence allows, the rig is wrong for the cadence; either the rig needs simplification or the cadence needs adjustment. Discover the constraint before publish, not after upload five.

The single-format constraint also applies to the operator's editorial capacity. A new faceless operator running a Shorts-first rig at four uploads per week is committing to four script-write-narrate-edit-thumbnail cycles per week. That is a lot of weekly hours for a solo operator; build the rig with explicit time blocks rather than aspirational scheduling. The rig that fits in actual operator hours is the rig that survives to step seven.
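The hours check is simple arithmetic and worth doing explicitly. In this sketch the function names and the example figures are hypothetical; the measured time per upload would come from the sample run described above.

```python
def weekly_hours_needed(hours_per_upload: float, uploads_per_week: int) -> float:
    """Total production hours the planned cadence demands each week."""
    return hours_per_upload * uploads_per_week


def rig_fits(hours_per_upload: float, uploads_per_week: int,
             available_hours_per_week: float) -> bool:
    """True when the cadence fits inside the hours the operator actually has."""
    return weekly_hours_needed(hours_per_upload, uploads_per_week) <= available_hours_per_week
```

For example, a sample upload that took 3.5 hours at four uploads per week needs 14 hours; with 12 hours available, either the rig gets simpler or the cadence drops to three.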

Finish line for step 3: the production pipeline is built end-to-end, the operator has produced one sample video that ran through the full pipeline, and the time per upload at the planned cadence fits inside actual available operator hours.

Step 4: Lock the channel-level format signal

The recommender reads the channel as a whole, not just individual uploads. Channel art, channel description, recurring narration voice, thumbnail template, title formula, and upload cadence are the channel-level signal that the recommender uses to classify the channel into a format cohort before it has enough watch-time data to evaluate individual uploads on their own merits. A channel whose channel-level signal is ambiguous starts behind a channel whose channel-level signal is unambiguous, regardless of upload quality.

Read the channel-level signal off the small breakouts you validated in step two. What does the channel banner look like — is there a recurring visual motif, a format-indicating element (a Shorts indicator, an aesthetic that signals the topic), a name format? What does the thumbnail template look like — same aspect ratio, same overlay pattern, same color palette, same typography? What is the upload cadence — daily, every other day, three times per week, weekly? What is the title formula — question, list, declarative statement? Each of these is recommender-readable evidence of channel-level format clarity.

Match the channel-level signal to the patterns of the small breakouts, not to the patterns of established mega-channels. A 500,000-subscriber channel running a polished branded thumbnail template is using design capacity that the small breakouts did not have when they started; the small breakouts' channel-level signal is what the recommender lifted, and that is the recoverable signal for a new operator entering the same intersection.

Voice consistency deserves a separate note because it is the most common faceless-channel-level signal violation in our scans. A faceless channel running three different TTS voices across its first five uploads is publishing as three different channels from the recommender's point of view; the audience signal that drives subscriber-to-view conversion does not accumulate. Commit to one TTS voice per channel, or at most one voice per recurring format inside a channel, and treat voice selection as a permanent channel decision rather than a per-upload variable.

Upload cadence is also a channel-level signal. The breakouts that surface at high publish cadence (four-plus uploads per week) teach the recommender a high-frequency audience profile; the breakouts that surface at lower cadence teach a different one. Match the cadence to what the validated breakouts are running and to what the rig from step three can actually sustain for 30 uploads.

Finish line for step 4: the channel's banner, description, recurring voice, thumbnail template, title formula, and stated upload cadence all match the recommender-readable patterns of the small breakouts you validated in step two, and the cadence is one the operator can sustain for at least the first 30 uploads.

Step 5: Configure YouTube settings for monetization eligibility and AI-content disclosure

YouTube's platform-side compliance posture is configured per channel and per upload. Two surfaces matter at startup. The original-content policy governs whether the channel can apply for monetization at all (the Partner Program eligibility threshold gates revenue, but the original-content rule gates eligibility on top of that). The altered-or-synthetic-content disclosure rule governs which uploads need the Creator Studio disclosure label.

The original-content rule is documented in YouTube's reused-content policy (YouTube Help: Reused content policy). The rule disallows uploading content that "isn't yours" — clips, content from social media, or songs — without significant original commentary or value-add. For faceless creators specifically, the rule applies to channels that re-upload other creators' material with a TTS voiceover added, channels that stitch together clips from other YouTube videos without transformative editorial work, and channels that mass-produce templated uploads with no original creative work per video. Faceless channels with original scripts, original narration choices, and edited visuals are inside policy; the policy targets the lazy-template pattern, not the faceless production mode.

The altered-or-synthetic-content disclosure rule is documented at the YouTube Help Center (YouTube Help: Disclosing use of altered or synthetic content). The rule applies to content that could mislead a viewer into thinking the video depicts a real person, place, or event when it does not. Specifically: AI imagery depicting a real person, AI voice cloning of an identifiable person, AI imagery of real events presented as documentary footage. Generic AI imagery in a clearly fictional story does not trigger the rule. Generic synthetic narration in a generic script does not trigger the rule. The Creator Studio toggle for "altered or synthetic content" is the disclosure mechanism; the toggle produces a label under the video.

The startup configuration is one-time per channel for the channel-level settings (channel content type, default category, default language, default monetization preferences inside the YPP application surface) and per-upload for the disclosure toggle. The cost of disclosure on uploads that need it is minimal; the cost of failing to disclose can include content removal or monetization restrictions. The cost-benefit is unambiguous, and faceless creators unsure whether a specific upload triggers the disclosure rule should disclose by default.
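The disclose-by-default posture can be expressed as a small decision rule. This is an illustrative sketch of the reasoning in this section, not YouTube's policy logic: the three questions paraphrase the triggers named above, and `None` stands for "not sure".

```python
from typing import Optional


def needs_disclosure(
    depicts_real_person: Optional[bool],
    clones_identifiable_voice: Optional[bool],
    real_event_shown_as_footage: Optional[bool],
) -> bool:
    """Return True when the upload should carry the disclosure label.

    Each argument is True, False, or None (unsure). Any True triggers
    disclosure, and any unresolved answer also resolves to disclosure.
    """
    answers = (depicts_real_person, clones_identifiable_voice, real_event_shown_as_footage)
    if any(a is True for a in answers):
        return True
    if any(a is None for a in answers):
        return True  # when uncertain, disclose
    return False
```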

The Partner Program eligibility thresholds (1,000 subscribers and 4,000 watch hours in 12 months, or 1,000 subscribers and 10 million Shorts views in 90 days) apply identically to faceless and face-on-camera channels. The eligibility application happens after the channel clears the thresholds, not at channel creation; configuring monetization preferences at startup is mostly defaults and disclosures rather than active applications.
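The two threshold paths can be written as one check. A minimal sketch with a hypothetical function name; it covers only the numeric thresholds quoted above, not the original-content review that sits on top of them.

```python
def ypp_thresholds_met(subscribers: int, watch_hours_12mo: float,
                       shorts_views_90d: int) -> bool:
    """True when the subscriber floor and either activity path are met."""
    if subscribers < 1_000:
        return False
    long_form_path = watch_hours_12mo >= 4_000
    shorts_path = shorts_views_90d >= 10_000_000
    return long_form_path or shorts_path
```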

Finish line for step 5: the channel-level content type and default settings are configured, the operator understands which uploads in the planned series will need the altered-or-synthetic-content disclosure toggle and has a default plan to enable it where it applies, and the operator can describe in one sentence why the production pipeline produces original work per upload.

Step 6: Publish the first three uploads consistently

The first three uploads are the recommender's first read of the channel. The format-clarity bonus in NicheBreakout's score formula reflects an observable pattern in our scans: channels whose first three uploads share a recognizable format accumulate first-five-video traction faster than channels whose first three uploads drift across formats. The recommender needs an unambiguous signal to classify the channel into a cohort; ambiguity at the start delays classification and slows audience-finding.

"Consistently" is operationalized as same format, same approximate length, same visual template, same narration voice, same thumbnail style across the first three uploads. Not identical content — varying topic inside the format is good — but identical format. A new TTS-Shorts channel publishing three TTS Shorts in three different topics inside the same niche is signaling a clean format cohort. The same channel publishing one TTS Short, one long-form documentary, and one screen-recorded explainer is signaling a mixed-format channel even if all three uploads are inside the same topic.

The most common faceless mistake in our scans at this step is exactly this drift. An operator publishes the first upload in the format they committed to, sees the upload land under expectations after a few days, and on impulse publishes a different-format upload to "see if something else works." The drift breaks the cohort signal and converts the channel from a single-format experiment into an indefinite test. The corrective is to lock the first three uploads as a single experiment — same format across all three — and hold the format until at least the third upload is published.

Cadence inside the first three uploads should also match the cadence committed in step four. A channel that announces a four-uploads-per-week cadence and then publishes the first upload, waits two weeks for an arbitrary reason, and then publishes the second upload is signaling a different cadence than the one declared. The recommender does not read the announcement; it reads the actual upload timestamps.

Thumbnails on the first three uploads carry disproportionate weight because they are the first impressions any audience the recommender surfaces the channel to will form. Iterate the thumbnails — test variations on the same upload if the platform allows, or treat each of the three thumbnails as a deliberate sample inside a template — but iterate inside the template rather than across templates. A consistent thumbnail template with varying content is a clean signal; three thumbnails in three different visual languages is a noisy one.

The cluster-mix snapshot below shows which clusters are currently producing breakouts; new operators publishing their first three uploads should match the format-cluster patterns visible in this snapshot for the cluster they validated against in step two:

Finish line for step 6: three uploads published, all in the same format, at the cadence committed in step four, with thumbnails inside a single recognizable template and titles following a single recognizable formula. No mid-sequence format change, no cadence slippage beyond a 48-hour tolerance.
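The finish line can be checked mechanically from upload metadata. The sketch below is one possible reading, with hypothetical field names: it treats "cadence slippage" as the amount by which the gap between consecutive uploads exceeds the gap implied by the committed cadence, and it does not check video length because the guide gives no numeric tolerance for it.

```python
from datetime import datetime, timedelta


def first_three_consistent(uploads: list[dict], uploads_per_week: int,
                           tolerance: timedelta = timedelta(hours=48)) -> bool:
    """Check format, voice, template, and cadence across the first three uploads."""
    first = sorted(uploads, key=lambda u: u["published_at"])[:3]
    if len(first) < 3:
        return False
    # Each signal must take exactly one value across the three uploads.
    for key in ("format", "voice", "thumbnail_template"):
        if len({u[key] for u in first}) != 1:
            return False
    # Gap implied by the committed cadence, e.g. 4 per week -> 42 hours.
    expected_gap = timedelta(days=7) / uploads_per_week
    for earlier, later in zip(first, first[1:]):
        slippage = (later["published_at"] - earlier["published_at"]) - expected_gap
        if slippage > tolerance:
            return False
    return True
```

Publishing faster than the committed cadence passes this check; only late uploads count as slippage under this interpretation.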

Step 7: Measure against the deterministic gates after 30 days

The 30-day mark is the first verdict. With three uploads published in the same format at the committed cadence, the channel has presented a coherent signal to the recommender for approximately a month. The verdict is run against the same three hard gates the live library applies to every channel it surfaces — the public-data-readable proxies for early-traction quality:

- Channel age: detected within 45 days of channel creation

- First-5 upload views: combined views across the first five public uploads ≥ 10,000

- Views per day: lifetime channel views ÷ channel age ≥ 1,000

- Format clarity (bonus): score weights channels with a clear Shorts-first or long-form-first ratio above mixed-format channels

- Early-traction velocity (bonus): score boost when channel age ≤ 14 days, first-5 sum ≥ 50,000, or views/day ≥ 5,000

Run each gate manually on the new channel. Channel age comes from the About tab. First-5 sum is the sum of view counts on the first five published videos; if the channel has not yet reached five uploads at the 30-day mark, extend the evaluation to whichever upload count actually exists, but the threshold scales: three uploads sharing 6,000 views is the rough equivalent of five uploads sharing 10,000. Lifetime views per day is the total channel view count divided by channel age in days.
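The scaling in that example is linear: 10,000 × 3 ÷ 5 = 6,000. A minimal sketch that applies the same proportion to other upload counts, which is an extrapolation from the single example given (the function name is hypothetical):

```python
def scaled_first_n_threshold(upload_count: int, full_threshold: int = 10_000,
                             full_count: int = 5) -> int:
    """Scale the first-5 view threshold down for channels with fewer uploads."""
    # Channels with five or more uploads use the full threshold unchanged.
    n = min(upload_count, full_count)
    return full_threshold * n // full_count
```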

Three verdicts are possible at the 30-day mark:

- All three gates cleared. The format-topic intersection is producing public-data signal that matches the small breakouts validated in step two; continue the cadence for at least the next 30 uploads and re-run the evaluation at the 60-day mark.

- One or two gates cleared, the others narrowly missed. The format-topic intersection is producing signal but the cohort fit is not yet unambiguous; iterate on thumbnails, hook structure, and title formula inside the same format for the next 10 uploads, then re-evaluate.

- All three gates missed by a wide margin. The format-topic intersection is not producing the public-data signal that small breakouts in the same cohort produced at the same channel age; the corrective is either to narrow the topic inside the same format and re-validate, or to retire the channel and restart the sequence from step one with a different format-topic intersection.
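A rough way to map gate results onto these verdicts is to count how many gates cleared. The sketch below is a simplification: the guide does not quantify "narrowly missed" or "wide margin", so margins are ignored here and any partial result maps to the iterate path. The verdict labels are hypothetical.

```python
def thirty_day_verdict(gates: dict[str, bool]) -> str:
    """Map the three gate results to a next-step label by counting cleared gates."""
    cleared = sum(gates.values())
    if cleared == len(gates):
        return "continue"           # hold the cadence, re-evaluate at 60 days
    if cleared == 0:
        return "narrow_or_restart"  # narrow the topic or restart from step one
    return "iterate"                # adjust thumbnails, hooks, titles for 10 uploads
```

An operator who misses all three gates by only a small margin may reasonably treat the result as "iterate"; that judgment sits outside this sketch.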

The average first-five-video views across populated grade tiers inside the current discoveries cohort gives a comparison reference for new operators reading their own first-five sum against the public-data distribution (grades with no current members are suppressed until they fill in):

The verdict is a process input, not a final judgment. Channels that miss the gates at 30 days have not permanently failed; they are signaling that the current format-topic intersection or the current execution is not producing recommender lift, and the corrective path is iteration inside the same format or a pivot to a different intersection. Channels that clear the gates at 30 days have not permanently succeeded either; they are entering the second window, where the cadence and quality discipline of steps three and four has to hold for at least another 30 days for the early-traction signal to compound into progress toward Partner Program eligibility. The 30-day evaluation is the start of the iteration loop, not the end of the startup sequence.

Finish line for step 7: the three gates have been computed against the new channel, the verdict has been written down with the specific numbers, and a concrete corrective or continuation path for the next 30 uploads has been decided.

What we deliberately don't include in this guide

This guide does not include income projections, monthly-revenue claims, or any "this faceless niche pays $N RPM" framing. Per-channel revenue, RPM, and CPM live behind the YouTube Analytics API and YouTube AdSense, both of which are channel-owner-only endpoints. A third-party guide cannot read AdSense reporting for any channel the author does not own, which is the report that would actually answer the income question. Guides that name an AI tool, name a niche, and quote a monthly income figure for the combination are stacking three claims on top of each other, none of which the public data supports. The full boundary statement lives on the most profitable YouTube niches analysis; this guide holds itself to the same standard.

This guide does not endorse specific AI tools, TTS engines, image-generation models, or editing platforms by brand. The tool market inside each category — TTS, AI imagery, AI video, AI scripting, AI editing — shifts quarterly, and any specific tool list dated to a particular calendar year is partially obsolete within two quarters. The category structure (which categories exist, what each category does, what tradeoffs each category has) is the durable layer worth covering; the specific brand inside each category is the quarterly-churn layer that re-publishing this page once a year cannot keep current with.

This guide does not claim that any specific faceless niche is "best for monetization" or "most profitable for beginners." The diagnostic that survives the saturation question is whether small channels are currently breaking out at the format-topic intersection, not whether the topic was top of a 2024 listicle. The closest defensible substitute for a "best niche" claim is the cluster-density observation from the public-data scan — which clusters are producing the most current small-channel breakouts — and that observation is a present-tense snapshot that shifts week to week, not a stable ranking.

This guide does not include a list of "ten faceless YouTube ideas to try." The parent faceless YouTube niches pillar is the listicle layer; the cross-pillar YouTube Shorts ideas without showing face page is the Shorts-specific listicle. This guide is the procedural workflow that operates on whichever niche the operator has selected from those listicles or from their own research. Restating the listicles inside this guide would duplicate the parent pages without adding the procedural specificity that justifies a separate cluster page.

This guide does not claim to predict whether any specific new channel will succeed. Validation under public-data signals confirms that the format-topic intersection is currently producing breakouts; it does not guarantee that the operator's specific channel will be one of those breakouts. Execution variables — script craft, thumbnail iteration, cadence discipline, channel-level format consistency — still determine the outcome. The seven-step sequence is a precondition for publishing into a niche the recommender is lifting; it is not a prediction that any one channel will be lifted.

Common mistakes new faceless creators make

Five mistakes recur in our scans of new faceless channels failing to clear the 30-day gates. The pattern is consistent enough across operators that the mistakes are diagnostic — a new channel that hits one of them is more likely to fail the gates than a new channel that avoids them.

Picking the topic before the format. The operator decides the channel will be "about history" or "about finance" or "about scary stories" before deciding which production rig will run it. The topic decision then leaves the format up for grabs, which usually means the format gets chosen by whichever rig the operator can stand up first. The recommender ranks at the format-topic intersection layer, so a topic-first decision produces an undirected format choice and a noisy cohort signal. The corrective is the step-one ordering: format commitment first, topic inside the format second, and the rig built to fit the format-topic pair.

Using inconsistent TTS voices across uploads. A faceless operator with access to a voice library of fifty distinct TTS voices rotates voices upload-to-upload, sometimes within the same series. The recommender and the audience read voice consistency as a channel signature, the same way a face-on-camera channel's host is a channel signature. Switching voices breaks the recurring-audience signal. The corrective is to commit to one voice per channel and treat voice selection as a permanent channel decision rather than a per-upload variable.

Copying a viral channel's topic without copying its format. The operator reads a case study about a viral history channel running 45-second AI-narrated Shorts, opens a "history channel," and produces 12-minute long-form documentaries with human voiceover instead. The topic matches; the format does not. The recommender treats the new channel as a different cohort than the viral reference, the breakout evidence does not apply, and the operator concludes that "history doesn't work anymore" when the actual problem is that history-shorts and history-long-form are different cohorts and the evidence was only about one of them. The corrective is to copy the production mode, video length, publish cadence, and visual style of the reference channel — not the topic list alone.

Ignoring the AI-disclosure setting on uploads that require it. A faceless operator running AI-narrated content with AI-generated imagery skips the altered-or-synthetic-content disclosure toggle in YouTube Studio because it adds one more click to the upload form. The disclosure has minimal effect on watch time. Failing to disclose when the upload's content triggers the rule can result in content removal or monetization restrictions; the disclosure is cheap and the omission is expensive. The corrective is to disclose by default whenever the upload contains AI imagery depicting real people, AI voice cloning of identifiable people, or AI-generated content that could be mistaken for documentary footage. When uncertain, disclose.

Publishing too few uploads before evaluating. The operator publishes one upload, watches it for three days, decides the channel is not working, and either pivots format or abandons the channel entirely. One upload does not produce enough recommender training signal to support any verdict either way. The first verdict is at three uploads (format-clarity verification) and the second is at 30 days (deterministic-gate evaluation). Operators who collapse the evaluation window to one upload are reading noise as signal. The corrective is the step-seven discipline: do not evaluate against the deterministic gates until the 30-day mark with three or more uploads published in the same format.

FAQ

How do I start a faceless YouTube channel?

Run a seven-step sequence in this order: pick the format before the topic, validate that the format-topic intersection has current small-channel breakouts under public Data API v3 signals, set up the production pipeline for one format only, lock the channel-level format signal (banner, voice, thumbnail template, upload cadence), configure YouTube's content and AI-disclosure settings, publish the first three uploads in the same format without drift, and measure the channel against the three hard gates (channel age ≤ 45 days, first-5 sum ≥ 10,000 views, lifetime views per day ≥ 1,000) at thirty days. The order is load-bearing because each step constrains the next; reversing format and tool selection is the most common mistake in our scans, and it shows up as a mixed-format channel the recommender cannot classify.
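The three gates in that answer reduce to a small boolean check. The sketch below is a minimal illustration, not the scanner's actual implementation. The `ChannelSnapshot` type, its field names, and the reading of "lifetime views per day" as total views divided by channel age in days are assumptions; only the three thresholds come from the text above, and the sample numbers are made up.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ChannelSnapshot:
    # Hypothetical container; field names are illustrative, not an API schema.
    created_on: date
    first_five_views: list[int]  # view counts of the first five uploads
    lifetime_views: int


def evaluate_gates(ch: ChannelSnapshot, today: date) -> dict[str, bool]:
    """Check the three gates named in the guide against one snapshot."""
    age_days = max((today - ch.created_on).days, 1)  # avoid dividing by zero
    return {
        "age_ok": age_days <= 45,
        "first_five_ok": sum(ch.first_five_views[:5]) >= 10_000,
        # Assumed definition: lifetime views divided by channel age in days.
        "velocity_ok": ch.lifetime_views / age_days >= 1_000,
    }


snapshot = ChannelSnapshot(
    created_on=date(2026, 4, 1),
    first_five_views=[4_200, 3_100, 2_900, 1_500, 800],  # made-up numbers
    lifetime_views=36_000,
)
gates = evaluate_gates(snapshot, today=date(2026, 5, 1))
print(gates, "-> all gates cleared:", all(gates.values()))
```

Returning the gates as a named dictionary rather than a single pass/fail value keeps it visible which gate a channel missed, which is the information an operator needs at the 30-day mark.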

How much does it cost to start a faceless YouTube channel?

Per-upload production cost in 2026 spans a wide range depending on the format you commit to. A TTS-plus-stock-footage Shorts rig can produce uploads at low per-clip cost using free-tier voice synthesis, free stock libraries, and free editing software. A voiceover-plus-B-roll long-form rig costs more per upload because the editorial pass takes longer and licensed footage carries a per-clip license fee. An AI-imagery-driven narration rig sits between the two on per-upload cost but adds a monthly subscription floor for the imagery and TTS services. None of the three is the cheapest in every scenario. The number to optimize is total cost per upload at a cadence you can sustain across the first thirty uploads, not the cost to publish the first upload or the lowest theoretical entry cost.
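The per-upload comparison is simple arithmetic once fixed and marginal costs are separated. The sketch below assumes a two-part cost model (a monthly subscription floor plus a marginal cost per upload, with partial months billed in full). Every figure in it is a placeholder chosen for illustration, not a measured price for any rig or tool.

```python
import math


def cost_per_upload(monthly_fixed: float, per_upload: float,
                    uploads: int, uploads_per_month: float) -> float:
    """Average total cost per upload over a run of `uploads` videos.

    Assumed model: subscriptions are billed for every month the run spans,
    plus a marginal cost for each upload.
    """
    months = math.ceil(uploads / uploads_per_month)
    return (monthly_fixed * months + per_upload * uploads) / uploads


# Hypothetical rigs: (monthly subscription, marginal cost per upload, uploads per month)
rigs = {
    "tts_stock_shorts": (0.0, 0.5, 20),
    "ai_imagery_narration": (40.0, 0.0, 12),
    "voiceover_broll_longform": (0.0, 15.0, 4),
}

for name, (fixed, marginal, cadence) in rigs.items():
    first = cost_per_upload(fixed, marginal, 1, cadence)
    thirty = cost_per_upload(fixed, marginal, 30, cadence)
    print(f"{name}: first upload {first:.2f}, average over 30 uploads {thirty:.2f}")
```

With these placeholder inputs the subscription-based rig looks most expensive on the first upload and lands in the middle across thirty, which is why the first-upload figure is a poor basis for choosing a rig.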

Can I start a faceless YouTube channel for free?

The platform side is free: opening a YouTube channel, uploading videos, and applying to the Partner Program cost nothing. The production side has a free path for some formats: screen-recorded explainers using OBS for capture and a free editor, TTS Shorts using a free-tier neural voice and a public-domain footage library, and slideshow-style fact stacks built in free presentation software. None of those are unique to faceless YouTube; the same free tooling has been available for years. The relevant constraint is sustainability across thirty uploads at format-consistent quality, not whether the rig is free for one upload. A free rig that produces ten uploads and then breaks on the eleventh because a paid tool was needed for a specific feature is worse for channel-level format clarity than a low-cost rig that runs the same way every upload.

Do I need to show my face on YouTube?

No. Faceless production has been mainstream on YouTube for years and the recommender does not penalize channels for the absence of a host on camera. What does matter is format consistency at the channel level. A face-on-camera channel and a TTS-narrated Shorts channel are two different recommender cohorts even in the same topic; switching between them inside a single channel is what the recommender penalizes, not the choice of one over the other. The decision the operator is actually making when they ask the face-vs-faceless question is which production rig they are committing to for the first 30 uploads. The rig matters; the surface-level binary of face or no-face is a downstream consequence.

What's the easiest faceless YouTube niche for beginners?

The honest answer is that no single niche is universally easiest, because difficulty depends on the operator's actual constraints. A niche that is easy for an operator with strong editorial taste and limited production tooling (curated historical fact stacks, narrative scary stories, original short essays read over still imagery) is hard for an operator without script-writing capacity. A niche that is easy for an operator with high-cadence templated production (quiz Shorts, list-of-X verticals, trivia formats) is hard for an operator without that throughput. The dedicated beginner-focused sister cluster page covers low-complexity entry points specifically — once that page is live, it will be the right starting reference. The shortcut diagnostic that survives the question is to pick the format-topic intersection where small breakouts are currently showing up under the public-data signals and where you can credibly produce thirty consecutive uploads in the format the breakouts are using, with the tooling you actually have today.

How long does it take a faceless channel to grow?

The first signal lands inside the first five uploads. If the combined view count across the first five videos clears 10,000 inside the first 30 days, the recommender is picking up the format and the channel has an early-traction trajectory. Sub-1,000 views per video across the first five suggests the format-topic fit is off, the thumbnails are not clicking, or the niche is saturated at the level the channel is operating. Reaching the YouTube Partner Program eligibility thresholds (1,000 subscribers and 4,000 watch hours in 12 months, or 1,000 subscribers and 10 million Shorts views in 90 days) is downstream of clearing the early-traction signal; channels that do not clear the first-5 sum gate inside the first 30 days are almost never the channels that hit the eligibility thresholds a year later. The relevant timeline for an operator is 30 days to first verdict, not 12 months to monetization eligibility.
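The two Partner Program paths quoted above can be written as a short check. This is a sketch under stated assumptions: the function name and return labels are hypothetical, and the thresholds are the ones quoted in this answer, which YouTube can revise.

```python
from typing import Optional


def ypp_path(subscribers: int, watch_hours_12mo: float,
             shorts_views_90d: int) -> Optional[str]:
    """Return which eligibility path a channel clears, or None.

    Thresholds are the ones quoted in the answer above; treat them as a
    snapshot rather than a permanent rule.
    """
    if subscribers < 1_000:
        return None  # both paths require 1,000 subscribers
    if watch_hours_12mo >= 4_000:
        return "watch-hours path"
    if shorts_views_90d >= 10_000_000:
        return "Shorts-views path"
    return None


# Made-up channel: enough subscribers, Shorts-heavy, little long-form watch time.
print(ypp_path(subscribers=1_400, watch_hours_12mo=350, shorts_views_90d=12_000_000))
```

Both paths share the subscriber floor, so a Shorts-heavy channel with large view counts but fewer than 1,000 subscribers still returns `None`.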

Do I need AI to start a faceless channel?

No. Faceless production predates the AI-tooling wave by years — TTS narration, stock footage edits, screen-recorded explainers, and voiceover documentary formats all worked before generative AI was widely available and all still work without it. AI tooling lowers per-upload production cost inside several format categories, which can let a solo operator publish at higher cadence; that cadence advantage is real and worth understanding (the sister cluster page on faceless YouTube channel ideas with AI covers it in detail). But AI is not a prerequisite. A faceless channel running human voiceover over B-roll footage clears the same three flagging gates as an AI-narrated Shorts channel when the underlying format-topic fit is right. The decision the operator is making is which production rig they can sustain for 30 uploads; whether that rig uses AI is a secondary question downstream of that primary commitment.

How many uploads should I publish before evaluating?

Three uploads is the floor for an evaluation; ten uploads is the more reliable read. The recommender needs a consistent format-topic signal across multiple uploads before it can train an audience model for the channel, and one upload is not enough signal to train against. Three same-format uploads is the minimum before the channel has presented a coherent format identity; ten uploads is when the recommender's audience model has had time to train, evaluate, and adjust. The operators who get this wrong in our scans either evaluate after one upload (which produces no usable signal either way) or never commit to a finish line at all (which converts the channel into an indefinite experiment). The seven-step sequence on this page sets the explicit finish line at three uploads for format-clarity verification and at 30 days for the deterministic-gate verdict.

Methodology / About this analysis

NicheBreakout's research relies entirely on YouTube Data API v3 public fields: channel age, subscriber count, video count, view count, video metadata, video publish dates, video duration, and recent video performance. No YouTube Analytics API access (which is channel-owner-only), no YouTube AdSense data (which is channel-owner-only), no scraping of authenticated dashboards, no production-stack inference at the tool layer, and no synthesized narratives describing why specific channels work. The seven-step sequence on this page is derived from observable patterns across the same scan that powers the main live library — no separate dataset, no inferred revenue metrics, no tool attribution.
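For readers who want to pull the same public fields themselves, the sketch below shows one way to read channel age and counts from the Data API v3 `channels` endpoint using only the standard library. It is an illustration, not NicheBreakout's scanner: the helper names are hypothetical, the sample payload is made up, and `fetch_channel` needs your own API key and network access.

```python
import json
from datetime import datetime, timezone
from urllib.parse import urlencode
from urllib.request import urlopen

API_URL = "https://www.googleapis.com/youtube/v3/channels"


def channels_url(channel_id: str, api_key: str) -> str:
    """Build a channels.list request for the snippet and statistics parts."""
    query = urlencode({"part": "snippet,statistics", "id": channel_id, "key": api_key})
    return f"{API_URL}?{query}"


def parse_channel(item: dict, now: datetime) -> dict:
    """Extract public fields from one channel resource."""
    stats = item["statistics"]  # the API returns these counts as strings
    published = datetime.fromisoformat(
        item["snippet"]["publishedAt"].replace("Z", "+00:00")
    )
    return {
        "age_days": (now - published).days,
        # subscriberCount can be absent when the channel hides it
        "subscribers": int(stats.get("subscriberCount", 0)),
        "videos": int(stats["videoCount"]),
        "views": int(stats["viewCount"]),
    }


def fetch_channel(channel_id: str, api_key: str) -> dict:
    """Network call; requires a valid API key."""
    with urlopen(channels_url(channel_id, api_key)) as resp:
        payload = json.load(resp)
    return parse_channel(payload["items"][0], datetime.now(timezone.utc))


# Offline example with a made-up channel resource.
sample_item = {
    "snippet": {"publishedAt": "2026-04-01T00:00:00Z"},
    "statistics": {"viewCount": "36000", "subscriberCount": "1200", "videoCount": "9"},
}
parsed = parse_channel(sample_item, datetime(2026, 5, 1, tzinfo=timezone.utc))
print(parsed)
```

Keeping parsing separate from the network call lets the field extraction be checked against a saved response without spending API quota.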

Original-research artifacts in this article: the seven-step ordered sequence with explicit finish lines, the format-before-topic argument applied as a procedural rule rather than as advice, the four-mode production taxonomy mapped to operator-fit constraints, the deterministic flagging methodology applied as the 30-day verdict, and the live niche-cluster snapshot. The procedural sequence reflects what we have observed across 2,082 scanned channels, not all of faceless YouTube. Author: Nicholas Major (Founder, NicheBreakout · Software engineer since 2011). Article last revised 2026-05-12.

Related research

- Faceless YouTube niches: the parent pillar covering faceless production modes and which faceless formats are producing breakouts across the broader cohort.

- Faceless YouTube channel ideas with AI: sister cluster covering the AI-tooling angle and the five-category production stack referenced in step three.

- YouTube automation niches: sister cluster covering the operator-workflow angle for agency-run multi-channel and AI-tooled solo models.

- Faceless YouTube niches for beginners: sister cluster covering beginner-friendly faceless niches with lower production-stack demands (planned).

- YouTube Shorts ideas without showing face: lateral cluster covering the Shorts-format intersection with faceless production.

- YouTube niche finder: pillar covering niche research across faceless, AI-assisted, and creator-led channels.

- YouTube niche validation checklist: cross-pillar checklist — the binary scorecard version of the validation step in this guide.

- How to do YouTube niche research: cross-pillar process guide covering the full niche-research workflow upstream of this sequence.

- YouTube Shorts trends: pillar covering Shorts-first publishing, the dominant format for new faceless rigs.

- YouTube channel research: pillar covering the broader channel-discovery category, useful for sourcing the three breakout channels step two requires.

- YouTube outlier finder: pillar covering the breakout-discovery framing applied to any channel type.

- Most profitable YouTube niches: sister cluster covering why per-niche profitability is not third-party-readable and what public-data signal does answer the niche-fitness question.

- AI story channels, Reddit story channels, history shorts channels, faceless storytelling channels, quiz channels: programmatic topic pages that pre-cluster current breakouts by format, useful as the input source for the validation step.

The Friday digest sends three current breakout channels every week — faceless and face-on-camera both — with format fingerprints and outbound YouTube links. Three named channels per week is enough raw input to run the validation step on multiple candidate intersections. The live library refreshes daily and surfaces channels currently inside the 30-day window across every niche cluster we scan. See pricing for the current tier; subscribe to the digest free.

End of cluster

Run the seven-step sequence against a current breakout cohort

Every channel card outbound-links to YouTube so a new operator can read off the format, cadence, and visual style the breakout is running before committing the rig. No income claims, no tool endorsements — public Data API only. The live under-30-day library is the paid workflow; the Friday digest is free.